Hot Posts

Realtek Outlines SSD Controller Roadmap: High-End PCIe 5.0 x4 Platform in the Works

While Realtek is best known in the enthusiast space for its peripheral controllers such as audio codecs and network controllers, the company also has a small-but-respectable SSD controller business that tends to fly under the radar due to its focus on entry-level and mainstream drives. But Realtek's stature in the SSD space is on the rise, as the company is planning not only new PCIe Gen5 SSD controllers, but also its first high-end, DRAM-equipped SSD controller.

For this year's Computex trade show, Realtek laid out a new SSD controller roadmap that calls for the company to release a trio of new SSD controllers over the next couple of years. First up is a new four-channel entry-level PCIe 4.0 controller, the RTS5776DL, which will be joined a bit later by a PCIe 5.0 variant, the RTS5781DL. But most interesting on Realtek's new roadmap is the final chip being planned: the eight-channel, DRAM-equipped RTS5782, which would be the company's first high-end SSD controller, capable of hitting sequential read rates as high as 14GB/second.

| Realtek NVMe SSD Controller Comparison | |||||||||

| RTS5782 | RTS5781DL | RTS5776DL | RTS5772DL | RTS5766DL | |||||

| Market Segment | High-End | Mainstream | Entry-Level | ||||||

| Error Correction | 4K LDPC | 2K LDPC | |||||||

| DRAM | DDR4, LPDDR4(X) | No | No | No | No | ||||

| Host Interface | PCIe 5.0 x4 | PCIe 5.0 x4 | PCIe 4.0 x4 | PCIe 4.0 x4 | PCIe 3.0 x4 | ||||

| NVMe Version | NVMe 2.0 | NVMe 2.0 | NVMe 2.0 | NVMe 1.4 | NVMe 1.4 | ||||

| NAND Channels, Interface Speed | 8 ch, 3600 MT/s | 4 ch, 3600 MT/s | 4 ch, 3600 MT/s | 8 ch, 1600 MT/s | 4 ch, 1200 MT/s | ||||

| Sequential Read | 14 GB/s | 10 GB/s | 7.4 GB/s | 6 GB/s | 3.2 GB/s | ||||

| Sequential Write | 12 GB/s | 10 GB/s | 7.4 GB/s | 6 GB/s | 2.2 GB/s | ||||

| 4KB Random Read IOPS | 2500k | 1400k | 1200k | - | - | ||||

| 4KB Random Write IOPS | 2500k | 1400k | 1200k | - | - | ||||
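As a rough sanity check on the table above, the following sketch compares each controller's aggregate NAND interface bandwidth with the usable bandwidth of its PCIe link. It assumes an 8-bit NAND bus (so 1 MT/s equals 1 MB/s per channel) and 128b/130b PCIe line encoding, and it ignores protocol overhead; the function and variable names are illustrative and do not come from Realtek.

```python
# Rough ceiling estimates for the controllers in the table above.
# Assumptions (not from Realtek): 8-bit NAND bus, 128b/130b PCIe line
# encoding, and no protocol overhead on either side.

PCIE_GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}  # GT/s per lane


def pcie_gb_per_s(gen: str, lanes: int = 4) -> float:
    """Usable link bandwidth in GB/s after 128b/130b encoding."""
    return PCIE_GT_PER_LANE[gen] * lanes * (128 / 130) / 8


def nand_gb_per_s(channels: int, mt_per_s: int) -> float:
    """Aggregate NAND interface bandwidth in GB/s (one byte per transfer)."""
    return channels * mt_per_s / 1000


# name: (PCIe generation, NAND channels, MT/s per channel, rated seq. read GB/s)
CONTROLLERS = {
    "RTS5782": ("5.0", 8, 3600, 14.0),
    "RTS5781DL": ("5.0", 4, 3600, 10.0),
    "RTS5776DL": ("4.0", 4, 3600, 7.4),
    "RTS5772DL": ("4.0", 8, 1600, 6.0),
    "RTS5766DL": ("3.0", 4, 1200, 3.2),
}


def summarize():
    """Return (name, link GB/s, NAND GB/s, rated read GB/s) per controller."""
    rows = []
    for name, (gen, channels, speed, rated) in CONTROLLERS.items():
        rows.append((name, pcie_gb_per_s(gen), nand_gb_per_s(channels, speed), rated))
    return rows


if __name__ == "__main__":
    for name, link, nand, rated in summarize():
        print(f"{name}: link {link:.2f} GB/s, NAND {nand:.1f} GB/s, rated read {rated} GB/s")
```

Under these assumptions, a PCIe 5.0 x4 link tops out at roughly 15.75 GB/s, so the RTS5782's 14 GB/s read rating sits close to what the host interface can carry, while its eight 3600 MT/s channels provide about 28.8 GB/s of raw NAND interface bandwidth.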

Diving a bit deeper into Realtek's roadmap, the RTS5776DL is a traditional DRAM-less PCIe Gen4 x4 controller with four NAND chann...

SSDs

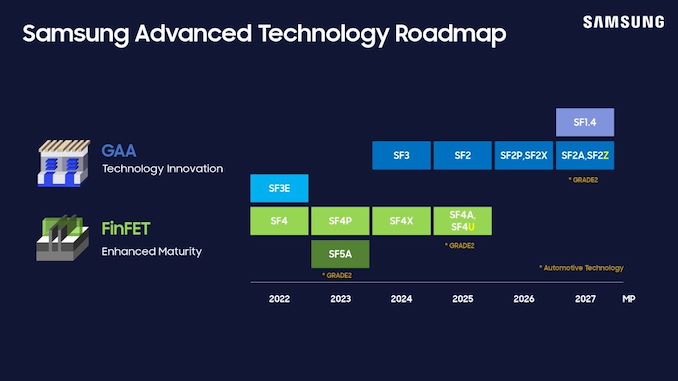

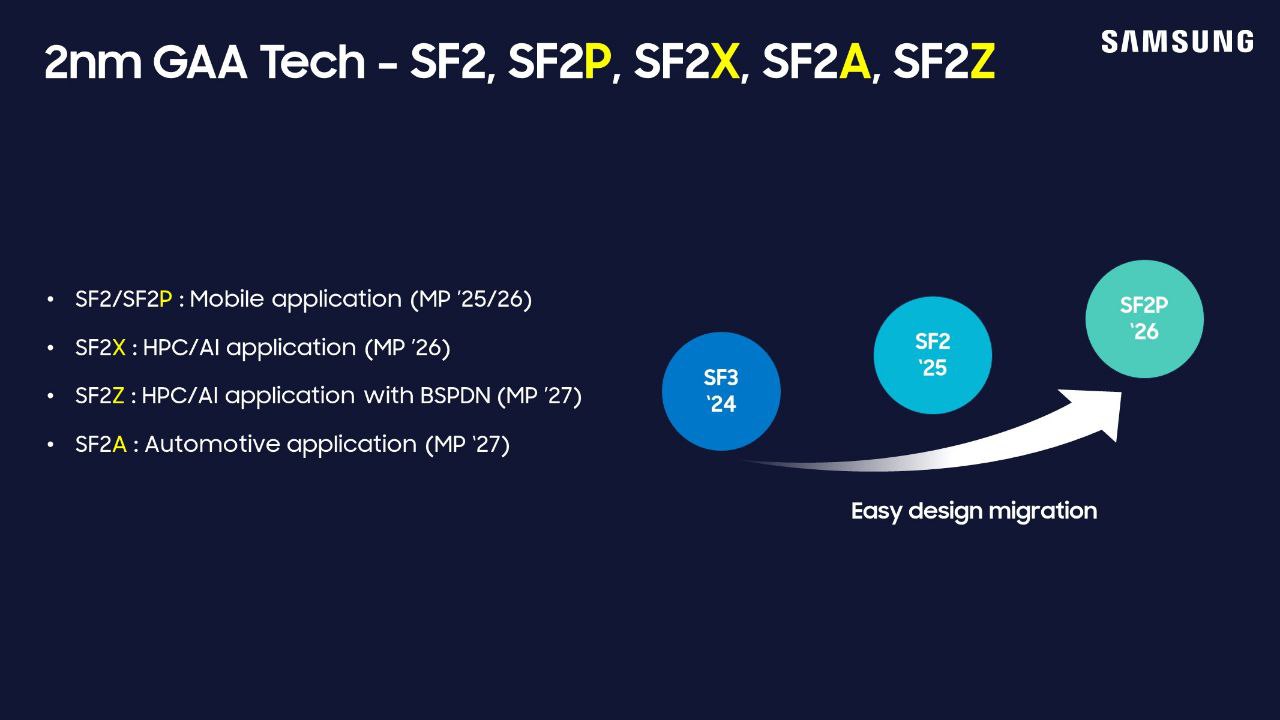

Samsung Foundry Unveils Updated Roadmap: BSPDN and 2nm Evolution Through 2027

Samsung this week unveiled its latest process technology roadmap at the company's Samsung Foundry Forum (SFF) U.S. The new plan covers the evolution of Samsung's 2nm-class production nodes through 2027, including a process technology with backside power delivery, a renewed commitment to bring out a 1.4nm-class node in 2027, and the introduction of a 'high value' 4nm-class manufacturing tech.

Samsung Foundry's key announcements for today are clearly focused on its 2nm-class process technologies, which are set to enter production in 2025 and will span to 2027, when the company's 1.4nm-class production node is set to enter the scene. Samsung is also adding (or rather, renaming) another 2nm-class node to their roadmap with SF2, which was previously disclosed by Samsung as SF3P and aimed at high-performance devices.

"We have refined and improved the SF3P, resulting in what we now refer to as SF2," a Samsung spokesperson told AnandTech. "This enhanced node incorporates various process design improvements, delivering notable power, performance, and area (PPA) benefits."

| Samsung Foundry Roadmap for Leading-Edge Nodes (announced June 12, 2024; compiled by AnandTech) | ||||||||

| HVM Start | 2023 | 2024 | 2025 | 2026 | 2027 | 2027 | ||

| Process | SF3E | SF3 | SF2 (aka SF3P) | SF2P/SF2X | SF2Z | SF1.4 | ||

| FET | GAAFET | |||||||

| Power Delivery | Frontside | Backside (BSPDN) | ? | |||||

| EUV | 0.33 NA EUV | ? | ? | ? | ? | |||

This is another example of a major chipmaker rebranding its leading-edge fabrication nodes in recent years. Samsung Foundry is not disclosing any specific PPA improvements SF2 has over SF3P, and for now is only stating in high-level terms that it will be a better-performing node than the planned SF3P.

Meanwhile, this week's announcement also includes new information on Samsung's next batch of process nodes, which are planned for 2026 and 2027. In 2026 Samsung will have SF2P, a further refinement of SF2 which incorporates 'faster' yet less dense transistors. That will be followed up in 2027 with SF2Z, which adds backside power delivery to the mix for cleaner, higher-quality power delivery. In particular, Samsung is targeting voltage drop (aka IR drop) here, which is an ongoing concern in chip design.

Finally, SF1.4, a 1.4nm-class node, is on track for 2027 as well. Interestingly, however, it does not appear to feature backside power delivery. Per current roadmaps, that would make Samsung the only foundry not using BSPDN for its first 1.4nm/14Å-class node.

"We have optimized BSPDN and incorporated it for the first time in the SF2Z node we announced today," the spokesperso...

Semiconductors

NVIDIA Closes Above $135, Becomes World’s Most Valuable Company

Thanks to the success of the burgeoning market for AI accelerators, NVIDIA has been on a tear this year. And the only place that’s even more apparent than the company’s rapidly growing revenues is in the company’s stock price and market capitalization. After breaking into the top 5 most valuable companies only earlier this year, NVIDIA has reached the apex of Wall Street, closing out today as the world’s most valuable company.

With a closing price of $135.58 on a day that saw NVIDIA’s stock pop up another 3.5%, NVIDIA has topped both Microsoft and Apple in valuation, reaching a market capitalization of $3.335 trillion. This follows a rapid rise in the company’s stock price, which has increased by 47% in the last month alone – particularly on the back of NVIDIA’s most recent estimates-beating earnings report – as well as a recent 10-for-1 stock split. And looking at the company’s performance over a longer time period, NVIDIA’s stock jumped a staggering 218% over the last year, or a mere 3,474% over the last 5 years.

NVIDIA’s ascension continues a trend over the last several years of tech companies all holding the top spots in the market capitalization rankings. Though this is the first time in quite a while that the traditional tech leaders of Apple and Microsoft have been pushed aside.

| Market Capitalization Rankings | ||

| | Market Cap | Stock Price |

| NVIDIA | $3.335T | $135.58 |

| Microsoft | $3.317T | $446.34 |

| Apple | $3.285T | $214.29 |

| Alphabet | $2.170T | $176.45 |

| Amazon | $1.902T | $182.81 |

Driving the rapid growth of NVIDIA and its market capitalization has been demand for the company’s AI accelerators, particularly its server-grade H100, H200, and GH200 accelerators for AI training. As the demand for these products has spiked, NVIDIA has been scaling up accordingly, repeatedly beating market expectations for how many of the accelerators they can ship – and at what price they can sell them. And despite all that growth, orders for NVIDIA’s high-end accelerators are still backlogged, underscoring how NVIDIA still isn’t meeting the full demands of hyperscalers and other enterprises.

Consequently, NVIDIA’s stock price and market capitalization have been on a tear on the basis of these future expectations. With a price-to-earnings (P/E) ratio of 76.7 – more than twice that of Microsoft or Apple – NVIDIA is priced more like a start-up than a 30-year-old tech company. But then it goes without saying that most 30-year-old tech companies aren’t tripling their revenue in a single year, placing NVIDIA in a rather unique situation at this time.

Like the stock market itself, market capitalizations are highly volatile. And historically speaking, it’s far from guaranteed that NVIDIA will be able to hold the top spot for long, never mind day-to-day fluctuations. NVIDIA, Apple, and Microsoft’s valuations are all within $50 billion (about 1.5%) of each other, so for the moment at least, it’s still a tight race between all three companies. But no matter what happens from here, NVIDIA gets the exceptionally rare claim of having been the most valuable company in the world at some point.
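The gap between the top three companies can be checked directly against the table above. The short sketch below does that arithmetic using the closing market caps as published; the function name is illustrative.

```python
# Market caps in trillions of USD, taken from the rankings table above.
MARKET_CAP_T = {"NVIDIA": 3.335, "Microsoft": 3.317, "Apple": 3.285}


def top_three_spread(caps):
    """Return (spread in billions of USD, spread as a % of the largest cap)."""
    high = max(caps.values())
    low = min(caps.values())
    spread_billion = (high - low) * 1000
    spread_percent = (high - low) / high * 100
    return spread_billion, spread_percent


if __name__ == "__main__":
    billions, percent = top_three_spread(MARKET_CAP_T)
    print(f"Spread: ${billions:.0f} billion ({percent:.1f}% of the leader)")
```

With these figures, the spread between first and third place works out to about $50 billion, or roughly 1.5% of NVIDIA's valuation.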

(Carousel image courtesy MSN Money)

GPUs

Report: MediaTek Working on Arm-Based Processor for Windows PCs

As Qualcomm's exclusivity for Arm-powered processors for Windows PCs is reportedly coming to its end, other chipmakers are getting ready to offer their Arm-based system-on-chips for Windows computers. And, according to a new report from Reuters, MediaTek will be among the companies jumping into the Windows-on-Arm field, with plans to launch their first PC processor late next year.

MediaTek's system-on-chip for Windows PCs will rely on Arm's 'ready-made designs,' according to Reuters. Which in turn hints that MediaTek would be using Arm's compute sub-system (CSS) for client PCs, a building block designed to significantly speed up development of SoCs.

Given the vague nature of the Reuters report, however, which version of Arm's IP MediaTek might be using remains unclear, and the answer to that will largely hinge on timing. Arm refreshes its client cores and IP offerings yearly – typically announcing them to the public in May – with finished chips rolling out as early as later in the year. So depending on just how late in the year MediaTek is planning to launch their chip, the company has a large enough window to potentially use either the current 2024 client designs, or next year's 2025 designs.

For reference, Arm's 2024 CSS for client systems is quite powerful on its own. It includes two ultra-high-performance Arm Cortex-X925 cores (each with up to 3MB L2 cache and clock speeds over 3.60 GHz, supporting SVE and SVE2), four high-performance Cortex-A725 cores, two energy-efficient Cortex-A520 cores, and an Immortalis-G925 graphics processor. And, of course, MediaTek has the expertise to skip Arm's CSS and build their own bespoke designs as well, if that's what they'd prefer.

Overall, the latest client designs from Arm can accommodate up to 14 CPU cores – Arm intentionally leaves headroom for designs to be scaled-up for laptops – which would make for quite a formidable chip. But the PC SoC market has no shortage of capable contenders with their own designs; besides Qualcomm's Snapdragon X processors, MediaTek would also be going up against the latest designs from Intel and AMD, all of whom are planning to make big plays for the mobile PC market in the next several months. So MediaTek will need to bring a serious product if its jump into the PC SoC market is to succeed.

Since 2016, Microsoft has partnered with Qualcomm to bring Arm's processor architecture, which is widely used in smartphones, to Windows PCs. Qualcomm has an exclusive agreement to supply these chips for the next several months (the exact timing remains unclear), after which other designers like MediaTek can enter the market. Qualcomm, for its part, has benefited greatly from collaborating with Microsoft, so it will be interesting to see if Microsoft extends a similar hand out to other Arm chip makers.

Ultimately, the market for Arm PC SoCs has the potential to get crowded quickly. According to previous reports from Reuters, both AMD and NVIDIA are also developing Arm-based chips for Windows. So if all of those projects come to fruition, there could potentially be several Arm SoCs available to PC manufacturers around the same time. All of which would be a massive change from the past 20 years of the PC, where Intel and AMD have been the entire market.

Both MediaTek and Microsoft have declined to comment on the ongoing developments, the news agency states.

CPUs

NVIDIA's AD102 GPU Pops Up in MSI GeForce RTX 4070 Ti Super Cards

As GPU families enter the later part of their lifecycles, we often see chip manufacturers start to offload stockpiles of salvaged chips that, for one reason or another, didn't make the grade for the tier of cards they normally are used in. These recovered chips are fairly unremarkable overall, but they are unsold silicon that still works and has economic value, leading to them being used in lower-tier cards so that they can be sold. And, judging by the appearance of a new video card design from MSI, it looks like NVIDIA's Ada Lovelace generation of chips has reached that stage, as the Taiwanese video card maker has put out a new GeForce RTX 4070 Ti Super card based on a salvaged AD102 GPU.

Typically based on NVIDIA's AD103 GPU, the GeForce RTX 4070 Ti Super series sits a step below the company's flagship RTX 4080 and RTX 4090 cards, the latter of which is based on the bigger and badder AD102 chip. But with some number of AD102 chips inevitably failing to live up to RTX 4090 specifications, rather than being thrown out, these chips can instead be used to make RTX 4070 Ti Super cards. Which is exactly what MSI has done with their new GeForce RTX 4070 Ti Super Ventus 3X Black OC graphics card.

The card itself is relatively unremarkable – using a binned AD102 chip doesn't come with any advantages, and it should perform just like regular AD103 cards – and for that reason, video card vendors rarely publicly note when they're doing a run of cards with a binned-down version of a bigger chip. However, these larger chips have a tell-tale PCB footprint that usually makes it obvious what's going on. Which, as first noticed by @wxnod, is exactly what's going on with MSI's card.

Ada Lovelace Lineup: MSI GeForce RTX 4070 TiS (AD103), RTX 4070 TiS (AD102), & RTX 4090 (AD102)

The tell, in this case, is the rear board shot provided by MSI. The larger AD102 GPU uses a correspondingly larger mounting bracket, and is paired with a slightly more complex array of filtering capacitors on the back side of the PCB. Since these are visible in MSI's photos of their GeForce RTX 4070 Ti Super Ventus 3X Black OC, it's easy to compare it to other video cards and see that it has exactly the same capacitor layout as MSI's GeForce RTX 4090, thus confirming the use of an AD102 GPU.

Chip curiosities aside, all of NVIDIA's GeForce RTX 4070 Ti Super graphics cards – no matter whether they are based on the AD102 or AD103 GPU – come with a GPU with 8,448 active CUDA cores and 16 GB of GDDR6X memory, so it doesn't (typically) matter which chip they carry. Otherwise, compared to a fully-enabled AD102 chip, the RTX 4070 Ti Super specifications are relatively modest, with fewer than half as many CUDA cores, underscoring how the AD102 chip being used in MSI's card is a pretty heavy salvage bin.
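To put the "fewer than half" figure in numbers, the sketch below computes the share of each die's CUDA cores that remains active on an RTX 4070 Ti Super. The 8,448-core figure comes from the text above; the full-die core counts (18,432 for AD102 and 10,240 for AD103) are NVIDIA's published figures for fully enabled chips and are not stated in the article itself.

```python
# Active CUDA cores on every RTX 4070 Ti Super (from the article).
RTX_4070_TI_SUPER_CORES = 8_448

# Fully enabled die configurations (published NVIDIA figures, not from the article).
FULL_DIE_CUDA_CORES = {"AD102": 18_432, "AD103": 10_240}


def enabled_fraction(die: str) -> float:
    """Fraction of the die's CUDA cores left active on an RTX 4070 Ti Super."""
    return RTX_4070_TI_SUPER_CORES / FULL_DIE_CUDA_CORES[die]


if __name__ == "__main__":
    for die in FULL_DIE_CUDA_CORES:
        print(f"{die}: {enabled_fraction(die):.1%} of CUDA cores active")
```

On those numbers, an AD102-based card runs with a bit under 46% of the die's CUDA cores enabled, versus about 82.5% for the usual AD103-based card.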

As for the rest of the card, MSI's GeForce RTX 4070 Ti Super Ventus 3X Black OC is a relatively hefty card overall, with a cooling system to match. Being overclocked, the Ventus also has a slightly higher TDP than normal GeForce RTX 4070 Ti Super cards, weighing in at 295 Watts, or 10 Watts above baseline cards.

Meanwhile, MSI is apparently not the only video card manufacturer using salvaged AD102 chips for GeForce RTX 4070 Ti Super, either. @wxnod has also posted a screenshot obtained on an Inno3D GeForce RTX 4070 Ti Super based on an AD102 GPU.

GPUs

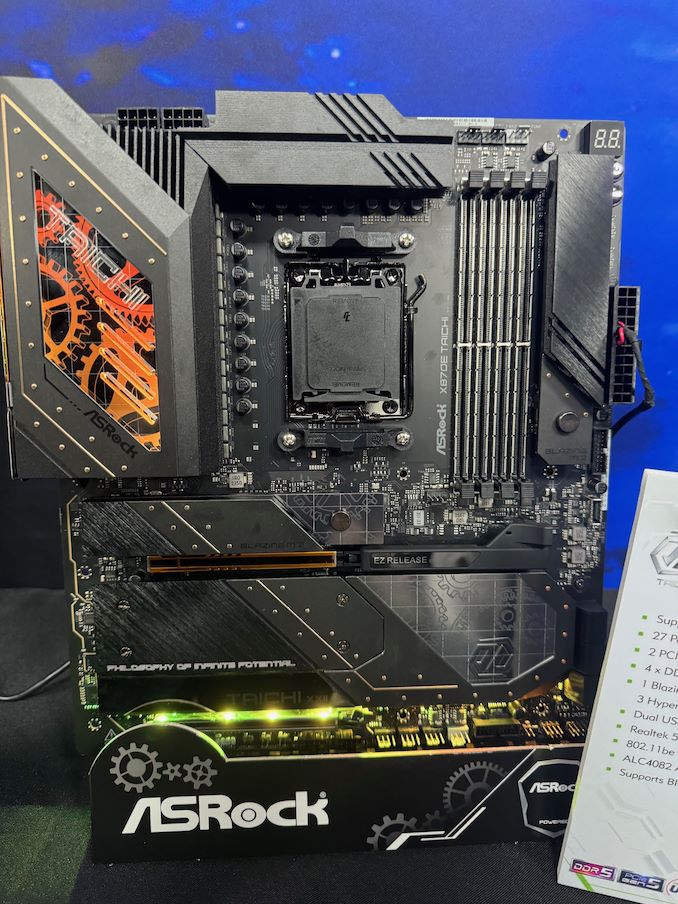

ASRock Unveils Motherboards For Ryzen 9000 At Computex 2024: X870E Taichi and X870E Taichi Lite

During Computex 2024, ASRock held an event to unveil some of its upcoming X870E motherboards, designed for AMD's Zen 5-based Ryzen 9000 series processors. ASRock's announcement includes a pair of Taichi-branded boards, the X870E Taichi and the lighter X870E Taichi Lite, both of which use AMD's X870E (Promontory 21) chipset for AM5.

The current flagship model announced from ASRock's X870E line-up for Ryzen 9000 is the ASRock X870E Taichi. ASRock is advertising a large 27-phase power delivery through 110A SPS, suggesting this board is designed for overclockers and all-around power users. Two PCIe 5.0 x16 slots (operating in either x16/x0 or x8/x8) provide high-speed bandwidth for cutting-edge graphics cards and other devices. Meanwhile, ASRock has gone with 4 DIMM slots on this board, so system builders will be able to max out the board's memory capacity at the cost of bandwidth.

The storage offering is impressive; besides the obligatory PCIe Gen5 x4 M.2 slot (Blazing M.2), ASRock has outfitted the board with another three PCIe Gen4 x4 (Hyper) M.2 slots. Also present are two USB4 Type-C ports for high-bandwidth external I/O, while networking support is a solid pairing of a discrete Wi-Fi 7 controller with a Realtek 5Gb Ethernet controller (making this the first AM5 board we've come across with something faster than a 2.5GbE controller).

The audio setup includes a Realtek ALC4082 codec and ESS SABRE9218 DAC supporting high-fidelity sound. The BIOS flashback feature, which provides an easy way to update the firmware without installing a CPU, is also a nice touch, and we believe it should be a feature on all mid-range to high-end motherboards. And, as no high-end board would be complete without it, ASRock has put RGB lighting on the X870E Taichi as well.

Ultimately, as ASRock's high-end X870E board, the X870E Taichi comes with pretty much every last cutting-edge technology that ASRock can fit on the board.

Comparatively, the ASRock X870E Taichi Lite is a more streamlined and functional version of the X870E Taichi. The Lite retains all of the latter's key features, including the 27-phase power delivery with 110A smart power stages, dual PCIe 5.0 x16 slots operating at x16 or x8/x8, four DDR5 DIMM slots, and four M.2 slots (1x Gen5 + 3x Gen4). The only significant difference is aesthetics: the Taichi Lite features a simpler silver-themed design without the RGB lighting, while the standard Taichi has a more intricate, gold-accented look.

In terms of availability, ASRock is not disclosing a release date for the boards at the show. And, checking around with other tech journalists, Andreas Schilling from HardwareLUXX has heard that X870E and X870 motherboards aren't expected to be available in time for the Ryzen 9000 series launch. We will investigate this and contact the motherboard vendors to confirm the situation. Though as X870E/X870 boards barely differ from the current crop of X670E/B650E boards to begin with, the Ryzen 9000 series won't be fazed by a lack of slightly newer motherboards.

Motherboards

Micron: U.S. Fabs Will Start Operating in 2026 - 2029

When Micron announced plans to build two new fabs in the U.S. in 2022, the company vaguely said both would come online by the decade's end. Then, in 2023, it began to optimize its spending, which pushed back production at these fabrication facilities. This week, the company outlined more precise timeframes for when its fabs in Idaho and New York will start operations: this will happen from calendar 2026 to calendar 2029.

"These fab construction investments are necessary to support supply growth for the latter half of this decade," a statement by Micron in its Q3 FY2024 financial results report reads. "This Idaho fab will not contribute to meaningful bit supply until fiscal 2027 and the New York construction capex is not expected to contribute to bit supply growth until fiscal 2028 or later. The timing of future [wafer fab equipment] spend in these fabs will be managed to align supply growth with expected demand growth."

Micron's fiscal year 2027 starts in September 2026, so the new fab near Boise, Idaho, is set to start operations between September 2026 and September 2027. The company's fiscal 2028 starts in September 2027, so the New York fab will likely begin operations in calendar 2028 or later, probably depending on the demand for DRAM in the coming years. All told, Micron's U.S. memory fabs will begin operations between late 2026 and 2029, which aligns with the company's original plans.
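The fiscal-to-calendar mapping used above can be sketched in a few lines. This is a simplified illustration that assumes each fiscal year begins on September 1 of the preceding calendar year, as the paragraph above does; Micron's actual fiscal calendar boundaries may differ by some days, and the function name is hypothetical.

```python
from datetime import date


def fiscal_year_window(fiscal_year: int, start_month: int = 9):
    """Approximate calendar window for a fiscal year.

    Assumes (as a simplification) that fiscal year N runs from the first day
    of `start_month` in calendar year N-1 up to, but not including, the same
    day in calendar year N.
    """
    start = date(fiscal_year - 1, start_month, 1)
    end_exclusive = date(fiscal_year, start_month, 1)
    return start, end_exclusive


if __name__ == "__main__":
    for fy in (2027, 2028):
        start, end = fiscal_year_window(fy)
        print(f"FY{fy}: {start:%B %Y} to {end:%B %Y}")
```

Under that assumption, fiscal 2027 spans September 2026 to September 2027, and fiscal 2028 begins in September 2027.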

Construction of the fab in Idaho is well underway. In contrast, construction of the New York facility has yet to begin as the company is working on regulatory and permitting processes in the state.

Micron's capital expenditure (CapEx) plan for FY2024 is approximately $8.0 billion, with a decrease in year-over-year spending on wafer fabrication equipment (WFE). In Q4 FY2024, the company will spend around $3 billion on fab construction, new wafer fab tools, and various expansions/upgrades.

Looking ahead to FY2025, the company plans a substantial increase in CapEx, targeting a mid-30s percentage of revenue to support various technological and facility advancements. In particular, it expects its quarterly CapEx to average above the $3 billion level seen in the fourth quarter of FY2024, which implies spending of roughly $12 billion or more in its fiscal 2025, which begins in late September.

Half or more of the total CapEx increase in FY2025 (i.e., over $2 billion) will be allocated to constructing new fabs in Idaho and New York. Meanwhile, the FY2025 CapEx will significantly rise to fund high-bandwidth memory (HBM) assembly and testing and the construction of fabrication and back-end facilities. This increase also includes investments in technology transitions to meet growing demand.
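The CapEx figures above follow from simple arithmetic, sketched below. The calculation treats "above $3 billion per quarter" as exactly $3 billion to get a floor estimate, which is an assumption made here for illustration; the names are illustrative.

```python
# Figures from the article, in billions of USD.
FY2024_CAPEX_B = 8.0
Q4_FY2024_CAPEX_B = 3.0  # FY2025 quarterly CapEx is expected to average above this


def fy2025_capex_floor(quarterly_avg_b: float = Q4_FY2024_CAPEX_B, quarters: int = 4) -> float:
    """Lower-bound FY2025 CapEx if every quarter merely matches the Q4 FY2024 level."""
    return quarterly_avg_b * quarters


def fab_construction_share(fy2025_b: float, fy2024_b: float = FY2024_CAPEX_B) -> float:
    """Half of the year-over-year CapEx increase, the minimum slated for new U.S. fabs."""
    return (fy2025_b - fy2024_b) / 2


if __name__ == "__main__":
    floor = fy2025_capex_floor()
    print(f"FY2025 CapEx floor: ${floor:.0f}B")
    print(f"Year-over-year increase: ${floor - FY2024_CAPEX_B:.0f}B")
    print(f"At least ${fab_construction_share(floor):.0f}B for Idaho and New York fabs")
```

This reproduces the article's numbers: about $12 billion for FY2025, a $4 billion increase over FY2024, and at least $2 billion of that increase going to the new fabs.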

"Fab construction in Idaho is underway, and we are working diligently to complete the regulatory and permitting processes in New York," said Sanjay Mehrotra, chief executive officer of Micron, at the company's conference call with investors and financial analysts (via SeekingAlpha). "This additional leading-edge greenfield capacity, along with continued technology transition investments in our Asia facilities, is required to meet long-term demand in the second half of this decade and beyond. These investments support our objective to maintain our current bit share over time and to grow our memory bit supply in line with long-term industry bit demand."

Memory

The Enermax Revolution D.F. 12 750W ATX 3.1 PSU Review: Compact Contender

Enermax, established in 1990, is a renowned Taiwanese company in the PC hardware industry, particularly recognized for its innovative power supply units (PSUs), cooling solutions, and PC cases. Over the years, Enermax has built a reputation for engineering reliable, high-performance PSUs that primarily target enthusiasts and professional users. Their commitment to quality and technological advancement has kept them at the forefront of the industry, constantly evolving to meet the demands of the ever-changing tech landscape.

In this review, we are looking at the latest addition to Enermax's impressive PSU lineup: the Revolution D.F. 12 750W PSU. This is the second ATX 3.1-compliant power supply to arrive in our labs, and, broadly speaking, illustrates how we're approaching the inflection point for PSU vendors to update their designs for Intel's revised PSU standard.

As for the Revolution D.F. 12 itself, Enermax's new PSU pursues a balanced design, meeting modern gaming PCs mid-way with good conversion efficiency and an overall robust power delivery system. With features like fully modular cables with per-wire sleeving, a dynamic hybrid fan control for optimal cooling, and advanced topologies, the Revolution D.F. 12 750W is primed to deliver on both reliability and performance. We will delve into its specifications, build quality, and performance metrics to see if the new unit lives up to Enermax's esteemed legacy.

Cases/Cooling/PSUs

CUDIMM Standard Set to Make Desktop Memory a Bit Smarter and a Lot More Robust

While the new CAMM and LPCAMM memory modules for laptops have garnered a great deal of attention in recent months, it's not just the mobile side of the PC memory industry that is looking at changes. The desktop memory market is also coming due for some upgrades to further improve DIMM performance, in the form of a new DIMM variety called the Clocked Unbuffered DIMM (CUDIMM). And while this memory isn't in use quite yet, several memory vendors had their initial CUDIMM products on display at this year's Computex trade show, offering a glimpse into the future of desktop memory.

A variation on traditional Unbuffered DIMMs (UDIMMs), Clocked UDIMMs (and Clocked SODIMMs) have been created as another solution to the ongoing signal integrity challenges presented by DDR5 memory. DDR5 allows for rather speedy transfer rates with removable (and easily installed) DIMMs, but further performance increases are running up against the laws of physics when it comes to the electrical challenges of supporting memory on a stick – particularly with so many capacity/performance combinations like we see today. And while those challenges aren't insurmountable, if DDR5 (and eventually, DDR6) are to keep increasing in speed, some changes appear to be needed to produce more electrically robust DIMMs, which is giving rise to the CUDIMM.

Standardized by JEDEC earlier this year as JESD323, CUDIMMs tweak the traditional unbuffered DIMM by adding a clock driver (CKD) to the DIMM itself, with the tiny IC responsible for regenerating the clock signal driving the actual memory chips. By generating a clean clock locally on the DIMM (rather than directly using the clock from the CPU, as is the case today), CUDIMMs are designed to offer improved stability and reliability at high memory speeds, combating the electrical issues that would otherwise undermine reliability as speeds climb. In other words, adding a clock driver is the key to keeping DDR5 operating reliably at high clockspeeds.

All told, JEDEC is proposing that CUDIMMs be used for DDR5-6400 speeds and higher, with the first version of the specification covering speeds up to DDR5-7200. The new DIMMs will also be drop-in compatible with existing platforms (at least on paper), using the same 288-pin connector as today's standard DDR5 UDIMM and allowing for a relatively smooth transition towards higher DDR5 clockspeeds.
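The speed thresholds described above can be expressed as a small lookup, shown here as a minimal sketch. The 6400 and 7200 MT/s limits come from the article; the function names are hypothetical, and the bandwidth helper assumes a standard 64-bit (8-byte) data path per DDR5 DIMM, excluding ECC bits.

```python
CKD_MIN_SPEED = 6400       # MT/s; CUDIMMs proposed at this speed and above (from the article)
FIRST_SPEC_MAX_SPEED = 7200  # MT/s; upper bound of the first spec version (from the article)


def module_type(speed_mt_s: int) -> str:
    """Module type suggested by the speed thresholds described above."""
    return "CUDIMM" if speed_mt_s >= CKD_MIN_SPEED else "UDIMM"


def covered_by_first_spec(speed_mt_s: int) -> bool:
    """True if the speed falls inside the first CUDIMM specification's range."""
    return CKD_MIN_SPEED <= speed_mt_s <= FIRST_SPEC_MAX_SPEED


def peak_bandwidth_gb_s(speed_mt_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth per DIMM, assuming an 8-byte data path."""
    return speed_mt_s * bus_bytes / 1000


if __name__ == "__main__":
    for speed in (5600, 6400, 7200, 8000):
        print(speed, module_type(speed), covered_by_first_spec(speed),
              f"{peak_bandwidth_gb_s(speed):.1f} GB/s")
```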

Memory

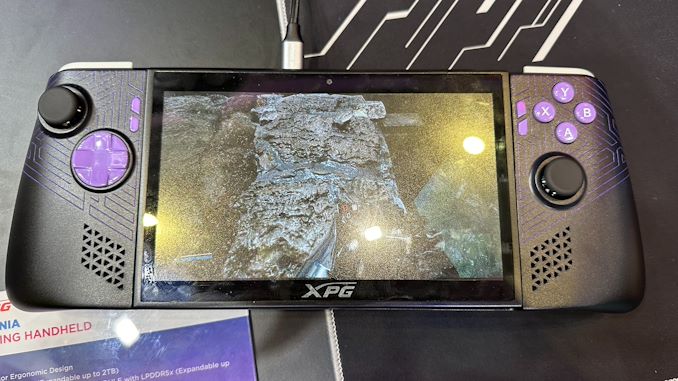

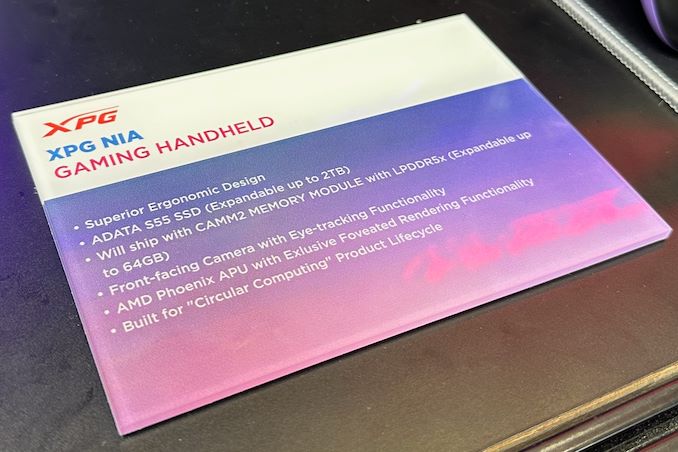

XPG Demos "Nia" Handheld Gaming PC With Foveated Rendering, Swappable DRAM

With the rise of the handheld gaming PC market, we've seen PC vendors and their partners toy with a number of tricks and tweaks to improve framerates in games, with some of their latest efforts on display at this year's Computex trade show. Perhaps the most interesting find thus far comes from ADATA sub-brand XPG, which is demoing its prototype "Nia" handheld PC, which uses eye tracking and dynamic foveated rendering to further improve rendering performance.

For those unfamiliar, dynamic foveated rendering is a graphics technique that is sometimes used to boost performance in virtual reality (VR) and augmented reality (AR) applications by taking advantage of how human vision works. Typically, humans can only perceive detailed imagery in the relatively small central area of our vision called the fovea, while our peripheral vision is much less detailed. Dynamic foveated rendering, in turn, exploits this by using real-time eye tracking to determine where the user is looking, and then rendering just that area in high/full resolution, while rendering the peripheral areas in lower resolution. The net result is that only a fraction of the screen is rendered at full detail, which cuts down on the total amount of rendering work required and boosts framerates on performance-limited devices.
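To give a feel for the potential savings, the sketch below estimates how much pixel-shading work remains when a circular region around the gaze point is rendered at full resolution and everything else at a reduced resolution. This is a simplified model for illustration only, not XPG's or Eyeware's implementation; the fovea radius and periphery scale are hypothetical parameters, and the model assumes the full-resolution circle lies entirely on screen.

```python
import math


def foveated_pixel_cost(width: int, height: int,
                        fovea_radius_px: float,
                        periphery_scale: float) -> float:
    """Fraction of full-resolution shading work left after foveation.

    A circle of `fovea_radius_px` around the gaze point is shaded at full
    resolution; the rest of the screen is shaded at `periphery_scale` times
    the linear resolution (so periphery_scale**2 times the pixel count).
    """
    total_px = width * height
    fovea_px = min(math.pi * fovea_radius_px ** 2, total_px)
    periphery_px = (total_px - fovea_px) * periphery_scale ** 2
    return (fovea_px + periphery_px) / total_px


if __name__ == "__main__":
    # Hypothetical example: 1080p panel, 300-pixel fovea radius,
    # periphery rendered at half resolution in each dimension.
    cost = foveated_pixel_cost(1920, 1080, fovea_radius_px=300, periphery_scale=0.5)
    print(f"Shading work vs. full resolution: {cost:.0%}")
```

In this hypothetical configuration, shading work drops to roughly 35% of a full-resolution frame. A larger fovea radius relative to the screen, as would be expected on a handheld held at arm's length, shrinks that saving.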

As stated before, this technology is sometimes used in high-end AR/VR headsets, where high resolution displays are placed mere inches from one's face. This ends up being an ideal use case for the technique, since at those distances, only a small fraction of the screen is within the fovea.

Using dynamic foveated rendering for a handheld, on the other hand, is a more novel application. All of the same visual principles apply, but the resolutions at play are lower, and the screen is farther from the user's eyes. This makes a handheld device a less ideal use case, at least on paper, as a larger portion of the screen is going to be in the fovea, and thus will need to be rendered at full resolution. Nonetheless, it will be interesting to see how XPG's efforts pan out, and if dynamic foveated rendering is beneficial enough for handheld PCs. As we sometimes see with trade show demos, not everything makes it out of the prototype stage.
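The distance argument can be illustrated with a crude geometric sketch. It treats the full-resolution region as a circle subtending a fixed visual angle on a flat screen, ignores clipping at the screen edges and any headset optics, and uses screen dimensions, viewing distances, and a 20-degree region that are all hypothetical values chosen for illustration rather than anything XPG or Eyeware has disclosed.

```python
import math


def foveal_area_fraction(width_cm, height_cm, distance_cm, full_res_angle_deg):
    """Fraction of a flat screen covered by a circular full-resolution region.

    The region is modeled as a circle subtending full_res_angle_deg at the eye.
    Clipping at the screen edges is ignored, so this is only a rough estimate.
    """
    radius_cm = distance_cm * math.tan(math.radians(full_res_angle_deg / 2))
    circle_area = math.pi * radius_cm ** 2
    return min(1.0, circle_area / (width_cm * height_cm))


def relative_render_cost(fraction_full_res, peripheral_scale):
    """Pixel work relative to rendering the whole screen at full resolution.

    peripheral_scale is the per-axis resolution scale used outside the
    full-resolution region (0.5 means half resolution in each dimension).
    """
    return fraction_full_res + (1 - fraction_full_res) * peripheral_scale ** 2


# Hypothetical 15.1 cm x 9.4 cm panel viewed from two different distances.
for distance in (5, 35):
    fraction = foveal_area_fraction(15.1, 9.4, distance, full_res_angle_deg=20)
    cost = relative_render_cost(fraction, peripheral_scale=0.5)
    print(f"{distance} cm: {fraction:.1%} at full resolution, relative cost {cost:.2f}")
```

Under these assumptions, the same panel held farther away has far more of its area inside the full-resolution circle, which is why the savings on a handheld are expected to be smaller than in a headset.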

According to a press release put out by ADATA ahead of the trade show, the eye tracking technology is being provided by AMD collaborator Eyeware. Notably, their software-based approach runs on top of standard webcams, rather than requiring IR cameras. So the camera hardware itself should be pretty straightforward.

Foveated rendering aside, XPG is making sure that the Nia won't be a one-trick pony. The handheld's other major claim to fame is its hardware swappability. The prototype handheld not only features a removable M.2-2230 SSD, but the company is also taking advantage of the recently-introduced LPCAMM2 memory module standard to introduce removable DRAM. Via a hatch in the back of the handheld, device owners would be able to swap out LPCAMM2 LPDDR5X modules for higher capacity versions. This would give the handheld an additional degree of future-proofing over current handhelds, which use non-replaceable soldered-down memory.

Rounding out the package, the current prototype is based on AMD's Zen 4 Phoenix APU, which is used across both of the company's current mobile lines (Ryzen Mobile 7000/8000 and Ryzen Z1). Meanwhile, the unit's display is adjustable, allowing it to be angled away from the body of the handheld.

Assuming all goes well with the prototype, XPG aims to release a finished product in 2025.

ADATA

The Enermax Revolution D.F. 12 750W ATX 3.1 PSU Review: Compact Contender

Enermax, established in 1990, is a renowned Taiwanese company in the PC hardware industry, particularly recognized for its innovative power supply units (PSUs), cooling solutions, and PC cases. Over the years, Enermax has built a reputation for engineering reliable, high-performance PSUs that primarily target enthusiasts and professional users. Their commitment to quality and technological advancement has kept them at the forefront of the industry, constantly evolving to meet the demands of the ever-changing tech landscape.

In this review, we are looking at the latest addition to Enermax's impressive PSU lineup: the Revolution D.F. 12 750W PSU. This is the second ATX 3.1-compliant power supply to arrive in our labs, and, broadly speaking, illustrates how we're approaching the inflection point for PSU vendors to update their designs for Intel's revised PSU standard.

As for the Revolution D.F. 12 itself, Enermax's new PSU pursues a balanced design, meeting modern gaming PCs mid-way with good conversion efficiency and an overall robust power delivery system. With features like fully modular cables with per-wire sleeving, a dynamic hybrid fan control for optimal cooling, and advanced topologies, the Revolution D.F. 12 750W is primed to deliver on both reliability and performance. We will delve into its specifications, build quality, and performance metrics to see if the new unit lives up to Enermax's esteemed legacy.

Cases/Cooling/PSUs

Realtek Outlines SSD Controller Roadmap: High-End PCIe 5.0 x4 Platform in the Works

While Realtek is best known in the enthusiast space for its peripheral controllers such as audio codecs and network controllers, the company also has a small-but-respectable SSD controller business that tends to fly under the radar due to its focus on entry-level and mainstream drives. But Realtek's stature in the SSD space is on the rise, as the company is not only planning new PCIe Gen5 SSD controllers, but also their first high-end, DRAM-equipped SSD controller.

For this year's Computex trade show, Realtek laid out a new SSD controller roadmap that calls for the company to release a trio of new SSD controllers over the next couple of years. First up is a new four-channel entry-level PCIe 4.0 controller, the RTS5776DL, which will be joined a bit later by a PCIe 5.0 variant, the RTS5781DL. But most interesting on Realtek's new roadmap is the final chip being planned: the eight-channel, DRAM-equipped RTS5782, which would be the company's first high-end SSD controller, capable of hitting sequential read rates as high as 14GB/second.

Realtek NVMe SSD Controller Comparison

| | RTS5782 | RTS5781DL | RTS5776DL | RTS5772DL | RTS5766DL |
|---|---|---|---|---|---|
| Market Segment | High-End | Mainstream | Entry-Level | | |
| Error Correction | 4K LDPC | 2K LDPC | | | |
| DRAM | DDR4, LPDDR4(X) | No | No | No | No |
| Host Interface | PCIe 5.0 x4 | PCIe 5.0 x4 | PCIe 4.0 x4 | PCIe 4.0 x4 | PCIe 3.0 x4 |
| NVMe Version | NVMe 2.0 | NVMe 2.0 | NVMe 2.0 | NVMe 1.4 | NVMe 1.4 |
| NAND Channels, Interface Speed | 8 ch, 3600 MT/s | 4 ch, 3600 MT/s | 4 ch, 3600 MT/s | 8 ch, 1600 MT/s | 4 ch, 1200 MT/s |
| Sequential Read | 14 GB/s | 10 GB/s | 7.4 GB/s | 6 GB/s | 3.2 GB/s |
| Sequential Write | 12 GB/s | 10 GB/s | 7.4 GB/s | 6 GB/s | 2.2 GB/s |
| 4KB Random Read IOPS | 2500k | 1400k | 1200k | - | - |
| 4KB Random Write IOPS | 2500k | 1400k | 1200k | - | - |

Diving a bit deeper into Realtek's roadmap, the RTS5776DL is a traditional DRAM-less PCIe Gen4 x4 controller with four NAND chann...

SSDs

Report: MediaTek Working on Arm-Based Processor for Windows PCs

As Qualcomm's exclusivity for Arm-powered processors for Windows PCs is reportedly coming to an end, other chipmakers are getting ready to offer their Arm-based system-on-chips for Windows computers. And, according to a new report from Reuters, MediaTek will be among the companies jumping into the Windows-on-Arm field, with plans to launch their first PC processor late next year.

MediaTek's system-on-chip for Windows PCs will rely on Arm's 'ready-made designs,' according to Reuters. Which in turn hints that MediaTek would be using Arm's compute sub-system (CSS) for client PCs, a building block designed to significantly speed up development of SoCs.

With the vague nature of the Reuters report, however, which version of Arm's IP MediaTek might be using remains unclear, and the answer to that will largely hinge on timing. Arm refreshes its client cores and IP offerings yearly – typically announcing them to the public in May – with finished chips rolling out as early as later in the year. So depending on just how late in the year MediaTek is planning to launch their chip, the company has a large enough window to potentially use either the current 2024 client designs, or next year's 2025 designs.

For reference, Arm's 2024 CSS for client systems is quite powerful on its own. It includes two ultra-high-performance Arm Cortex-X925 cores (each with up to 3MB L2 cache and clock speeds over 3.60 GHz, supporting SVE and SVE2), four high-performance Cortex-A725 cores, two energy-efficient Cortex-A520 cores, and an Immortalis-G925 graphics processor. And, of course, MediaTek has the expertise to skip Arm's CSS and build their own bespoke designs as well, if that's what they'd prefer.

Overall, the latest client designs from Arm can accommodate up to 14 CPU cores – Arm intentionally leaves headroom for designs to be scaled-up for laptops – which would make for quite a formidable chip. But the PC SoC market has no shortage of capable contenders with their own designs; besides Qualcomm's Snapdragon X processors, MediaTek would also be going up against the latest designs from Intel and AMD, all of whom are planning to make big plays for the mobile PC market in the next several months. So MediaTek will need to make a serious effort if their jump into the PC SoC market is to succeed.

Since 2016, Microsoft has partnered with Qualcomm to bring Arm's processor architecture, which is widely used in smartphones, to Windows PCs. Qualcomm has an exclusive agreement to supply these chips for the next several months (the exact timing remains unclear), after which other designers like MediaTek can enter the market. Qualcomm, for its part, has benefited greatly from collaborating with Microsoft, so it will be interesting to see if Microsoft extends a similar hand out to other Arm chip makers.

Ultimately, the market for Arm PC SoCs has the potential to get crowded quickly. According to previous reports from Reuters, both AMD and NVIDIA are also developing Arm-based chips for Windows. So if all of those projects come to fruition, there could potentially be several Arm SoCs available to PC manufacturers around the same time. All of which would be a massive change from the past 20 years of the PC, where Intel and AMD have been the entire market.

Both MediaTek and Microsoft have declined to comment on the ongoing developments, the news agency states.

CPUs

ASRock Unveils Motherboards For Ryzen 9000 At Computex 2024: X870E Taichi and X870E Taichi Lite

During Computex 2024, ASRock held an event to unveil some of its upcoming X870E motherboards, designed for AMD's Zen 5-based Ryzen 9000 series processors. ASRock's announcement includes a pair of Taichi-branded boards, the X870E Taichi and the lighter X870E Taichi Lite, both of which use AMD's X870E (Promontory 21) chipset for AM5.

The current flagship model announced from ASRock's X870E line-up for Ryzen 9000 is the ASRock X870E Taichi. ASRock is advertising a large 27-phase power delivery through 110A SPS, suggesting this board is designed for overclockers and all-around power users. Two PCIe 5.0 x16 slots (operating in either x16/x0 or x8/x8) provide high-speed bandwidth for cutting-edge graphics cards and other devices. Meanwhile, ASRock has gone with 4 DIMM slots on this board, so system builders will be able to max out the board's memory capacity at the cost of bandwidth.

The storage offering is impressive; besides the obligatory PCIe Gen5 x4 M.2 slot (Blazing M.2), ASRock has outfitted the board with another three PCIe Gen4 x4 (Hyper) M.2 slots. Also present are two USB4 Type-C ports for high-bandwidth external I/O, while networking support is a solid pairing of a discrete Wi-Fi 7 controller with a Realtek 5Gb Ethernet controller (making this the first AM5 board we've come across with something faster than a 2.5GbE controller).

The audio setup includes a Realtek ALC4082 codec and ESS SABRE9218 DAC supporting high-fidelity sound. The BIOS flashback feature is also a nice touch, as it provides an easy way to update the firmware without installing a CPU; we believe it should be a feature on all mid-range to high-end motherboards. And, as no high-end board would be complete without it, ASRock has put RGB lighting on the X870E Taichi as well.

Ultimately, as ASRock's high-end X870E board, the X870E Taichi comes with pretty much every last cutting-edge technology that ASRock can fit on the board.

Comparatively, the ASRock X870E Taichi Lite is a more streamlined and functional version of the X870E Taichi. The Lite retains all of the latter's key features, including the 27-phase power delivery with 110A smart power stages, dual PCIe 5.0 x16 slots operating at x16 or x8/x8, four DDR5 DIMM slots, and four M.2 slots (1x Gen5 + 3x Gen4). The only significant difference is aesthetics: the Taichi Lite features a simpler silver-themed design without the RGB lighting, while the standard Taichi has a more intricate, fanciful gold-accented aesthetic.

In terms of availability, ASRock is not disclosing a release date for the board at the show. And, checking around with other tech journalists, Andreas Schilling from HardwareLUXX has heard that X870E and X870 motherboards aren't expected to be available in time for the Ryzen 9000 series launch. We will investigate this and contact the motherboard vendors to confirm the situation. Though as X870E/X870 boards barely differ from the current crop of X670E/B650E boards to begin with, the Ryzen 9000 series won't be fazed by a lack of slightly newer motherboards.

Motherboards

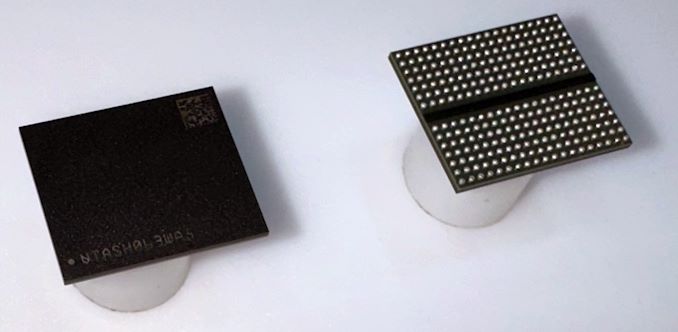

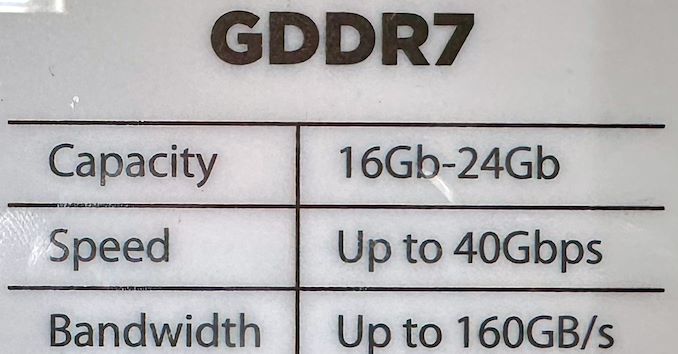

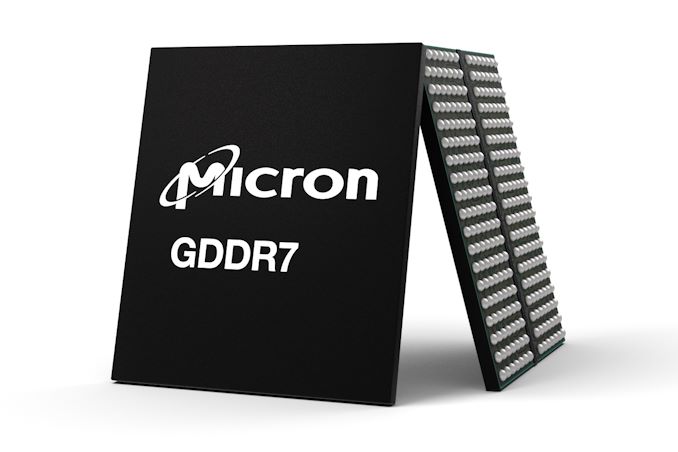

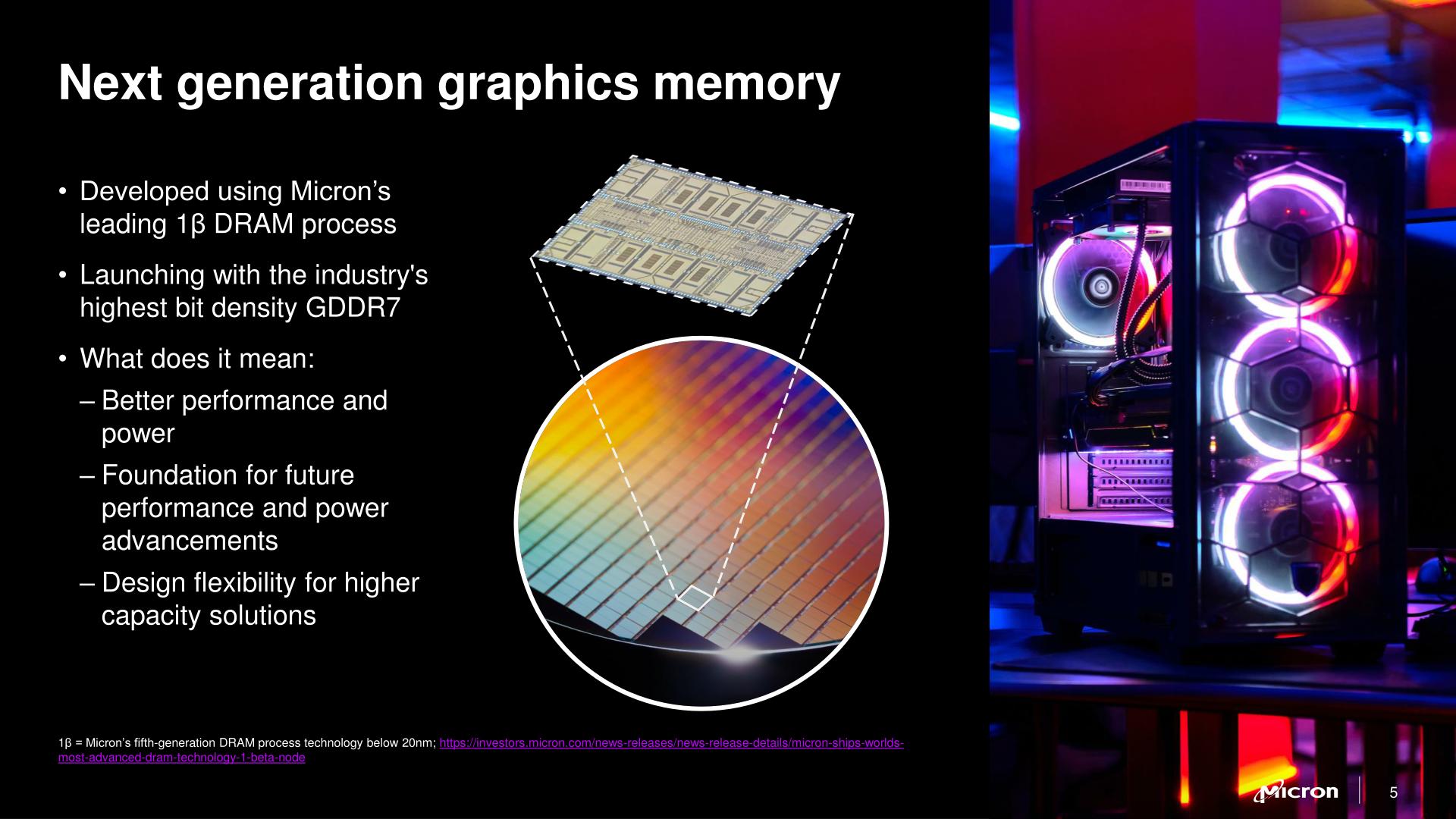

Micron's GDDR7 Chip Smiles for the Camera as Micron Aims to Seize Larger Share of HBM Market

UPDATE 6/12: Micron notified us that it expects its HBM market share to rise to mid-20% in the middle of calendar 2025, not in the middle of fiscal 2025.

For Computex week, Micron was at the show in force in order to talk about its latest products across the memory spectrum. The biggest news for the memory company was that it has kicked off sampling of its next-gen GDDR7 memory, which is expected to start showing up in finished products later this year and was being demoed on the show floor. Meanwhile, the company is also eyeing taking a much larger piece of the other pillar of the high-performance memory market – High Bandwidth Memory – with aims of capturing around 25% of the premium HBM market.

GDDR7 to Hit the Market Later This Year

Micron's first GDDR7 chip is a 16 Gb memory device with a 32 GT/sec (32Gbps/pin) transfer rate, which is significantly faster than contemporary GDDR6/GDDR6X. As outlined with JEDEC's announcement of GDDR7 earlier this year, the latest iteration of the high-performance memory technology is slated to improve on both memory bandwidth and capacity, with bandwidths starting at 32 GT/sec and potentially climbing another 50% higher to 48 GT/sec by the time the technology reaches its apex. And while the first chips are starting off at the same 2GByte (16Gbit) capacity as today's GDDR6(X) chips, the standard itself defines capacities as high as 64Gbit.
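To put the per-pin rates in perspective, they can be converted into per-chip bandwidth and capacity. The sketch below assumes the standard 32-bit-wide chip interface; the helper names are illustrative only.

```python
def chip_bandwidth_gbs(gbps_per_pin: float, data_pins: int = 32) -> float:
    """Peak bandwidth of one memory chip in GB/s.

    data_pins=32 reflects the standard 32-bit-wide GDDR chip interface.
    """
    return gbps_per_pin * data_pins / 8   # bits -> bytes


def capacity_gbytes(capacity_gbit: int) -> float:
    """Convert a chip capacity from gigabits to gigabytes."""
    return capacity_gbit / 8


for rate in (32, 48):
    print(f"{rate} Gbps/pin -> {chip_bandwidth_gbs(rate):.0f} GB/s per chip")

for gbit in (16, 64):
    print(f"{gbit} Gbit -> {capacity_gbytes(gbit):.0f} GB per chip")
```

At launch speeds, that works out to 128 GB/s for a single chip, with the 48 GT/sec ceiling taking the same chip to 192 GB/s.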

Of particular note, GDDR7 brings with it the switch to PAM3 (3-state) signal encoding, moving from the industry's long-held NRZ (2-state) signaling. As Micron was responsible for the bespoke GDDR6X technology, which was the first major DRAM spec to use PAM signaling (in its case, 4-state PAM4), Micron reckons they have a leg-up with GDDR7 development, as they're already familiar with working with PAM.

The GDDR7 transition also brings with it a change in how chips are organized, with the standard 32-bit wide chip now split up into four 8-bit sub-channels. And, like most other contemporary memory standards, GDDR7 is adding on-die ECC support to hold the line on chip reliability (though as always, we should note that on-die ECC isn't meant to be a replacement for full, multi-chip ECC). The standard also implements some other RAS features such as error checking and scrubbing, which, although not germane to gaming, will be a big deal for compute/AI use cases.

The added complexity of GDDR7 means that the pin count is once again increasing as well, with the new standard adding a further 86 pins to accommodate the data transfer and power delivery changes, bringing it to a total of 266 pins. With that said, the actual package size is remaining unchanged from GDDR5/GDDR6, maintaining that familiar 14mm x 12mm package. Memory manufacturers are instead using smaller diameter balls, as well as decreasing the pitch between the individual solder balls – going from GDDR6's 0.75mm x 0.75mm pitch to a slightly shorter 0.75mm x 0.73mm pitch. This allows the same package to fit in another 5 rows of contacts.

As for Micron's own production plans, the company is using its latest 1-beta (1β) fabrication process. While the major memory manufacturers don't readily publish the physical parameters of their processes these days, Micron believes that they have the edge on density with 1β, and consequently will be producing the densest GDDR7 at launch. And, while more nebulous, the company believes that 1β will give them an edge in power efficiency as well.

Micron says that the first devices incorporating GDDR7 will be available this year. And while video card vendors remain a major consumer of GDDR memory, in 2024 the AI accelerator market should not be overlooked. With AI accelerators still bottlenecked by memory capacity and bandwidth, GDDR7 is expected to pair very well with inference accelerators, which need a more cost-effective option than HBM.

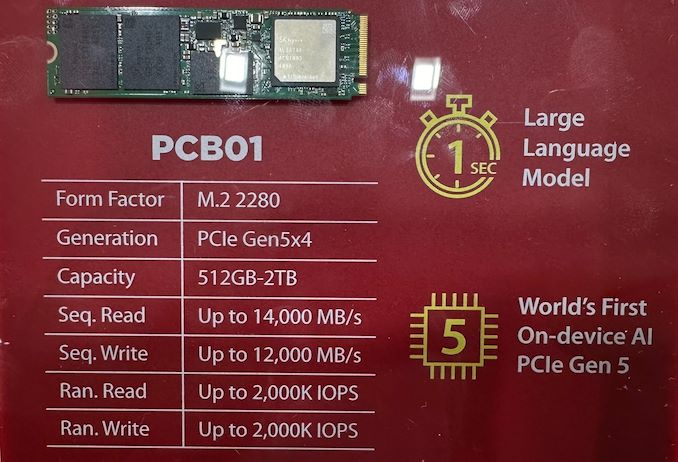

SK hynix Wraps up Dev Work on High-End PCB01 PCIe 5.0 SSD for OEMs, Launching Later This Year

SK hynix early on Friday announced that the company has finished the development of its PCB01 PCIe Gen5 SSD, the company's forthcoming high-end SSD for OEMs. Based on the company's new Alistar platform, the PCB01 is designed to deliver chart-topping performance for client machines. And, as a sign of the times, SK hynix is positioning the PCB01 for AI PCs, looking to synergize with the overall industry interest in anything and everything AI.

The bare, OEM-focused drives have previously been shown off by SK hynix, and make no attempt to hide what's under the hood. The PCB01 relies on SK hynix's Alistar controller, which features a PCIe Gen5 x4 host interface on the front end and eight NAND channels on the back end, placing it solidly in the realm of high-end SSDs. Paired with the Alistar controller is the company's latest 238-layer TLC NAND (H25T1TD48C & H25T2TD88C), which offers a maximum transfer speed of 2400 MT/second. Being that this is a high-end client SSD, there's also a DRAM chip on board, though the company isn't disclosing its capacity.

As with other high-end PCIe 5.0 client SSDs, SK hynix is planning on hitting peak read speeds of up to 14GB/second on the drive, while peak sequential write speeds should top 12GB/second (with pSLC caching, of course) – performance figures well within the realm of possibility for an 8 channel drive. As for random performance, at Computex the company was telling attendees that the drives should be able to sustain 4K random read and write rates of 2 million IOPS, which is very high as well. The SSDs are also said to consume up to 30% less power than 'predecessors,' according to SK hynix, though the company didn't elaborate on that figure. Typically in the storage industry, energy figures are based on iso-performance (rather than peak performance) – essentially measuring energy efficiency per bit rather than total power consumption – and that is likely the case here as well.
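A quick back-of-the-envelope check shows why those sequential figures are plausible for an eight-channel design. The sketch below assumes 128b/130b line encoding on the PCIe 5.0 link and an 8-bit data bus per NAND channel, and it ignores protocol and controller overheads; the function names are illustrative.

```python
def pcie_link_gbs(gt_per_s: float, lanes: int, encoding: float = 128 / 130) -> float:
    """Raw one-direction PCIe link bandwidth in GB/s after line encoding.

    128b/130b encoding is assumed; packet and protocol overheads are ignored.
    """
    return gt_per_s * lanes * encoding / 8


def nand_backend_gbs(channels: int, mt_per_s: int, bus_bytes: int = 1) -> float:
    """Aggregate NAND interface bandwidth in GB/s.

    bus_bytes=1 assumes an 8-bit data bus per channel.
    """
    return channels * mt_per_s * bus_bytes / 1000


host = pcie_link_gbs(32, lanes=4)        # PCIe 5.0 x4
backend = nand_backend_gbs(8, 2400)      # eight channels at 2400 MT/second
print(f"Host link: {host:.2f} GB/s, NAND back end: {backend:.1f} GB/s")
```

Under these assumptions the NAND back end offers more raw bandwidth than the host link, so a 14GB/second peak read rate is bounded by the PCIe interface rather than by the flash channels.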

At least initially, SK hynix plans to release its PCB01 in three capacities – 512 GB, 1 TB, and 2 TB. The company has previously disclosed that their 238L TLC NAND has a capacity of 512Gbit, so these are typical capacity figures for single-sided drives. And while the focus of the company's press release this week was on OEM drives, this is the same controller and NAND that is also going into the company's previously-teased retail Platinum P51 SSD, so this week's reveal offers a bit more detail into what to expect from that drive family as well.
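The relationship between die density and drive capacity is simple to work out. This sketch assumes raw NAND capacity equals the advertised capacity (no spare area for over-provisioning) and treats 1 TB as 1024 GB; both are simplifications for illustration.

```python
DIE_GBIT = 512  # die capacity disclosed for the 238L TLC NAND


def dies_needed(capacity_gb: int, die_gbit: int = DIE_GBIT) -> int:
    """Number of NAND dies needed to reach a given capacity.

    Assumes raw capacity equals user capacity, which real drives do not
    strictly follow because some NAND is reserved as spare area.
    """
    die_gb = die_gbit // 8                 # 512 Gbit -> 64 GB per die
    return -(-capacity_gb // die_gb)       # ceiling division


for capacity_gb in (512, 1024, 2048):
    print(f"{capacity_gb} GB drive: {dies_needed(capacity_gb)} dies")
```

With 64 GB per die, the three capacities need 8, 16, and 32 dies respectively, which can be stacked into a small number of NAND packages.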

Specs aside, Ahn Hyun, the Head of the N-S Committee at SK hynix, said that multiple global CPU providers for on-device AI PCs are seeking collaboration for the compatibility validation process, which is underway, so expect PCB01 drives inside PCs in time for this year's back-to-school and holiday seasons.

"We will work towards enhancing our leadership as the global top AI memory provider also in the NAND solution space by successfully completing the customer validation and mass production of PCB01, which will be in the limelight," Ahn Hyun said.

SSDs

NVIDIA Closes Above $135, Becomes World’s Most Valuable Company

Thanks to the success of the burgeoning market for AI accelerators, NVIDIA has been on a tear this year. And the only place that’s even more apparent than the company’s rapidly growing revenues is in the company’s stock price and market capitalization. After breaking into the top 5 most valuable companies only earlier this year, NVIDIA has reached the apex of Wall Street, closing out today as the world’s most valuable company.

With a closing price of $135.58 on a day that saw NVIDIA’s stock pop up another 3.5%, NVIDIA has topped both Microsoft and Apple in valuation, reaching a market capitalization of $3.335 trillion. This follows a rapid rise in the company’s stock price, which has increased by 47% in the last month alone – particularly on the back of NVIDIA’s most recent estimates-beating earnings report – as well as a recent 10-for-1 stock split. And looking at the company’s performance over a longer time period, NVIDIA’s stock jumped a staggering 218% over the last year, or a mere 3,474% over the last 5 years.

NVIDIA’s ascension continues a trend over the last several years of tech companies all holding the top spots in the market capitalization rankings. Though this is the first time in quite a while that the traditional tech leaders of Apple and Microsoft have been pushed aside.

| Market Capitalization Rankings | ||

| Market Cap | Stock Price | |

| NVIDIA | $3.335T | $135.58 |

| Microsoft | $3.317T | $446.34 |

| Apple | $3.285T | $214.29 |

| Alphabet | $2.170T | $176.45 |

| Amazon | $1.902T | $182.81 |

Driving the rapid growth of NVIDIA and its market capitalization has been demand for AI accelerators from NVIDIA, particularly the company’s server-grade H100, H200, and GH200 accelerators for AI training. As the demand for these products has spiked, NVIDIA has been scaling up accordingly, repeatedly beating market expectations for how many of the accelerators they can ship – and what price they can charge. And despite all that growth, orders for NVIDIA’s high-end accelerators are still backlogged, underscoring how NVIDIA still isn’t meeting the full demands of hyperscalers and other enterprises.

Consequently, NVIDIA’s stock price and market capitalization have been on a tear on the basis of these future expectations. With a price-to-earnings (P/E) ratio of 76.7 – more than twice that of Microsoft or Apple – NVIDIA is priced more like a start-up than a 30-year-old tech company. But then it goes without saying that most 30-year-old tech companies aren’t tripling their revenue in a single year, placing NVIDIA in a rather unique situation at this time.
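Since a P/E ratio is simply price divided by earnings, the quoted ratio can be turned back into an implied annual earnings figure. The sketch below uses the market capitalization and P/E ratio cited in this article; the function name is illustrative, and the result is an approximation rather than a reported financial figure.

```python
def implied_annual_earnings_billion(market_cap_trillion: float, pe_ratio: float) -> float:
    """Annual earnings (in $ billions) implied by a market cap and P/E ratio."""
    return market_cap_trillion * 1000 / pe_ratio


earnings = implied_annual_earnings_billion(3.335, 76.7)
print(f"Implied annual earnings: ${earnings:.1f} billion")
```

In other words, the market is valuing NVIDIA at roughly 77 years' worth of its current annual earnings, a multiple that only makes sense if those earnings keep growing quickly.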

Like the stock market itself, market capitalizations are highly volatile. And historically speaking, it’s far from guaranteed that NVIDIA will be able to hold the top spot for long, never mind day-to-day fluctuations. NVIDIA, Apple, and Microsoft’s valuations are all within $50 billion (1.5%) of each other, so for the moment at least, it’s still a tight race between all three companies. But no matter what happens from here, NVIDIA gets the exceptionally rare claim of having been the most valuable company in the world at some point.
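How tight the race is can be checked directly against the market capitalization table above. The short sketch below uses only the three figures from that table; the variable and function names are illustrative.

```python
# Market capitalizations in trillions of dollars, taken from the table above.
market_caps_t = {"NVIDIA": 3.335, "Microsoft": 3.317, "Apple": 3.285}


def spread(caps: dict[str, float]) -> tuple[float, float]:
    """Return (gap in $ billions, gap as a percentage of the largest cap)."""
    top = max(caps.values())
    bottom = min(caps.values())
    gap_billion = (top - bottom) * 1000
    return gap_billion, gap_billion / (top * 1000) * 100


gap, pct = spread(market_caps_t)
print(f"Gap between first and third place: ${gap:.0f} billion ({pct:.1f}%)")
```

A swing of a few percent in any one of the three stocks would be enough to reshuffle the rankings.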

(Carousel image courtesy MSN Money)

GPUs

XPG Demos "Nia" Handheld Gaming PC With Foveated Rendering, Swappable DRAM With the rise of the handheld gaming PC market, we've seen PC vendors and their partners toy with a number of tricks and tweaks to improve improve framerates in games, with some of their latest efforts on display at this year's Computex trade show. Perhaps the most interesting find thus far comes from ADATA sub-brand XPG, who is demoing their prototype "Nia" handheld PC, which uses eye tracking and dynamic foveated rendering to further improve their rendering performance.

For those unfamiliar, dynamic foveated rendering is a graphics technique that is sometimes used to boost performance in virtual reality (VR) and augmented reality (AR) applications by taking advantage of how human vision works. Typically, humans can only perceive detailed imagery in the relatively small central area of our vision called the fovea, while our peripheral vision is much less detailed. Dynamic foveated rendering, in turn, exploits this by using real-time eye tracking to determine where the user is looking, and then rendering just that area in high/full resolution, while rendering the peripheral areas in lower resolution. The net result is that only a fraction of the screen is rendered at full detail, which cuts down on the total amount of rendering work required and boosting framerates on performance-limited devices.

As stated before, this technology is sometimes used in high-end AR/VR headsets, where high resolution displays are placed mere inches from one's face. This ends up being an ideal use case for the technique, since at those distances, only a small fraction of the screen is within the fovea.

Using dynamic foveated rendering for a handheld, on the other hand, is a more novel application. All of the same visual principles apply, but the resolutions at play are lower, and the screen is farther from the users' eyes. This makes a handheld device a less ideal use case, at least on paper, as a larger portion of the screen is going to be in the fovea, and thus will need to be rendered at full resolution. None the less, it will be interesting to see how XPG's efforts pan out, and if dynamic foveated rendering is beneficial enough for handheld PCs. As we sometimes see with trade show demos, not everything makes it out of the prototype stage.

According to a press release put out by ADATA ahead of the trade show, the eye tracking technology is being provided by AMD collaborator Eyeware. Notably, their software-based approach runs on top of standard webcams, rather than requiring IR cameras. So the camera hardware itself should be pretty straight-forward.

Foveated rendering aside, XPG is making sure that the Nia won't be a one-trick pony. The handheld's other major claim to fame is its hardware swappability. The prototype handheld not only features a removable M.2-2230 SSD, but the company is also taking advantage of the recently-introduced LPCAMM2 memory module standard to introduce removable DRAM. Via a hatch in the back of the handheld, device owners would be able to swap out LPCAMM2 LPDDR5X modules for higher capacity versions. This would give the handheld an additional degree of future-proofness over current handhelds, which use non-replaceable soldered-down memory.

Rounding out the package, the current prototype is based on an AMD's Zen 4 Phoenix APU, which is used across both of the company's current mobile lines (Ryzen Mobile 7000/8000 and Ryzen Z1). Meanwhile, the unit's display is adjustable, allowing it to be angled away from the body of the handheld.

Assuming all goes well with the prototype, XPG aims to release a finished product in 2025.

ADATA

NVIDIA Closes Above $135, Becomes World’s Most Valuable Company Thanks to the success of the burgeoning market for AI accelerators, NVIDIA has been on a tear this year. And the only place that’s even more apparent than the company’s rapidly growing revenues is in the company’s stock price and market capitalization. After breaking into the top 5 most valuable companies only earlier this year, NVIDIA has reached the apex of Wall Street, closing out today as the world’s most valuable company.

With a closing price of $135.58 on a day that saw NVIDIA’s stock pop up another 3.5%, NVIDIA has topped both Microsoft and Apple in valuation, reaching a market capitalization of $3.335 trillion. This follows a rapid rise in the company’s stock price, which has increased by 47% in the last month alone – particularly on the back of NVIDIA’s most recent estimates-beating earnings report – as well as a recent 10-for-1 stock split. And looking at the company’s performance over a longer time period, NVIDIA’s stock jumped a staggering 218% over the last year, or a mere 3,474% over the last 5 years.

NVIDIA’s ascension continues a trend over the last several years of tech companies all holding the top spots in the market capitalization rankings. Though this is the first time in quite a while that the traditional tech leaders of Apple and Microsoft have been pushed aside.

Market Capitalization Rankings

| Company | Market Cap | Stock Price |
|---|---|---|
| NVIDIA | $3.335T | $135.58 |
| Microsoft | $3.317T | $446.34 |
| Apple | $3.285T | $214.29 |
| Alphabet | $2.170T | $176.45 |
| Amazon | $1.902T | $182.81 |

Driving the rapid growth of NVIDIA and its market capitalization has been demand for AI accelerators from NVIDIA, particularly the company’s server-grade H100, H200, and GH200 accelerators for AI training. As the demand for these products has spiked, NVIDIA has been scaling up accordingly, repeatedly beating market expectations for how many of the accelerators they can ship – and what price they can charge. And despite all that growth, orders for NVIDIA’s high-end accelerators are still backlogged, underscoring how NVIDIA still isn’t meeting the full demands of hyperscalers and other enterprises.

Consequently, NVIDIA’s stock price and market capitalization have been on a tear on the basis of these future expectations. With a price-to-earnings (P/E) ratio of 76.7 – more than twice that of Microsoft or Apple – NVIDIA is priced more like a start-up than a 30-year-old tech company. But then it goes without saying that most 30-year-old tech companies aren’t tripling their revenue in a single year, placing NVIDIA in a rather unique situation at this time.

Like the stock market itself, market capitalizations are highly volatile. And historically speaking, it’s far from guaranteed that NVIDIA will be able to hold the top spot for long, never mind day-to-day fluctuations. NVIDIA, Apple, and Microsoft’s valuations are all within $50 billion (about 1.5%) of each other, so for the moment at least, it’s still a tight race between all three companies. But no matter what happens from here, NVIDIA gets the exceptionally rare claim of having been the most valuable company in the world at some point.
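For readers who want to check how tight that race is, the gap can be recomputed from the table's closing figures. The snippet below is only a quick sketch: the `spread` helper and its variable names are made up for illustration, and the values are the rounded market caps quoted in this article rather than live data.

```python
# Market capitalizations in trillions of USD, as quoted in the article's table.
market_caps = {"NVIDIA": 3.335, "Microsoft": 3.317, "Apple": 3.285}


def spread(caps):
    """Return the gap between the largest and smallest value.

    The gap is given both in billions of USD and as a percentage
    of the largest value. (Hypothetical helper, for illustration only.)
    """
    top = max(caps.values())
    bottom = min(caps.values())
    gap_billion = (top - bottom) * 1000  # trillions -> billions
    gap_percent = (top - bottom) / top * 100
    return gap_billion, gap_percent


gap_billion, gap_percent = spread(market_caps)
print(f"Gap: ${gap_billion:.0f}B ({gap_percent:.1f}% of the leader)")
```

With the figures above, the difference between first and third place works out to roughly $50 billion, or about 1.5% of NVIDIA's valuation.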

(Carousel image courtesy MSN Money)

GPUs

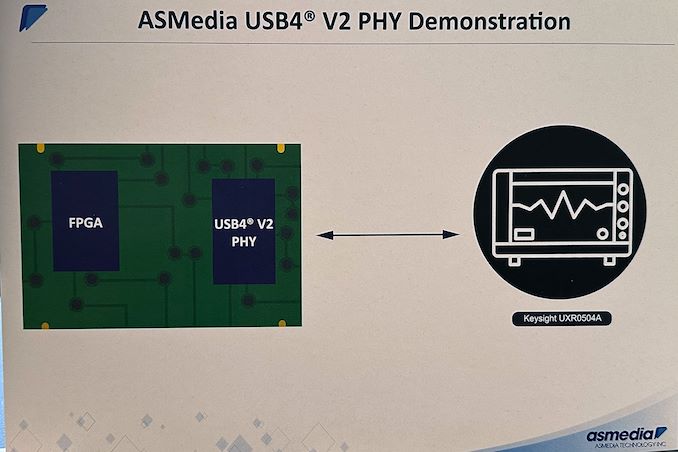

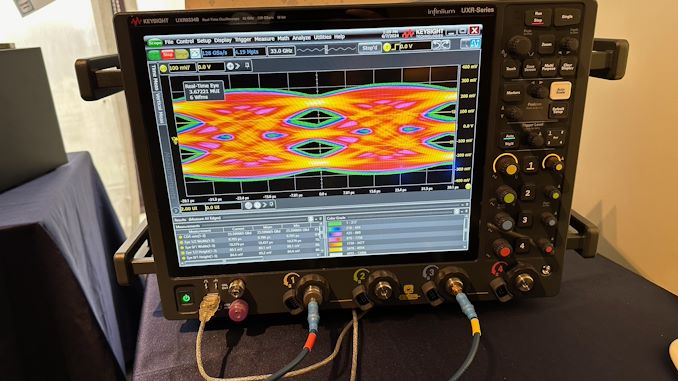

ASMedia Preps USB4 v2 Controller and PHY The USB Implementers Forum (USB-IF) introduced USB4 version 2.0 in fall 2022, and it expects systems and devices with the tech to emerge later this year and into next year. These upcoming products will largely rely on Intel's Barlow Ridge controller, a full-featured Thunderbolt 5 controller that goes above and beyond the baseline USB4 v2 spec. And though extremely capable, Intel's Thunderbolt controllers are also quite expensive, and Barlow Ridge isn't expected to be any different. Fortunately, for system and device vendors that just need a basic USB4 v2 solution, ASMedia is also working on its own USB4 v2 controller.

At Computex 2024, ASMedia demonstrated a prototype of its upcoming USB4 v2 physical interface (PHY), which will support USB4 v2's new Gen 4 data rates (80 Gbps in each direction, or 160 Gbps in aggregate) and the associated PAM-3 signal encoding. The prototype was implemented using an FPGA, as the company has yet to tape out the completed controller.

Ultimately, the purpose of showing off an FPGA-based PHY at Computex was to allow ASMedia to demonstrate their current PHY design. With the shift to PAM-3 encoding for USB4 v2, ASMedia (and the rest of the USB ecosystem) must develop significantly more complex controllers – and there's no part of that more critical than a solid and reliable PHY design.

As part of their demonstration, ASMedia had a classic eye diagram display. The eye diagram demoed has a clear opening in the center, which is indicative of good signal integrity, as the larger the eye opening, the less distortion and noise in the signal. The horizontal width of the eye opening represents the time window in which the signal can be sampled correctly, so the relatively narrow horizontal spread of the signal transitions around the eye suggests that there is minimal jitter, meaning the transitions are consistent and predictable. Finally, the vertical height of the eye opening indicates the signal amplitude, and the rather tall eye opening suggests a high signal-to-noise ratio (SNR), meaning that the signal is strong compared to any noise present.
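To make the two eye measurements a little more concrete, here is a rough Python sketch of how eye height and eye width could be estimated from captured samples. It is a simplification under stated assumptions, not how ASMedia or any compliance tool measures them: the function names are hypothetical, the sample values are invented, and real test equipment works with statistical eye masks rather than raw minimums and maximums. Since PAM-3 uses three signal levels, the sketch reports two vertically stacked eye openings.

```python
def eye_heights(samples_by_level):
    """Vertical opening between each pair of adjacent signal levels.

    samples_by_level: lists of voltages sampled at the center of the
    unit interval, ordered from the lowest nominal level to the highest.
    """
    heights = []
    for lower, upper in zip(samples_by_level, samples_by_level[1:]):
        # The eye closes from both sides: the lowest sample of the upper
        # level and the highest sample of the lower level bound the opening.
        heights.append(min(upper) - max(lower))
    return heights


def eye_width(crossing_offsets, unit_interval):
    """Horizontal opening: unit interval minus peak-to-peak jitter.

    crossing_offsets: deviation of each observed transition from its
    ideal time, in the same unit as unit_interval.
    """
    jitter_pp = max(crossing_offsets) - min(crossing_offsets)
    return unit_interval - jitter_pp


# Invented, normalized sample data for a three-level (PAM-3) signal.
pam3_samples = [
    [-1.05, -0.95, -1.00],  # lowest level
    [-0.08, 0.06, 0.02],    # middle level
    [0.97, 1.04, 0.93],     # highest level
]
heights = eye_heights(pam3_samples)
width = eye_width([-0.04, 0.03, 0.01, -0.02], unit_interval=1.0)

print("Eye heights:", [f"{h:.2f}" for h in heights])
print(f"Eye width: {width:.2f} UI")
```

More noise on any level shrinks the corresponding height, and more spread in the transition times shrinks the width, which is why a large, clean opening is read as good signal integrity.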

ASMedia itself is one of the major suppliers for discrete USB controllers, so the availability of ASMedia's USB4 v2 chip is crucial for adoption of the standard in general. While Intel will spearhead the industry with their Barlow Ridge Thunderbolt 5/USB4 v2 controller, ASMedia's controller is poised to end up in a far larger range of devices. So the importance of the company's USB4 v2 PHY demo is hard to overstate.

Demos aside, ASMedia is hoping to tape the chip out soon. If all goes well, the company expects their first USB4 v2 controllers to hit the market some time in the second half of 2025.

Peripherals

NVIDIA's AD102 GPU Pops Up in MSI GeForce RTX 4070 Ti Super Cards As GPU families enter the later part of their lifecycles, we often see chip manufacturers start to offload stockpiles of salvaged chips that, for one reason or another, didn't make the grade for the tier of cards they normally are used in. These recovered chips are fairly unremarkable overall, but they are unsold silicon that still works and has economic value, leading to them being used in lower-tier cards so that they can be sold. And, judging by the appearance of a new video card design from MSI, it looks like NVIDIA's Ada Lovelace generation of chips has reached that stage, as the Taiwanese video card maker has put out a new GeForce RTX 4070 Ti Super card based on a salvaged AD102 GPU.

Typically based on NVIDIA's AD103 GPU, NVIDIA's GeForce RTX 4070 Ti Super series sits a step below the company's RTX 4080 and flagship RTX 4090 cards, the latter of which is based on the bigger and badder AD102 chip. But with some number of AD102 chips inevitably failing to live up to RTX 4090 specifications, rather than being thrown out, these chips can instead be used to make RTX 4070 Ti Super cards. Which is exactly what MSI has done with their new GeForce RTX 4070 Ti Super Ventus 3X Black OC graphics card.

The card itself is relatively unremarkable – using a binned AD102 chip doesn't come with any advantages, and it should perform just like regular AD103 cards – and for that reason, video card vendors rarely publicly note when they're doing a run of cards with a binned-down version of a bigger chip. However, these larger chips have a tell-tale PCB footprint that usually makes it obvious what's going on. Which, as first noticed by @wxnod, is exactly what's going on with MSI's card.

Ada Lovelace Lineup: MSI GeForce RTX 4070 TiS (AD103), RTX 4070 TiS (AD102), & RTX 4090 (AD102)

The tell, in this case, is the rear board shot provided by MSI. The larger AD102 GPU uses a correspondingly larger mounting bracket, and is paired with a slightly more complex array of filtering capacitors on the back side of the PCB. Ultimately, since these are visible in MSI's photos of their GeForce RTX 4070 Ti Super Ventus 3X Black OC, it's easy to compare it to other video cards and see that it has exactly the same capacitor layout as MSI's GeForce RTX 4090, thus confirming the use of an AD102 GPU.

Chip curiosities aside, all of NVIDIA's GeForce RTX 4070 Ti Super graphics cards – no matter whether they are based on the AD102 or AD103 GPU – come with a GPU with 8,448 active CUDA cores and 16 GB of GDDR6X memory, so it doesn't (typically) matter which chip they carry. Otherwise, compared to a fully-enabled AD102 chip, the RTX 4070 Ti Super specifications are relatively modest, with fewer than half as many CUDA cores, underscoring how the AD102 chip being used in MSI's card is a pretty heavy salvage bin.
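As a quick back-of-the-envelope check on how deep that salvage bin is, the enabled fraction of the die can be worked out in a couple of lines. Note the assumption: the 18,432-core figure for a fully-enabled AD102 is NVIDIA's published specification for the full die and is not stated in this article, and the variable names are purely illustrative.

```python
# Active CUDA cores on any GeForce RTX 4070 Ti Super (from the article).
RTX_4070_TI_SUPER_CORES = 8448

# Assumption: CUDA core count of a fully-enabled AD102 die, taken from
# NVIDIA's published full-die specification, not from this article.
FULL_AD102_CORES = 18432

enabled_fraction = RTX_4070_TI_SUPER_CORES / FULL_AD102_CORES
print(f"Enabled share of a full AD102: {enabled_fraction:.1%}")
```

Under that assumption, a little under 46% of the die's CUDA cores are active, consistent with the "fewer than half" characterization above.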

As for the rest of the card, MSI's GeForce RTX 4070 Ti Super Ventus 3X Black OC is a relatively hefty card overall, with a cooling system to match. Being overclocked, the Ventus also has a slightly higher TDP than normal GeForce RTX 4070 Ti Super cards, weighing in at 295 Watts, or 10 Watts above baseline cards.

Meanwhile, MSI is apparently not the only video card manufacturer using salvaged AD102 chips for GeForce RTX 4070 Ti Super, either. @wxnod has also posted a screenshot obtained on an Inno3D GeForce RTX 4070 Ti Super based on an AD102 GPU.

GPUs



