Hot Posts

MediaTek to Add NVIDIA G-Sync Support to Monitor Scalers, Make G-Sync Displays More Accessible

NVIDIA on Tuesday said that future monitor scalers from MediaTek will support its G-Sync technologies. NVIDIA is partnering with MediaTek to integrate its full range of G-Sync technologies into future monitors without requiring a standalone G-Sync module, which makes advanced gaming features more accessible across a broader range of displays.

Traditionally, G-Sync technology relied on a dedicated G-Sync module – based on an Altera FPGA – to handle syncing display refresh rates with the GPU in order to reduce screen tearing, stutter, and input lag. As a more basic solution, in 2019 NVIDIA introduced G-Sync Compatible certification and branding, which leveraged the industry-standard VESA Adaptive-Sync technology to handle variable refresh rates. In lieu of using a dedicated module, leveraging Adaptive-Sync allowed for cheaper monitors, with NVIDIA's program serving as a stamp of approval that the monitor worked with NVIDIA GPUs and met NVIDIA's performance requirements. Even so, G-Sync Compatible monitors lack some features that, to date, have required the dedicated G-Sync module.

Through this new partnership, MediaTek will bring support for all of NVIDIA's G-Sync technologies, including the latest G-Sync Pulsar, directly into its scalers. G-Sync Pulsar enhances motion clarity and reduces ghosting, providing a smoother gaming experience. In addition to variable refresh rates and Pulsar, MediaTek-based G-Sync displays will support such features as variable overdrive, 12-bit color, Ultra Low Motion Blur, low latency HDR, and Reflex Analyzer. This integration will allow more monitors to support a full range of G-Sync features without having to incorporate an expensive FPGA.

The first monitors to feature full G-Sync support without needing an NVIDIA module include the AOC Agon Pro AG276QSG2, Acer Predator XB273U F5, and ASUS ROG Swift 360Hz PG27AQNR. These monitors offer 360Hz refresh rates, 1440p resolution, and HDR support.

What remains to be seen is which specific MediaTek scalers will support NVIDIA's G-Sync technology – or if the company is going to implement support in all of its scalers going forward. It also remains to be seen whether monitors with NVIDIA's dedicated G-Sync modules retain any advantages over displays with MediaTek's scalers.

Monitors

Popular Post

Intel Sells Its Arm Shares, Reduces Stakes in Other Companies

Intel has divested its entire stake in Arm Holdings during the second quarter, raising approximately $147 million. Alongside this, Intel sold its stake in cybersecurity firm ZeroFox and reduced its holdings in Astera Labs, all as part of a broader effort to manage costs and recover cash amid significant financial challenges.

The sale of Intel's 1.18 million shares in Arm Holdings, as reported in a recent SEC filing, comes at a time when the company is struggling with substantial financial losses. Despite the $147 million generated from the sale, Intel reported a $120 million net loss on its equity investments for the quarter, which is a part of a larger $1.6 billion loss that Intel faced during this period.

In addition to selling its stake in Arm, Intel also exited its investment in ZeroFox and reduced its involvement with Astera Labs, a company known for developing connectivity platforms for enterprise hardware. These moves are in line with Intel's strategy to reduce costs and stabilize its financial position as it faces ongoing market challenges.

Despite the divestment, Intel's past investment in Arm was likely driven by strategic considerations. Arm Holdings is a significant force in the semiconductor industry, with its designs powering most mobile devices, a market that Intel would understandably like to address. Intel and Arm are also collaborating on datacenter platforms tailored for Intel's 18A process technology. Additionally, Arm might view Intel as a potential licensee for its technologies and a valuable partner for other companies that license Arm's designs.

Intel's investment in Astera Labs was also a strategic one. The company probably wanted to secure a steady supply of smart retimers, smart cable modules, and CXL memory controllers, which are used in volume in datacenters, and Intel is certainly interested in selling as many datacenter CPUs as possible.

Intel's financial struggles were highlighted earlier this month when the company released a disappointing earnings report, which led to a 33% drop in its stock value, erasing billions of dollars of capitalization. To counter these difficulties, Intel announced plans to cut 15,000 jobs and implement other expense reductions. The company has also suspended its dividend, signaling the depth of its efforts to conserve cash and focus on recovery. When it comes to divestment of Arm stock, the need for immediate financial stabilization has presumably taken precedence, leading to the decision.

CPUs

NVIDIA Closes Above $135, Becomes World’s Most Valuable Company

Thanks to the success of the burgeoning market for AI accelerators, NVIDIA has been on a tear this year. And the only place that’s even more apparent than the company’s rapidly growing revenues is in the company’s stock price and market capitalization. After breaking into the top 5 most valuable companies only earlier this year, NVIDIA has reached the apex of Wall Street, closing out today as the world’s most valuable company.

With a closing price of $135.58 on a day that saw NVIDIA’s stock pop up another 3.5%, NVIDIA has topped both Microsoft and Apple in valuation, reaching a market capitalization of $3.335 trillion. This follows a rapid rise in the company’s stock price, which has increased by 47% in the last month alone – particularly on the back of NVIDIA’s most recent estimates-beating earnings report – as well as a recent 10-for-1 stock split. And looking at the company’s performance over a longer time period, NVIDIA’s stock jumped a staggering 218% over the last year, or a mere 3,474% over the last 5 years.

NVIDIA’s ascension continues a trend over the last several years of tech companies holding all of the top spots in the market capitalization rankings, though this is the first time in quite a while that the traditional tech leaders of Apple and Microsoft have been pushed aside.

Market Capitalization Rankings

| Company | Market Cap | Stock Price |
|---|---|---|
| NVIDIA | $3.335T | $135.58 |
| Microsoft | $3.317T | $446.34 |
| Apple | $3.285T | $214.29 |
| Alphabet | $2.170T | $176.45 |
| Amazon | $1.902T | $182.81 |

Driving the rapid growth of NVIDIA and its market capitalization has been demand for AI accelerators from NVIDIA, particularly the company’s server-grade H100, H200, and GH200 accelerators for AI training. As the demand for these products has spiked, NVIDIA has been scaling up accordingly, repeatedly beating market expectations for how many of the accelerators they can ship – and what price they can charge. And despite all that growth, orders for NVIDIA’s high-end accelerators are still backlogged, underscoring how NVIDIA still isn’t meeting the full demands of hyperscalers and other enterprises.

Consequently, NVIDIA’s stock price and market capitalization have been on a tear on the basis of these future expectations. With a price-to-earnings (P/E) ratio of 76.7 – more than twice that of Microsoft or Apple – NVIDIA is priced more like a start-up than a 30-year-old tech company. But then it goes without saying that most 30-year-old tech companies aren’t tripling their revenue in a single year, placing NVIDIA in a rather unique situation at this time.

Like the stock market itself, market capitalizations are highly volatile. And historically speaking, it’s far from guaranteed that NVIDIA will be able to hold the top spot for long, never mind day-to-day fluctuations. The valuations of NVIDIA, Apple, and Microsoft are all within $50 billion (about 1.5%) of each other, so for the moment at least, it’s still a tight race between all three companies. But no matter what happens from here, NVIDIA gets the exceptionally rare claim of having been the most valuable company in the world at some point.
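The size of the gap between the top three can be checked directly from the market capitalization figures quoted in the rankings. The following is a minimal sketch; the `spread` helper and the variable names are illustrative, and only the three dollar figures come from the article.

```python
# Market caps in trillions of US dollars, as quoted in the rankings.
market_caps = {"NVIDIA": 3.335, "Microsoft": 3.317, "Apple": 3.285}

def spread(caps):
    """Return (absolute gap, gap as a fraction of the largest cap)."""
    high = max(caps.values())
    low = min(caps.values())
    gap = high - low
    return gap, gap / high

gap_trillions, gap_fraction = spread(market_caps)

# Convert trillions to billions for readability.
print(f"Gap: ${gap_trillions * 1000:.0f}B ({gap_fraction:.1%} of the largest cap)")
```

The gap here is measured against the largest of the three valuations; measuring against the smallest would shift the percentage only slightly.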

(Carousel image courtesy MSN Money)

GPUs

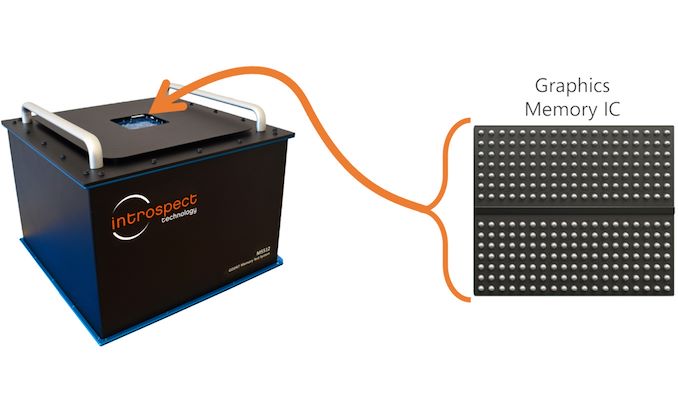

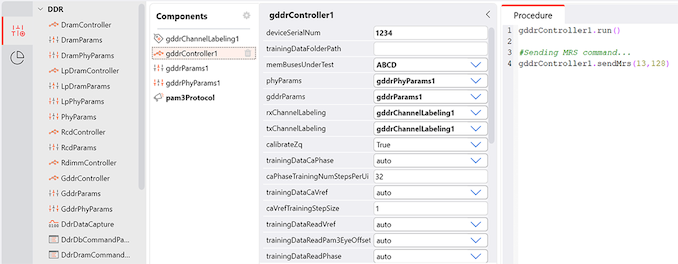

Introspect Intros GDDR7 Test System For Fast GDDR7 GPU Design Bring Up

Introspect this week introduced its M5512 GDDR7 memory test system, which is designed for testing GDDR7 memory controllers, physical interface, and GDDR7 SGRAM chips. The tool will enable memory and processor manufacturers to verify that their products perform as specified by the standard.

One of the crucial phases of a processor design bring-up is testing its standard interfaces, such as PCIe, DisplayPort, or GDDR, to ensure that they behave as specified both logically and electrically and achieve their designated performance. Introspect's M5512 GDDR7 memory test system is designed to do just that: test new GDDR7 memory devices, troubleshoot protocol issues, assess signal integrity, and conduct comprehensive memory read/write stress tests.

The product should be quite useful for designers of GPUs/SoCs, graphics cards, PCs, network equipment, and memory chips, and should speed up development of actual products that rely on GDDR7 memory. For now, GPU and SoC designers as well as memory makers use highly custom setups consisting of many tools to characterize signal integrity and conduct detailed memory read/write functional stress testing, both of which are important at this phase of development. Using a single tool should greatly speed up these processes and give specialists a more comprehensive picture.

The M5512 GDDR7 Memory Test System is a desktop testing and measurement device that is equipped with 72 pins capable of functioning at up to 40 Gbps in PAM3 mode, as well as offering a virtual GDDR7 memory controller. The device features bidirectional circuitry for executing read and write operations, and every pin is equipped with an extensive range of analog characterization features, such as skew injection with femtosecond resolution, voltage control with millivolt resolution, programmable jitter injection, and various eye margining features critical for AC characterization and conformance testing. Furthermore, the system integrates device power supplies with precise power sequencing and ramping controls, providing a comprehensive solution for both AC characterization and memory functional stress testing on any GDDR7 device.

Introspect's M5512 has been designed in close collaboration with JEDEC members working on the GDDR7 specification, so it promises to meet all of their requirements for compliance testing. Notably, however, the device does not eliminate the need for interoperability tests and still requires companies to develop their own test algorithms, but it's still a significant tool for bootstrapping device development and getting it to the point where chips can begin interop testing.

“In its quest to support the industry on GDDR7 deployment, Introspect Technology has worked tirelessly in the last few years with JEDEC members to develop the M5512 GDDR7 Memory Test System,” said Dr. Mohamed Hafed, CEO at Introspect Technology.

GPUs

Micron Sells Out Entire HBM3E Supply for 2024, Most of 2025

Being the first company to ship HBM3E memory has its perks for Micron, as the company has revealed that it has managed to sell out the entire supply of its advanced high-bandwidth memory for 2024, while most of its 2025 production has been allocated as well. Micron's HBM3E memory (or, as Micron alternatively calls it, HBM3 Gen2) was one of the first to be qualified for NVIDIA's updated H200/GH200 accelerators, so it looks like the DRAM maker will be a key supplier to the green company.

"Our HBM is sold out for calendar 2024, and the overwhelming majority of our 2025 supply has already been allocated," said Sanjay Mehrotra, chief executive of Micron, in prepared remarks for the company's earnings call this week. "We continue to expect HBM bit share equivalent to our overall DRAM bit share sometime in calendar 2025."

Micron's first HBM3E product is an 8-Hi 24 GB stack with a 1024-bit interface, 9.2 GT/s data transfer rate, and a total bandwidth of 1.2 TB/s. NVIDIA's H200 accelerator for artificial intelligence and high-performance computing will use six of these cubes, providing a total of 141 GB of accessible high-bandwidth memory.
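The quoted per-stack bandwidth follows from the interface width and the transfer rate. As a rough sketch (the function and variable names are illustrative, not from Micron or NVIDIA), the arithmetic looks like this:

```python
def stack_bandwidth_gb_per_s(bus_width_bits, transfer_rate_gt_per_s):
    """Peak bandwidth of one stack in GB/s.

    Each transfer moves `bus_width_bits` bits, so multiply by the
    transfer rate (in GT/s) and divide by 8 to convert bits to bytes.
    """
    return bus_width_bits * transfer_rate_gt_per_s / 8

# Figures quoted for Micron's 8-Hi HBM3E stack.
per_stack_gb_per_s = stack_bandwidth_gb_per_s(1024, 9.2)
print(f"Per stack: {per_stack_gb_per_s:.1f} GB/s "
      f"(~{per_stack_gb_per_s / 1000:.1f} TB/s)")

# Raw capacity of six 24 GB stacks, before any capacity is held back.
raw_capacity_gb = 6 * 24
print(f"Raw capacity of six stacks: {raw_capacity_gb} GB")
```

Note that six 24 GB stacks add up to 144 GB of raw capacity, slightly more than the 141 GB of accessible memory quoted for the H200.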

"We are on track to generate several hundred million dollars of revenue from HBM in fiscal 2024 and expect HBM revenues to be accretive to our DRAM and overall gross margins starting in the fiscal third quarter," said Mehrotra.

The company has also begun sampling its 12-Hi 36 GB stacks, which offer 50% more capacity. These known good stacked dies (KGSDs) will ramp in 2025 and will be used for the next generations of AI products. Meanwhile, it does not look like NVIDIA's B100 and B200 are going to use 36 GB HBM3E stacks, at least initially.

Demand for artificial intelligence servers set records last year, and it looks like it is going to remain high this year as well. Some analysts believe that NVIDIA's A100 and H100 processors (as well as their various derivatives) commanded as much as 80% of the entire AI processor market in 2023. And while this year NVIDIA will face tougher competition from AMD, AWS, D-Matrix, Intel, Tenstorrent, and other companies on the inference front, it looks like NVIDIA's H200 will still be the processor of choice for AI training, especially for big players like Meta and Microsoft, who already run fleets consisting of hundreds of thousands of NVIDIA accelerators. With that in mind, being a primary supplier of HBM3E for NVIDIA's H200 is a big deal for Micron as it enables it to finally capture a sizeable chunk of the HBM market, which is currently dominated by SK Hynix and Samsung, and where Micron controlled only about 10% as of last year.

Meanwhile, since every DRAM device inside an HBM stack has a wide interface, it is physically bigger than regular DDR4 or DDR5 ICs. As a result, the ramp of HBM3E memory will affect bit supply of commodity DRAMs from Micron, the company said.

"The ramp of HBM production will constrain supply growth in non-HBM products," Mehrotra said. "Industrywide, HBM3E consumes approximately three times the wafer supply as DDR5 to produce a given number of bits in the same technology node."
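To see why that ratio constrains commodity DRAM supply, consider a simple model in which a fixed pool of wafers is split between DDR5 and HBM3E. This is only a sketch: the wafer counts are hypothetical round numbers chosen for illustration, bits are expressed in units of "one DDR5 wafer's worth", and only the roughly 3x ratio comes from Mehrotra's remarks.

```python
# From the quote: HBM3E needs roughly 3x the wafers of DDR5 per bit.
HBM3E_WAFERS_PER_DDR5_WAFER_OF_BITS = 3.0

def bit_output(total_wafers, hbm_wafers):
    """Return (ddr5_bits, hbm_bits), in units of one DDR5 wafer's bits.

    `total_wafers` and `hbm_wafers` are hypothetical inputs; every wafer
    not used for HBM3E is assumed to go to DDR5.
    """
    ddr5_wafers = total_wafers - hbm_wafers
    hbm_bits = hbm_wafers / HBM3E_WAFERS_PER_DDR5_WAFER_OF_BITS
    return ddr5_wafers, hbm_bits

# Hypothetical example: 30 of 100 wafers are moved to HBM3E.
ddr5_bits, hbm_bits = bit_output(total_wafers=100, hbm_wafers=30)
total_bits = ddr5_bits + hbm_bits
print(f"DDR5 bits: {ddr5_bits}, HBM3E bits: {hbm_bits}, total: {total_bits}")
```

In this toy split, DDR5 output falls by the full amount of the diverted wafers while HBM3E contributes only a third as many bits, so total bit output shrinks even though the wafer count is unchanged.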

Memory

Noctua Launches 145mm Tall Chromax.black NH-D12L CPU Cooler

Today, Noctua announced the launch of its NH-D12L chromax.black CPU cooler, an all-black version of the existing NH-D12L. The cooler sports not only a coat of matte black paint, but also a relatively short height of 145mm, which Noctua says makes the NH-D12L suitable for slimmer cases and 4U server racks.

Having launched in 2022, the NH-D12L is essentially a shorter version of the NH-U12A, which stands at 158mm tall. While plenty of cases have the room for a cooler that tall, not all do (especially small form factor cases). The NH-D12L exists to offer performance similar to the NH-U12A's in cases where 145mm would fit but 158mm wouldn’t. However, the NH-D12L has just a single 120mm NF-A12x25 fan, whereas the NH-U12A has two. Additionally, the NH-D12L has five heatpipes to the NH-U12A’s seven. These two factors mean the NH-D12L can’t quite catch up to the NH-U12A when it comes to cooling capacity.

The chromax.black model is practically identical to the original, but features Noctua’s popular black motif. It should perform the same, and its SecuFirm 2 mounting hardware supports the same sockets: AMD’s AM4 and AM5, and Intel’s LGA 1700 and LGA 1851 for upcoming Arrow Lake CPUs. Despite its compact design, the NH-D12L also has “100% RAM compatibility” for sticks with tall heatspreaders, which sometimes pose clearance issues with air coolers.

The NH-D12L chromax.black also comes with the usual Noctua accessories: a screwdriver, NH-T1 thermal paste, and a four-pin low-noise adapter for the NF-A12x25 fan. Additionally, the 120mm fan is mounted to the cooler via a bracket, meaning no screws are necessary and it can be removed or installed without tools.

At $99/€109, the NH-D12L is positioned fairly high in the market, next to larger high-end air coolers such as Corsair’s A115, as well as 240mm to 360mm AIO liquid coolers. However, the NH-D12L holds a substantial advantage in its size and compatibility, and while many of these high-end air coolers are 160mm tall or more, the NH-D12L is just 145mm. In some cases, even 15mm could make a big difference.

CPU cooler

TSMC's 1.6nm Technology Announced for Late 2026: A16 with "Super Power Rail" Backside Power

With the arrival of spring comes showers, flowers, and in the technology industry, TSMC's annual technology symposium series. With customers spread all around the world, the Taiwanese pure play foundry has adopted an interesting strategy for updating its customers on its fab plans, holding a series of symposiums from Silicon Valley to Shanghai. Kicking off the series every year – and giving us our first real look at TSMC's updated foundry plans for the coming years – is the Santa Clara stop, where yesterday the company detailed several new technologies, ranging from more advanced lithography processes to massive, wafer-scale chip packaging options.

Today we're publishing several stories based on TSMC's different offerings, starting with TSMC's marquee announcement: their A16 process node. Meanwhile, for the rest of our symposium stories, please be sure to check out the related reading below, and check back for additional stories.

- TSMC 2nm Update: N2 In 2025, N2P Loses Backside Power, and NanoFlex Brings Optimal Cells

- TSMC Preps Cheaper 4nm N4C Process For 2025, Aiming For 8.5% Cost Reduction

- TSMC's System-on-Wafer Platform Goes 3D: CoW-SoW Stacks Up the Chips

- TSMC Jumps Into Silicon Photonics, Lays Out Roadmap For 12.8 Tbps COUPE On-Package Interconnect

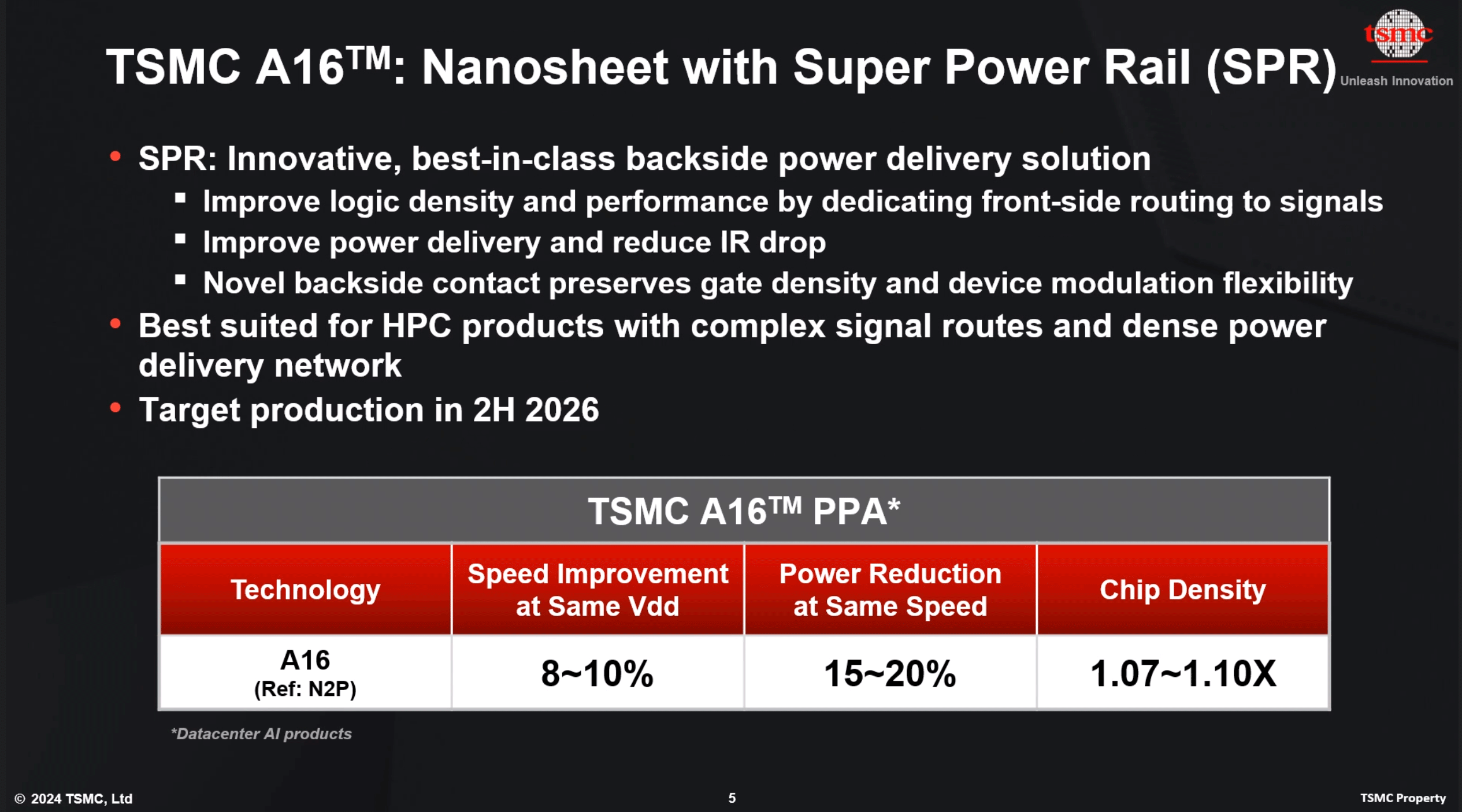

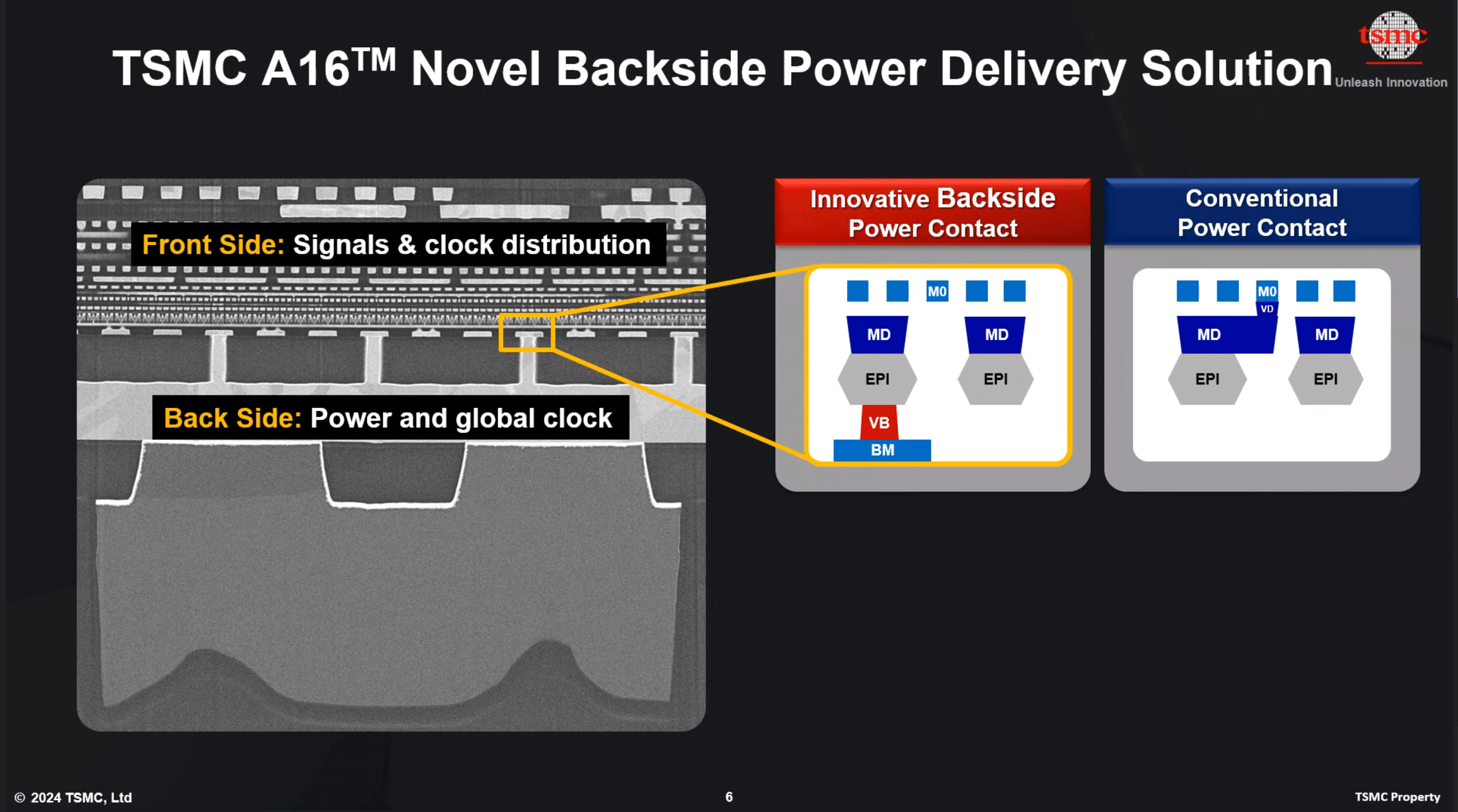

Headlining its Silicon Valley stop, TSMC announced its first 'angstrom-class' process technology: A16. Following a production schedule shift that has seen backside power delivery network technology (BSPDN) removed from TSMC's N2P node, the new 1.6nm-class production node will now be the first process to introduce BSPDN to TSMC's chipmaking repertoire. With the addition of backside power capabilities and other improvements, TSMC expects A16 to offer significantly improved performance and energy efficiency compared to TSMC's N2P fabrication process. It will be available to TSMC's clients starting H2 2026.

TSMC A16: Combining GAAFET With Backside Power Delivery

At a high level, TSMC's A16 process technology will rely on gate-all-around (GAAFET) nanosheet transistors and will feature a backside power rail, which will both improve power delivery and moderately increase transistor density. Compared to TSMC's N2P fabrication process, A16 is expected to offer a performance improvement of 8% to 10% at the same voltage and complexity, or a 15% to 20% reduction in power consumption at the same frequency and transistor count. TSMC is not listing detailed density parameters this far out, but the company says that chip density will increase by 1.07x to 1.10x – keeping in mind that transistor density heavily depends on the type and libraries of transistors used.
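To make those ranges concrete, the sketch below applies them to a made-up N2P design. The baseline numbers (3.0 GHz, 100 W, density normalized to 1.0) are purely hypothetical, and treating "performance" as clock frequency is our simplification. Note that the speed gain and the power saving are alternatives, not something a design gets simultaneously.

```python
# Ranges quoted for A16 relative to N2P.
PERF_GAIN = (0.08, 0.10)      # at the same voltage and complexity
POWER_CUT = (0.15, 0.20)      # at the same frequency and transistor count
DENSITY_GAIN = (1.07, 1.10)   # chip density multiplier


def project_a16(n2p_freq_ghz, n2p_power_w, n2p_density):
    """Return (low, high) A16 projections for a hypothetical N2P design.

    The frequency and power projections are either/or options.
    """
    freq = tuple(n2p_freq_ghz * (1 + gain) for gain in PERF_GAIN)
    # A larger cut means lower power, so sort to keep (low, high) order.
    power = tuple(sorted(n2p_power_w * (1 - cut) for cut in POWER_CUT))
    density = tuple(n2p_density * mult for mult in DENSITY_GAIN)
    return {"freq_ghz": freq, "power_w": power, "density": density}


if __name__ == "__main__":
    # Hypothetical baseline design, chosen only for illustration.
    for name, (low, high) in project_a16(3.0, 100.0, 1.0).items():
        print(f"{name}: {low:.2f} to {high:.2f}")
```

For this hypothetical baseline, the quoted ranges work out to roughly 3.24 to 3.30 GHz at the same power, or 80 to 85 W at the same 3.0 GHz.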

The key innovation of TSMC's A16 node is its Super Power Rail (SPR) backside power delivery network, a first for TSMC. The contract chipmaker claims that A16's SPR is specifically tailored for high-performance computing products that feature both complex signal routes and dense power circuitry.

As noted earlier, with this week's announcement, A16 has now become the launch vehicle for backside power delivery at TSMC. The company was initially slated to offer BSPDN technology with N2P in 2026, but for reasons that aren't entirely clear, the tech has been punted from N2P and moved to A16. TSMC's official timing for N2P in 2023 was always a bit loose, so it's hard to say if this represents much of a practical delay for BSPDN at TSMC. But at the same time, it's important to underscore that A16 isn't just N2P renamed, but rather it will be a distinct technology from N2P.

TSMC is not the only fab pursuing backside power delivery, and accordingly, we're seeing multiple variations on the technique crop up at different fabs. The...

Semiconductors

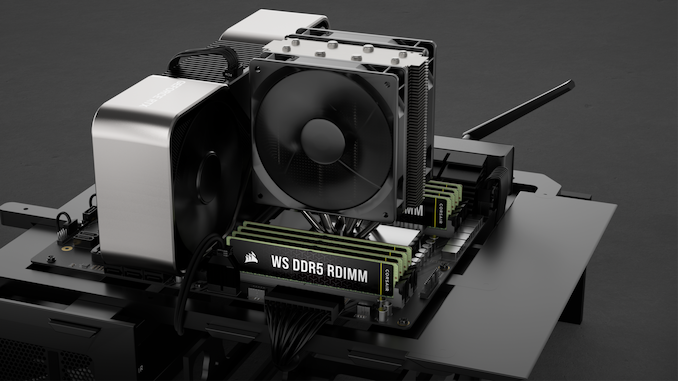

Corsair Enters Workstation Memory Market with WS Series XMP/EXPO DDR5 RDIMMs

Corsair has introduced a family of registered memory modules with ECC that are designed for AMD's Ryzen Threadripper 7000 and Intel's Xeon W-2400/3400-series processors. The new Corsair WS DDR5 RDIMMs with AMD EXPO and Intel XMP 3.0 profiles will be available in kits of up to 256 GB capacity and at speeds of up to 6400 MT/s.

Corsair's family of WS DDR5 RDIMMs includes 16 GB modules operating at up to 6400 MT/s with CL32 latency as well as 32 GB modules functioning at 5600 MT/s with CL40 latency. At present, Corsair offers a quad-channel 64 GB kit (4×16 GB, up to 6400 MT/s), a quad-channel 128 GB kit (4×32 GB, 5600 MT/s), an eight-channel 128 GB kit (8×16 GB, 5600 MT/s), and an eight-channel 256 GB kit (8×32 GB, 5600 MT/s), and it remains to be seen whether the company will expand the lineup.
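For a rough sense of what those data rates mean, the sketch below computes theoretical peak bandwidth per kit. It assumes one module per memory channel and 64 data bits (8 bytes) per transfer per channel, with ECC bits excluded. Real-world throughput will be lower, and the helper name is ours.

```python
BYTES_PER_TRANSFER = 8  # 64 data bits per DDR5 channel, ECC bits excluded


def peak_bandwidth_gbs(mt_per_s, channels):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes).

    Assumes every channel is populated with one module at the rated speed.
    """
    return mt_per_s * 1_000_000 * BYTES_PER_TRANSFER * channels / 1e9


# (kit, data rate in MT/s, channels) as listed in the article.
KITS = [
    ("4x16 GB", 6400, 4),
    ("4x32 GB", 5600, 4),
    ("8x16 GB", 5600, 8),
    ("8x32 GB", 5600, 8),
]

if __name__ == "__main__":
    for name, rate, channels in KITS:
        print(f"{name} @ {rate} MT/s: "
              f"{peak_bandwidth_gbs(rate, channels):.1f} GB/s peak")
```

On paper, the quad-channel 6400 MT/s kit tops out at 204.8 GB/s, while the eight-channel 5600 MT/s kits reach 358.4 GB/s, so channel count matters more than the speed bump here.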

Corsair's WS DDR5 RDIMMs are designed for AMD's TRX50 and WRX90 platforms as well as Intel's W790 platform and are therefore compatible with AMD's Ryzen Threadripper 7000 and Pro 7000WX-series as well as Intel's Xeon W-2400/3400-series CPUs. The modules feature both AMD EXPO and Intel XMP 3.0 profiles to easily set their beyond-JEDEC-spec settings and come with thin heat spreaders made of pyrolytic graphite sheet (PGS), which has higher thermal conductivity than copper or aluminum of the same thickness. For now, Corsair has not disclosed which RCD and memory chips its registered memory modules use.

Unlike many of its rivals among leading DIMM manufacturers, Corsair did not introduce its enthusiast-grade RDIMMs when AMD and Intel released their Ryzen Threadripper and Xeon W-series platforms for extreme workstations last year. It is hard to tell what the reason for that is, but perhaps the company wanted to gain experience working with modules featuring registered clock drivers (RCDs) as well as AMD's and Intel's platforms for extreme workstations.

The result of the delay looks to be quite rewarding: unlike modules from its competitors, which feature either AMD EXPO or Intel XMP 3.0 profiles, Corsair's WS DDR5 RDIMMs come with both. While this may not be important on the DIY market, where people know exactly what they are buying for their platform, it is a great feature for system integrators, who can use Corsair WS DDR5 RDIMMs for both their AMD Ryzen Threadripper and Intel Xeon W-series builds, something that greatly simplifies their inventory management.

Since Corsair's WS DDR5 RDIMMs are aimed at workstations and are tested to offer reliable performance beyond JEDEC specifications, they are quite expensive. The cheapest 64 GB DDR5-5600 CL40 kit costs $450, the fastest 64 GB DDR5-6400 CL32 kit is priced at $460, whereas the highest-end 256 GB DDR5-5600 CL40 kit is priced at $1,290.
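As a quick comparison, the prices above can be normalized to cost per gigabyte. This is simple arithmetic on the three listed prices; the dictionary layout and function name are ours.

```python
# Kit name -> (capacity in GB, price in USD), as listed in the article.
KIT_PRICES = {
    "64 GB DDR5-5600 CL40": (64, 450),
    "64 GB DDR5-6400 CL32": (64, 460),
    "256 GB DDR5-5600 CL40": (256, 1290),
}


def price_per_gb(capacity_gb, price_usd):
    """Return the kit price in USD per gigabyte."""
    return price_usd / capacity_gb


if __name__ == "__main__":
    for kit, (capacity, price) in KIT_PRICES.items():
        print(f"{kit}: ${price_per_gb(capacity, price):.2f}/GB")
```

By this measure the 256 GB kit is the cheapest per gigabyte at about $5.04, versus roughly $7.03 and $7.19 for the two 64 GB kits.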

Memory


- https://www.amazon.com/Oupeng-sky-Carabiner-Clip-Ring/dp/B07MSBZ7BZ?tag=all0ad0-21https://m.media-amazon.com/images/I/51Bxk8se22L.jpg

- https://www.amazon.com/Over-Top-Jonathan-Van-Ness-audiobook/dp/B07Q386LM5?tag=all0ad0-21https://m.media-amazon.com/images/I/51rcfpVI5UL.jpg

- https://www.amazon.com/Padfolio-Portfolio-Interview-Document-Organizer/dp/B07VLPS9ZK?tag=all0ad0-21https://m.media-amazon.com/images/I/41CSIOr0ZoL.jpg

- https://www.amazon.com/Pairs-Heavy-Ratchet-Tie-Mount-Crossbar-Easy/dp/B0725Z9LSB?tag=all0ad0-21https://m.media-amazon.com/images/I/41xI-f4Jj9L.jpg

- https://www.amazon.com/Paperwhite-Generation-Signature-Lightweight-Transparent/dp/B0C8B1JJYZ?tag=all0ad0-21https://m.media-amazon.com/images/I/41MpXChQpIL.jpg

- https://www.amazon.com/Paw-Patrol-Collectible-DIE-CAST-Vehicles/dp/B07S6VH6DD?tag=all0ad0-21https://m.media-amazon.com/images/I/41nC9yFe1dL.jpg

- https://www.amazon.com/Peace-nest-Checkered-Checkerboard-Lightweight/dp/B0BX969J3C?tag=all0ad0-21https://m.media-amazon.com/images/I/51D8BO3dasL.jpg

- https://www.amazon.com/Perfect-Run-Book/dp/B09SVMRP12?tag=all0ad0-21https://m.media-amazon.com/images/I/51zkV2RkC2L.jpg

- https://www.amazon.com/Pet-Grooming-Brush-Double-Sided-Blue/dp/B0BRPZY67Z?tag=all0ad0-21https://m.media-amazon.com/images/I/41iA2Z29chL.jpg

- https://www.amazon.com/Pieces-Washed-Reversible-Cooling-Closure/dp/B094FGZ3XD?tag=all0ad0-21https://m.media-amazon.com/images/I/41hX+gmSQvL.jpg

- https://www.amazon.com/PINHEN-Stabilizer-360%C2%B0Rotate-Hands-Free-Compatible/dp/B09N8VL6VT?tag=all0ad0-21https://m.media-amazon.com/images/I/41j3QfO4JLL.jpg

- https://www.amazon.com/Planner-2023-2024-Academic-Calendar-Hardcover/dp/B0BP22KCYV?tag=all0ad0-21https://m.media-amazon.com/images/I/51xfDnKUkSL.jpg

- https://www.amazon.com/Ponytail-hoyuwak-Rhinestone-Accessories-Silver/dp/B0C1GRQNLM?tag=all0ad0-21https://m.media-amazon.com/images/I/51Jl+ePN1vL.jpg

- https://www.amazon.com/Portable-Wireless-Espresso-Machine-Freshly-brewed/dp/B09LCKLYGT?tag=all0ad0-21https://m.media-amazon.com/images/I/21uyv4z55pL.jpg

- https://www.amazon.com/Premom-Quantitative-Ovulation-Predictor-Numerical/dp/B07P7LSW57?tag=all0ad0-21https://m.media-amazon.com/images/I/51dY9dReMhL.jpg

- https://www.amazon.com/Professional-Pedicure-Rosmax-Stainless-Washable/dp/B08TM7TH1N?tag=all0ad0-21https://m.media-amazon.com/images/I/519tcTL-K8L.jpg

- https://www.amazon.com/Projector-Bluetooth-15000Lumens-Portable-Compatible/dp/B0CGXHVB5D?tag=all0ad0-21https://m.media-amazon.com/images/I/414hYl+AJuL.jpg

- https://www.amazon.com/Projector-Control-Bluetooth-Dimmable-Projection/dp/B09F95JS41?tag=all0ad0-21https://m.media-amazon.com/images/I/61GYRolZ-9L.jpg

- https://www.amazon.com/Projector-HOMPOW-Bluetooth-Correction-Compatible/dp/B0BCKV1VHX?tag=all0ad0-21https://m.media-amazon.com/images/I/616j3cS2O9L.jpg

- https://www.amazon.com/Protector-Coverage-Protection-Installation-Specially/dp/B0B87THFLK?tag=all0ad0-21https://m.media-amazon.com/images/I/41tlgaNVLCL.jpg

- https://www.amazon.com/Protectors-Furniture-Scratches-Hardwood-Large/dp/B0CHB4ZR22?tag=all0ad0-21https://m.media-amazon.com/images/I/51UIaW9XlXL.jpg

- https://www.amazon.com/Purifier-Purifiers-VEWIOR-Settings-Ultra-Quiet/dp/B0B41Z7B6H?tag=all0ad0-21https://m.media-amazon.com/images/I/41o0rCSrKcL.jpg

- https://www.amazon.com/Purifiers-Purifier-Aromatherapy-Function-Filtration/dp/B0C5GCPDV2?tag=all0ad0-21https://m.media-amazon.com/images/I/41KvpYD7k5L.jpg

- https://www.amazon.com/QAWDAWM-Conduction-Headphones-Bluetooth-Waterproof/dp/B0CGR1799N?tag=all0ad0-21https://m.media-amazon.com/images/I/41trzwTzdKL.jpg

- https://www.amazon.com/REDESS-Beanie-Women-Winter-Slouchy/dp/B0BZVDHFNJ?tag=all0ad0-21https://m.media-amazon.com/images/I/51hHyl+rkWL.jpg

- https://www.amazon.com/Robot-Vacuum-Mop-Combo-Self-Charging/dp/B0CD3XTMS1?tag=all0ad0-21https://m.media-amazon.com/images/I/518O5sALu1L.jpg

- https://www.amazon.com/RONGPRO-Combination-Carpenter-Zinc-Alloy-Die-Casting/dp/B09GVQRZVK?tag=all0ad0-21https://m.media-amazon.com/images/I/41uHEayHMEL.jpg

- https://www.amazon.com/ROOMLIFE-Chenille-Slipcover-Loveseat-Sectional/dp/B0BJ5T5Z91?tag=all0ad0-21https://m.media-amazon.com/images/I/51VNyhUtziL.jpg

- https://www.amazon.com/Ryan-Rule-York-Ruthless-Book/dp/B0BPJSLNDX?tag=all0ad0-21https://m.media-amazon.com/images/I/51o9mZQpbgL.jpg

- https://www.amazon.com/Sandstorm-Street-Rats-Aramoor-Book/dp/B09QDYGXGT?tag=all0ad0-21https://m.media-amazon.com/images/I/61g7nbsvDiL.jpg

- https://www.amazon.com/Santoku-Knife-Stainless-Ergonomic-Restaurant/dp/B0865TNBKC?tag=all0ad0-21https://m.media-amazon.com/images/I/41bVfVBEhOL.jpg

- https://www.amazon.com/SATC-Woodworking-Carpenters-Gardening-Resistant/dp/B09WYGJJ3F?tag=all0ad0-21https://m.media-amazon.com/images/I/31U+zqjEzNL.jpg

- https://www.amazon.com/Scissors-ULG-Hairdressing-Stainless-Detachable/dp/B09ZTZYDT2?tag=all0ad0-21https://m.media-amazon.com/images/I/315+PnPQhmL.jpg

- https://www.amazon.com/SeaVees-Mens-Standard-Casual-Sneaker/dp/B008TUCU1A?tag=all0ad0-21https://m.media-amazon.com/images/I/31z4PJ-dnQL.jpg

- https://www.amazon.com/Security-Lighting-Waterproof-Outdoor-Basketball/dp/B09GV2B545?tag=all0ad0-21https://m.media-amazon.com/images/I/41Hh9Px9UzL.jpg

- https://www.amazon.com/Shadow-Dark-Queen-Serpentwar-Saga/dp/B07YCT8PKM?tag=all0ad0-21https://m.media-amazon.com/images/I/517+deIbkiL.jpg

- https://www.amazon.com/Shadowplay-Spellmonger-Legacy-Secrets-Book/dp/B09DZ4S8MG?tag=all0ad0-21https://m.media-amazon.com/images/I/51mV-QcOzOL.jpg

- https://www.amazon.com/Shamrocks-Bedroom-Bathroom-Kitchen-Hallway/dp/B07ZV5DSTN?tag=all0ad0-21https://m.media-amazon.com/images/I/51J0KqPk6ML.jpg

- https://www.amazon.com/Silicone-Reusable-AiKanbo-Airtight-Preservation/dp/B09X1LXS9B?tag=all0ad0-21https://m.media-amazon.com/images/I/41fNI3t5hYS.jpg

- https://www.amazon.com/Sixriver-Crimper-Straightener-Crimping-Volumizing/dp/B0C85XZFM8?tag=all0ad0-21https://m.media-amazon.com/images/I/51OrfENeNCL.jpg

- https://www.amazon.com/Skyfoot-Adjustable-Increase-Insoles-Cushioning/dp/B0BN5V3PPF?tag=all0ad0-21https://m.media-amazon.com/images/I/41uwN2FiiiL.jpg

- https://www.amazon.com/skysen-Magnetic-Decorative-Handles-Hardware/dp/B07L72D7FX?tag=all0ad0-21https://m.media-amazon.com/images/I/51AIynaJumL.jpg

- https://www.amazon.com/STAR-WARS-SW-Halloween-Wookiee/dp/B0BHZVTNQV?tag=all0ad0-21https://m.media-amazon.com/images/I/411fjZsBYrL.jpg

- https://www.amazon.com/Stens-375-402-Black-Decker-82-020/dp/B01H5K2QSQ?tag=all0ad0-21https://m.media-amazon.com/images/I/21Tkj5Btb0L.jpg

- https://www.amazon.com/Stickers-Bottles-Waterproof-Animals-Skateboard/dp/B09S3JSNV3?tag=all0ad0-21https://m.media-amazon.com/images/I/61E2JiiBkML.jpg

- https://www.amazon.com/Still-Just-Geek-Annotated-Memoir/dp/B09HZB3WGP?tag=all0ad0-21https://m.media-amazon.com/images/I/51Ee05wyCwL.jpg

- https://www.amazon.com/Straightener-MiroPure-Straightening-Anti-Scald-Temperature/dp/B06XGXP9RP?tag=all0ad0-21https://m.media-amazon.com/images/I/41okvbcUEnL.jpg

- https://www.amazon.com/Summit-Treestands-Replacement-Cables-Climbing/dp/B001BAGLXI?tag=all0ad0-21https://m.media-amazon.com/images/I/41vANZDPuCL.jpg

- https://www.amazon.com/Support-Car-Car-Support-Memory-Car-Driving/dp/B07MV5X84K?tag=all0ad0-21https://m.media-amazon.com/images/I/51iEP2LoKmL.jpg

- https://www.amazon.com/Surface-Charger-Microsoft-Compatible-Laptop/dp/B0BW41H1W2?tag=all0ad0-21https://m.media-amazon.com/images/I/31Xt8mDjKyL.jpg

- https://www.amazon.com/Survival-Essentials-Tactical-Emergency-Activities/dp/B0BFFC8ZTV?tag=all0ad0-21https://m.media-amazon.com/images/I/512QQyOIcjL.jpg

- https://www.amazon.com/SwaggWood-Certified-Lightning-Charging-Compatible/dp/B0CFQF6LS6?tag=all0ad0-21https://m.media-amazon.com/images/I/41vq56QWFNL.jpg

- https://www.amazon.com/Tablecloth-Disposable-Surfboard-Rectangle-Birthday/dp/B09X2GK6Z7?tag=all0ad0-21https://m.media-amazon.com/images/I/51M30CIsW2L.jpg

- https://www.amazon.com/Tanming-Womens-Seamless-Workout-Running/dp/B0BHY73PWB?tag=all0ad0-21https://m.media-amazon.com/images/I/310AJZGSfCL.jpg

- https://www.amazon.com/Tear-off-Productivity-Anna-Marie-Collections/dp/B09GDDCMJP?tag=all0ad0-21https://m.media-amazon.com/images/I/41yZVQwPEZL.jpg

- https://www.amazon.com/Textures-Graphite-Charcoal-Steven-Pearce-ebook/dp/B01N7Y0XP5?tag=all0ad0-21https://m.media-amazon.com/images/I/61XIPXXBL5L.jpg

- https://www.amazon.com/The-Beginning-of-Everything-audiobook/dp/B081NXJVT5?tag=all0ad0-21https://m.media-amazon.com/images/I/51aG06izsLL.jpg

- https://www.amazon.com/The-Enlightenment/dp/B07VHFHN3Y?tag=all0ad0-21https://m.media-amazon.com/images/I/51ArbS5NSDL.jpg

- https://www.amazon.com/The-Good-Lie/dp/B08QVNGF3M?tag=all0ad0-21https://m.media-amazon.com/images/I/51UPMGqzseS.jpg

- https://www.amazon.com/Thermal-Moisture-Wicking-Breathable-Charcoal/dp/B0929CZSL2?tag=all0ad0-21https://m.media-amazon.com/images/I/61GFFLF2InL.jpg

- https://www.amazon.com/Tiny-Worlds-flashcards-Preschoolers-FlashCards/dp/9811186480?tag=all0ad0-21https://m.media-amazon.com/images/I/51Du0jKtPzL.jpg

- https://www.amazon.com/TOZO-G1-Headphones-Sensitivity-Low-Latency/dp/B0B31GZW61?tag=all0ad0-21https://m.media-amazon.com/images/I/41E2gk95+aL.jpg

- https://www.amazon.com/Traffic-Secrets-Underground-Playbook-Customers/dp/B08B9XH6KH?tag=all0ad0-21https://m.media-amazon.com/images/I/51hWa7NS0NL.jpg

- https://www.amazon.com/Twinkle-Star-Decorative-Waterproof-Decorations/dp/B098JY29L7?tag=all0ad0-21https://m.media-amazon.com/images/I/616wtcSojML.jpg

- https://www.amazon.com/TWOPAN-Docking-Station-Charging-Reader/dp/B08DP397VJ?tag=all0ad0-21https://m.media-amazon.com/images/I/51uMUogb87L.jpg

- https://www.amazon.com/ULA-YUAN-Earrings-Sterling-Lightweight-Zirconia/dp/B0C4TD5XT1?tag=all0ad0-21https://m.media-amazon.com/images/I/41xB7mwzhLL.jpg

- https://www.amazon.com/Ultrean-Scale%EF%BC%8C33lb-Graduation-Rechargeable-Function/dp/B0C4T7DYPF?tag=all0ad0-21https://m.media-amazon.com/images/I/51AeRXTVp5L.jpg

- https://www.amazon.com/undercoat-grooming-couch-remover-cleaning/dp/B0BQXW1KKT?tag=all0ad0-21https://m.media-amazon.com/images/I/412YRiMjcUL.jpg

- https://www.amazon.com/Unfinished-Large-Unpainted-Birthday-Decoration/dp/B0C8YQCQBY?tag=all0ad0-21https://m.media-amazon.com/images/I/31bPd6gVmSL.jpg

- https://www.amazon.com/UniLiGis-Washable-Backpack-Adjustable-Drawstring/dp/B08CDZDKP2?tag=all0ad0-21https://m.media-amazon.com/images/I/413CAK6mBXL.jpg

- https://www.amazon.com/Uniquewise-QI003353R-L-Handcrafted-Burgundy-Natural/dp/B07F866473?tag=all0ad0-21https://m.media-amazon.com/images/I/313YcWeXFoL.jpg

- https://www.amazon.com/VacLife-Cordless-Charger-350CFM-Electric-High-Speed/dp/B0C6MZZLTT?tag=all0ad0-21https://m.media-amazon.com/images/I/41bEaK78nKL.jpg

- https://www.amazon.com/VAV-Infrared-Strong-Diffuser-Concentrator/dp/B07H8SR9K7?tag=all0ad0-21https://m.media-amazon.com/images/I/41uNl6-KSPL.jpg

- https://www.amazon.com/Vilucks-Reusable-Universal-Microfiber-Cloths/dp/B09TPPK2T6?tag=all0ad0-21https://m.media-amazon.com/images/I/21tCfu9A9wL.jpg

- https://www.amazon.com/Vooii-iPhone-Silicone-Protective-Microfiber/dp/B07Z7LY135?tag=all0ad0-21https://m.media-amazon.com/images/I/41LTfdLnNVL.jpg

- https://www.amazon.com/Walking-Sam-Father-Hundred-Across/dp/B0BJ14HHVD?tag=all0ad0-21https://m.media-amazon.com/images/I/51xw7IcZbAL.jpg

- https://www.amazon.com/Watchers-gripping-debut-horror-novel-ebook/dp/B08TP9ZQY5?tag=all0ad0-21https://m.media-amazon.com/images/I/41g5T7Vj8vL.jpg

- https://www.amazon.com/Waterproof-Exquisitely-Lengthening-Thickening-Smudge-Proof/dp/B09FJK67YH?tag=all0ad0-21https://m.media-amazon.com/images/I/51X6cb5qVvL.jpg