Hot Posts

PCI-SIG Completes CopprLink Cabling Standard: PCIe 5.0 & 6.0 Get Wired

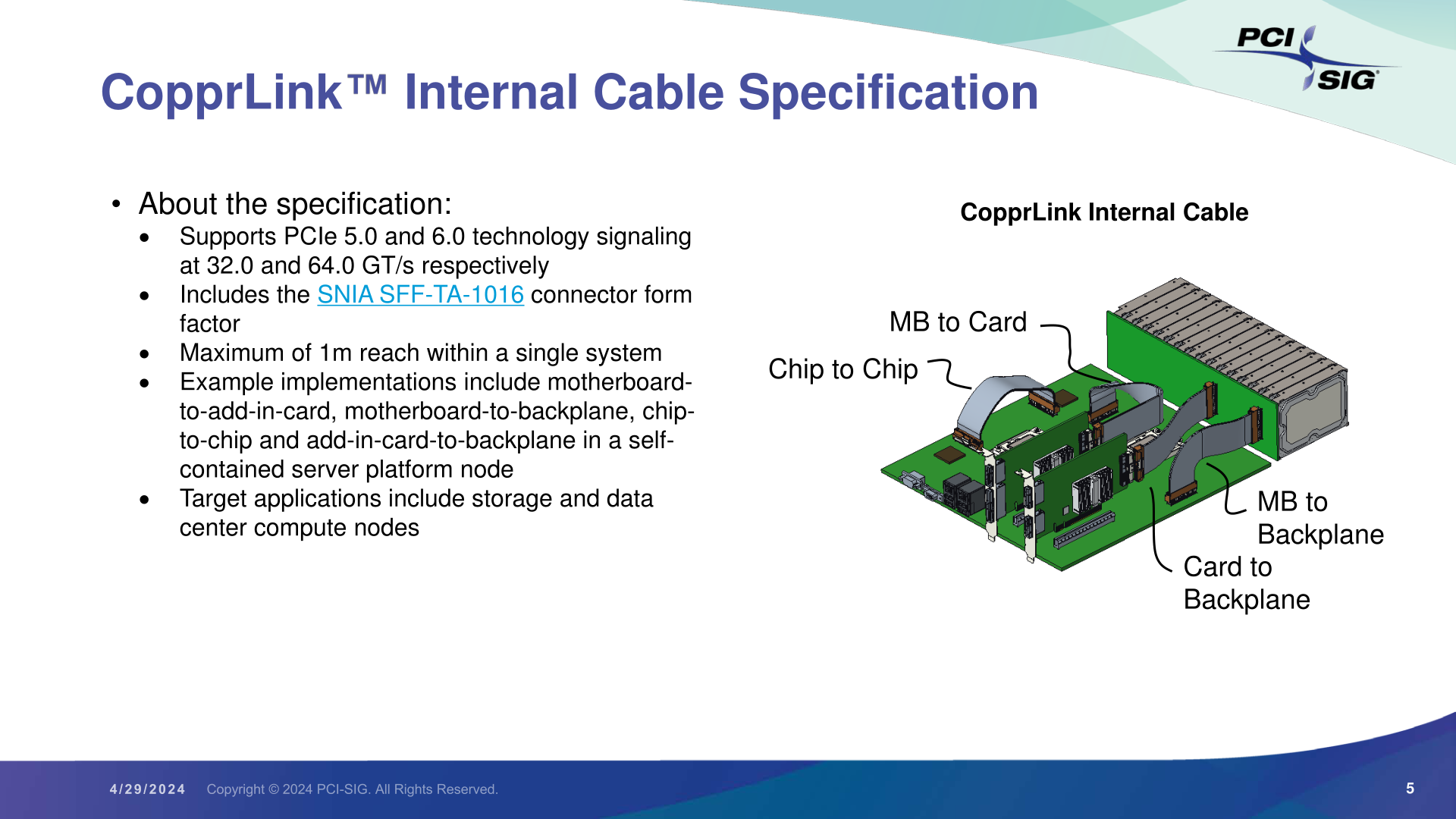

The PCI-SIG sends word over this morning that the special interest group has completed their development efforts on the group’s new PCI-Express cabling standard, CopprLink. Designed to go hand-in-hand with PCIe 5.0 and PCIe 6.0, CopprLink defines both internal and external copper cabling for the latest PCIe standards, giving system vendors and assemblers the ability to use wires to connect devices within a system, or even whole systems.

The CopprLink standard is, in practice, a pair of standards sharing the same brand-name under the PCI-SIG umbrella. The internal standard, “CopprLink Internal Cable”, is designed to allow for a new generation of PCIe cables up to 1 meter in length that are capable of sustaining PCIe 5.0 and PCIe 6.0 signaling. Internal CopprLink effectively supplants a host of older internal PCIe cabling standards (including the abandoned OCuLink), which were originally designed for earlier generations of PCIe signaling.

At a high level, internal CopprLink is intended to provide not only host-to-device connectivity, but even more transparent backhaul applications such as motherboard-to-backplane connectivity, and unique applications such as chip-to-chip PCIe connections. In other words, CopprLink allows for cabled PCIe to be used in almost any situation where a PCIe connection needs to be established within a system. Strictly speaking, CopprLink doesn't replace the PCIe CEM connector in any way – but the relatively thick copper cables have less signal loss than PCB traces, making a cabled standard extremely useful even for internal connections. PCI-SIG sees CopprLink cables taking hold in the storage and data center markets, product categories where we already see PCIe cabling in use today.

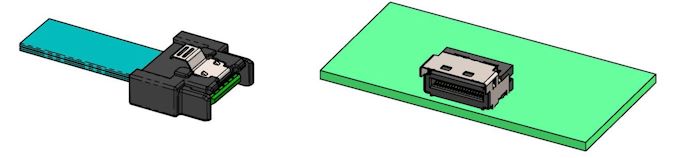

The companion connector standard for internal CopprLink is the SNIA-developed SFF-TA-1016 connector, which bears more than a passing resemblance to the widely-used SFF-8654 (SlimSAS) connector. SFF-TA-1016 is available in x4, x8, and x16 configurations, and while the PCI-SIG doesn’t go so far as to define widths within their own standard, the connectors available paint a clear picture of the options at hand. Internal CopprLink x4 should be especially popular with storage, as we already see today.

Top: SFF-TA-1016 Family of Connectors (Figure 4-1, Image Courtesy SNIA)

Bottom: Sample SFF-TA-1016 x4 Contact Plug and Receptacle (Figure 4-2, Image Courtesy SNIA)

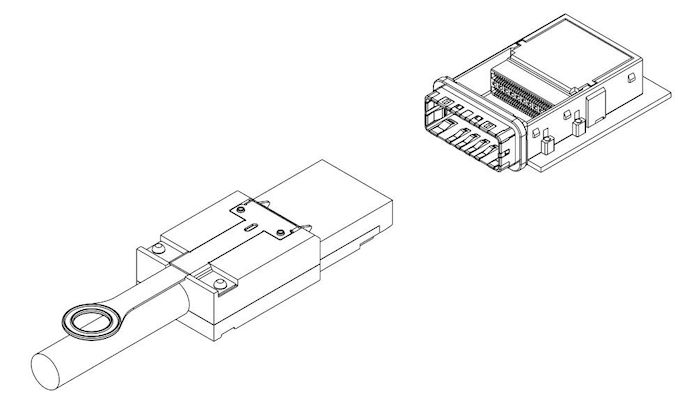

Meanwhile, the group has also developed an external cabling standard to cover those same PCIe 5.0/6.0 data rates. External CopprLink cables can go up to 2 meters, allowing for board-to-board connections within a rack, and even short rack-to-rack PCIe connections.

The external version of CopprLink also uses a more robust connector, relying on SNIA’s SFF-TA-1032 standard. Like internal/1016, this is available with x4, x8, and x16 configurations, using 44, 68, and 120 positions/pins respectively. The PCI-SIG is expecting this version of the standard to be primarily adopted by the AI/Machine Learning markets, which need to move heaps of data between systems. Notably, however, they don’t really expect the storage market to make use of this spec – instead, they’ll be served by an updated version of the classic PCI Express External Cabling standard.

SFF-TA-1032 x16 Plug and Connector (Figure 4-1, Image Courtesy SNIA)

Finally, a bit farther out on the group’s roadmap, PCI-SIG is al...

PCIe

Popular Post

AMD Announces FSR 3.1: Seriously Improved Upscaling Quality

AMD's FidelityFX Super Resolution 3 technology package introduced a plethora of enhancements to the FSR technology on Radeon RX 6000 and 7000-series graphics cards last September. But perfection has no limits, so this week, the company is rolling out its FSR 3.1 technology, which improves upscaling quality, decouples frame generation from AMD's upscaling, and makes it easier for developers to work with FSR.

Arguably, FSR 3.1's primary enhancement is its improved temporal upscaling image quality: compared to FSR 2.2, the image flickers less at rest and no longer ghosts when in motion. This is a significant improvement, as flickering and ghosting artifacts are particularly annoying. Meanwhile, FSR 3.1 has to be implemented by game developers themselves, and the first title to support the new technology, sometime later this year, is Ratchet & Clank: Rift Apart.

Image comparisons (AMD FSR 2.2 vs. AMD FSR 3.1): Temporal Stability; Ghosting Reduction

Another significant development brought by FSR 3.1 is the decoupling of the Frame Generation feature introduced by FSR 3 from AMD's upscaling. This capability relies on a form of AMD's Fluid Motion Frames (AFMF) optical flow interpolation. It uses temporal game data like motion vectors to add an additional frame between existing ones. This ability can lead to a performance boost of up to two times in compatible games, but it was initially tied to FSR 3 upscaling, which was a limitation. Starting from FSR 3.1, it will work with other upscaling methods, though AMD refrains from saying which methods and on which hardware for now. The company has also not disclosed when it expects game developers to implement it.
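To make the "additional frame between existing ones" idea concrete, here is a minimal sketch of frame insertion. It is not AMD's algorithm: the per-pixel average below is a deliberately naive stand-in for optical flow interpolation, and the `Frame` type and `insert_midpoint_frames` helper are hypothetical names used only for illustration.

```python
from typing import List

# Hypothetical stand-in for an image: a flat list of pixel values.
Frame = List[float]


def insert_midpoint_frames(frames: List[Frame]) -> List[Frame]:
    """Insert one synthetic frame between every pair of rendered frames."""
    output: List[Frame] = []
    for current, following in zip(frames, frames[1:]):
        output.append(current)
        # Placeholder interpolation: average the two neighbours pixel by pixel.
        # A real frame generator would move pixels along motion vectors instead.
        output.append([(a + b) / 2 for a, b in zip(current, following)])
    if frames:
        output.append(frames[-1])  # the last rendered frame has no successor
    return output


rendered = [[0.0, 0.0], [2.0, 4.0], [4.0, 8.0]]
displayed = insert_midpoint_frames(rendered)
print(len(rendered), "rendered ->", len(displayed), "displayed")
```

With N rendered frames the function returns 2N - 1 frames, which is why the displayed frame rate approaches, but does not exceed, twice the rendered rate.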

In addition, AMD is bringing support for FSR 3 to Vulkan and the Xbox Game Development Kit, enabling game developers on these platforms to use it. The company is also adding FSR 3.1 to the FidelityFX API, which simplifies debugging and enables forward compatibility with updated versions of FSR.

Upon its release in September 2023, AMD FSR 3 was initially supported by two titles, Forspoken and Immortals of Aveum, with ten more games poised to join them back then. Fast forward six months, and the lineup has expanded to an impressive roster of 40 games either currently supporting or set to incorporate FSR 3 shortly. As of March 2024, FSR is supported by games like Avatar: Frontiers of Pandora, Starfield, and The Last of Us Part I. Cyberpunk 2077, Dying Light 2 Stay Human, Frostpunk 2, and Ratchet & Clank: Rift Apart will support FSR shortly.

Source: AMD

GPUs

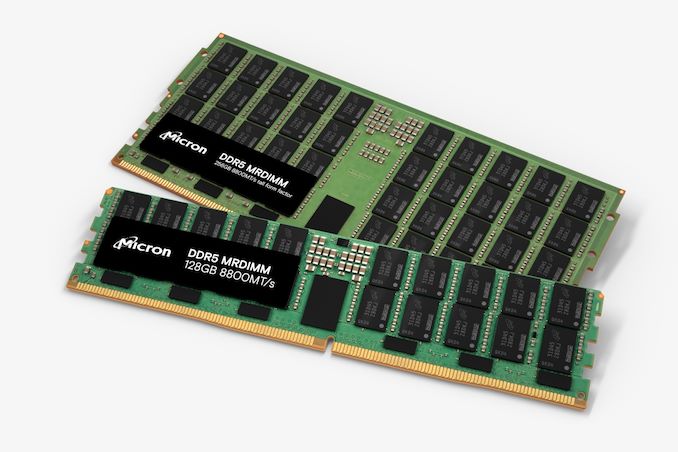

JEDEC Plans LPDDR6-Based CAMM, DDR5 MRDIMM Specifications

Following a relative lull in the desktop memory industry in the previous decade, the past few years have seen a flurry of new memory standards and form factors enter development. Joining the traditional DIMM/SO-DIMM form factors, we've seen the introduction of space-efficient DDR5 CAMM2s, their LPDDR5-based counterpart, the LPCAMM2, and the high-clockspeed optimized CUDIMM. But JEDEC, the industry organization behind these efforts, is not done there. In a press release sent out at the start of the week, the group announced that it is working on standards for DDR5 Multiplexed Rank DIMMs (MRDIMM) for servers, as well as an updated LPCAMM standard to go with next-generation LPDDR6 memory.

Just last week Micron introduced the industry's first DDR5 MRDIMMs, which are timed to launch alongside Intel's Xeon 6 server platforms. But while Intel and its partners are moving full steam ahead on MRDIMMs, the MRDIMM specification has not been fully ratified by JEDEC itself. All told, it's not unusual to see Intel pushing the envelope here on new memory technologies (the company is big enough to bootstrap its own ecosystem). But as MRDIMMs are ultimately meant to be more than just a tool for Intel, a proper industry standard is still needed – even if that takes a bit longer.

Under the hood, MRDIMMs continue to use DDR5 components, form-factor, pinout, SPD, power management ICs (PMICs), and thermal sensors. The major change with the technology is the introduction of multiplexing, which combines multiple data signals over a single channel. The MRDIMM standard also adds RCD/DB logic in a bid to boost performance, increase capacity of memory modules up to 256 GB (for now), shrink latencies, and reduce power consumption of high-end memory subsystems. And, perhaps key to MRDIMM adoption, the standard is being implemented as a backwards-compatible extension to traditional DDR5 RDIMMs, meaning that MRDIMM-capable servers can use either RDIMMs or MRDIMMs, depending on how the operator opts to configure the system.

The MRDIMM standard aims to double the peak bandwidth to 12.8 Gbps, increasing pin speed and supporting more than two ranks. Additionally, a "Tall MRDIMM" form factor is in the works (and pictured above), which is designed to allow for higher capacity DIMMs by providing more area for laying down memory chips. Currently, ultra high capacity DIMMs require using expensive, multi-layer DRAM packages that use through-silicon vias (3DS packaging) to attach the individual DRAM dies; a Tall MRDIMM, on the other hand, can just use a larger number of commodity DRAM chips. Overall, the Tall MRDIMM form factor enables twice the number of DRAM single-die packages on the DIMM.
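As a rough illustration of what the 12.8 Gbps figure means per module, the sketch below converts a per-pin data rate into module bandwidth. It assumes a 64-bit data path per DIMM (ECC bits excluded) and infers the 6.4 Gbps baseline from the "double" in the text; neither number comes from JEDEC's announcement, and the function name is hypothetical.

```python
def module_bandwidth_gb_s(pin_rate_gbps: float, data_bits: int = 64) -> float:
    """Peak module bandwidth in GB/s for a given per-pin data rate.

    data_bits=64 is an assumption (two 32-bit DDR5 sub-channels, no ECC).
    """
    return pin_rate_gbps * data_bits / 8  # divide by 8 to go from bits to bytes


# Assumed baseline: half of the 12.8 Gbps target quoted in the article.
baseline = module_bandwidth_gb_s(6.4)
mrdimm = module_bandwidth_gb_s(12.8)

print(f"Baseline: {baseline:.1f} GB/s, MRDIMM target: {mrdimm:.1f} GB/s")
print(f"Ratio: {mrdimm / baseline:.1f}x")
```

These are theoretical peaks; sustained bandwidth in a real server depends on the memory controller, access pattern, and rank configuration.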

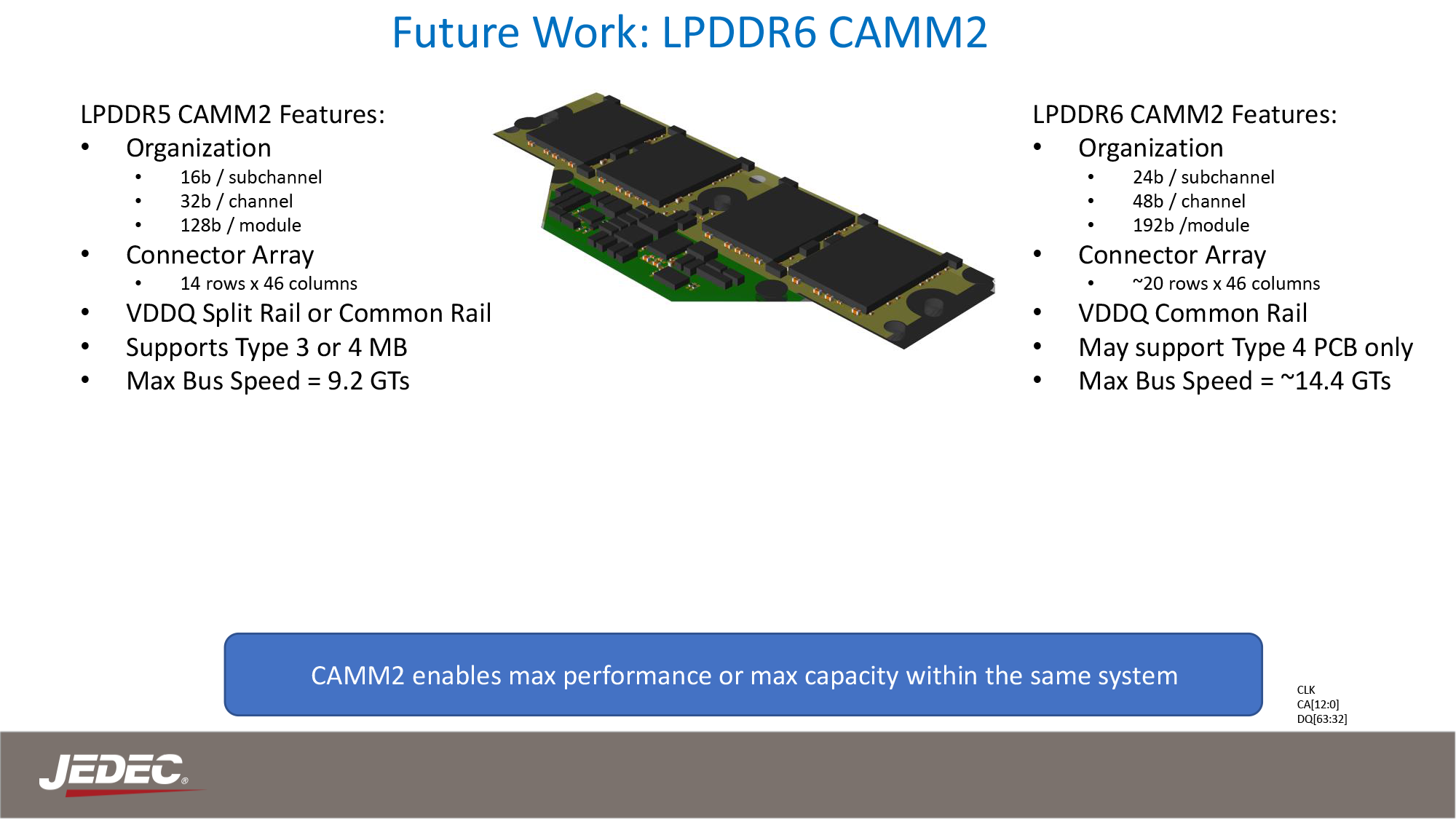

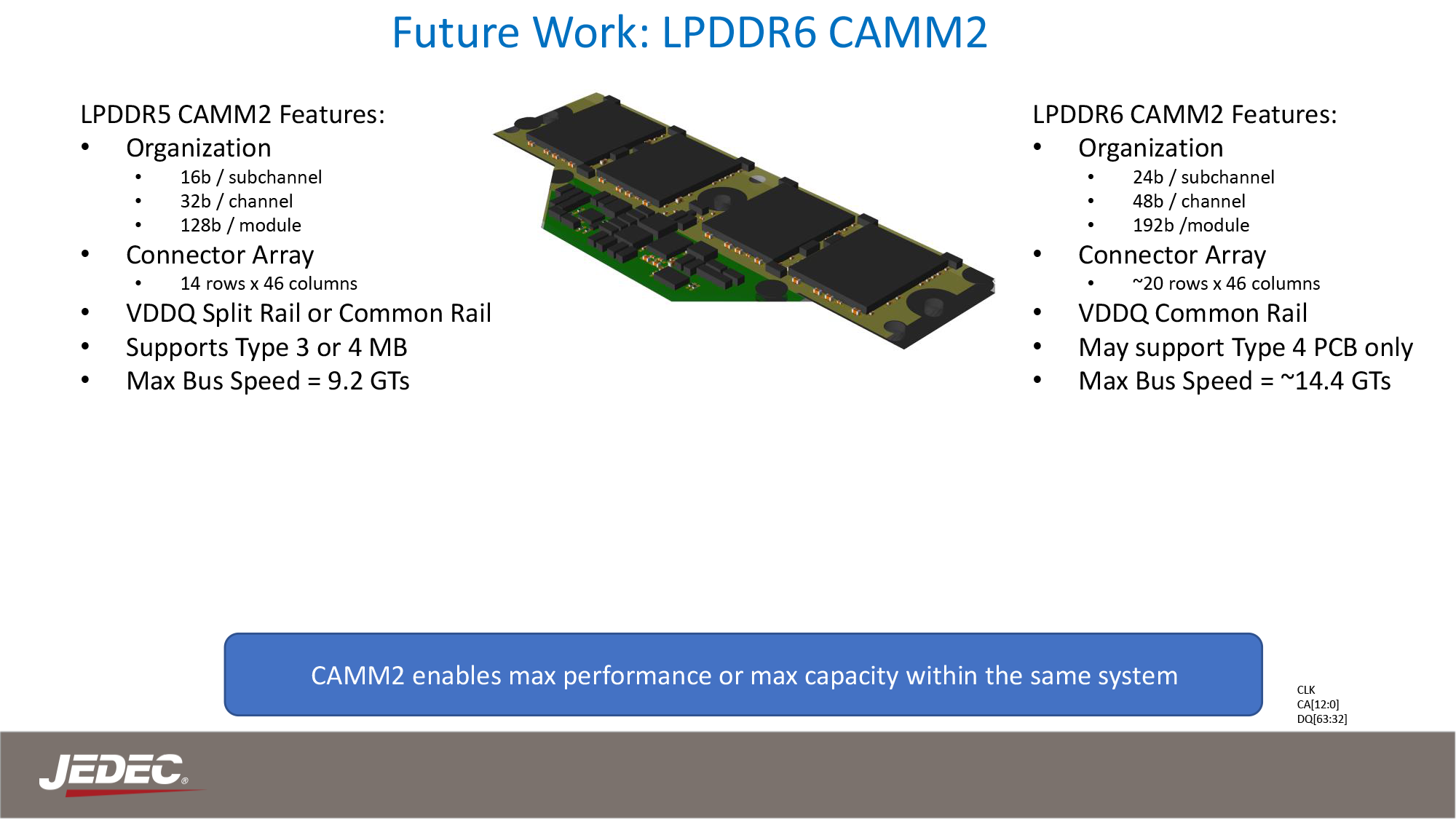

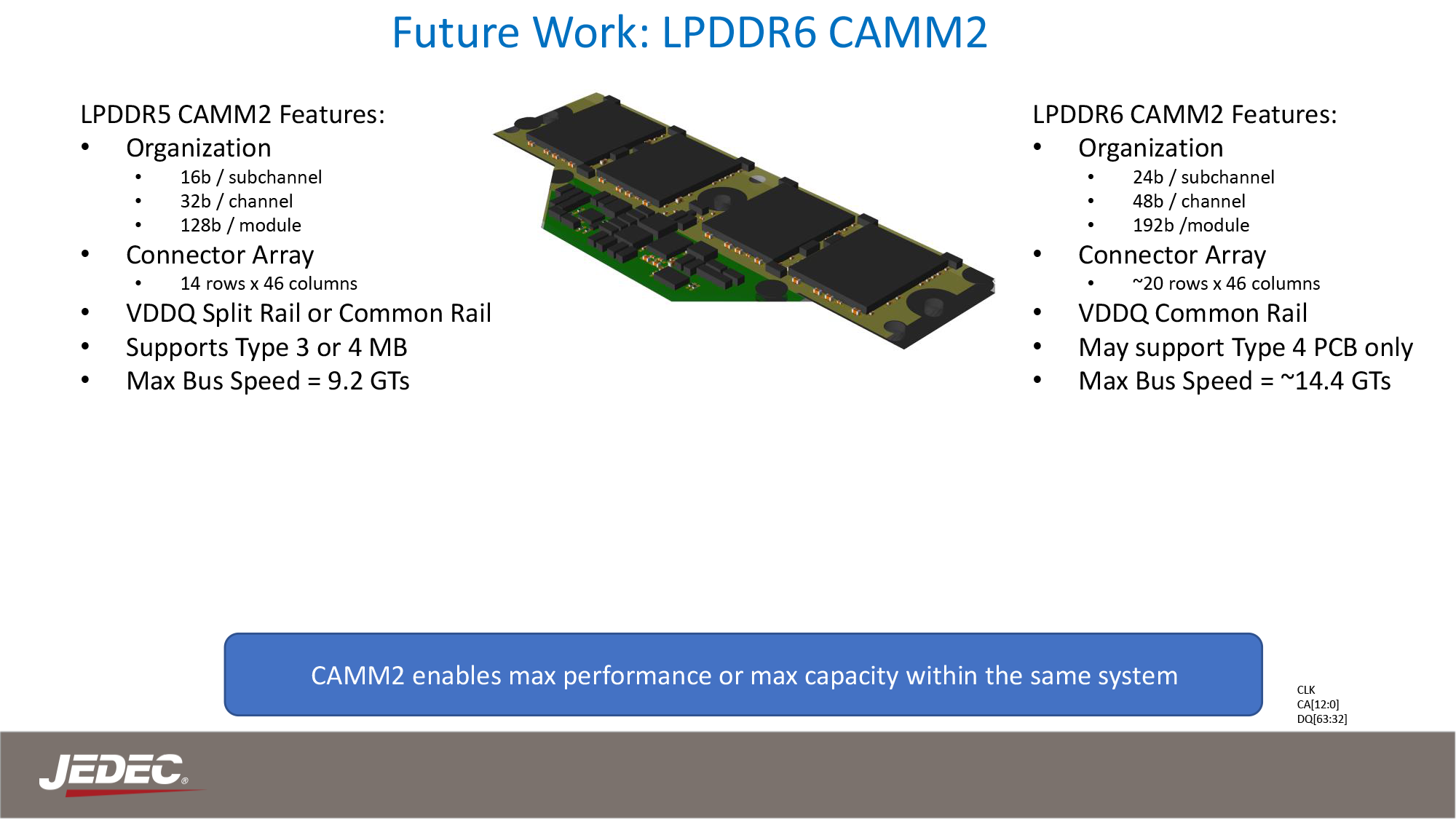

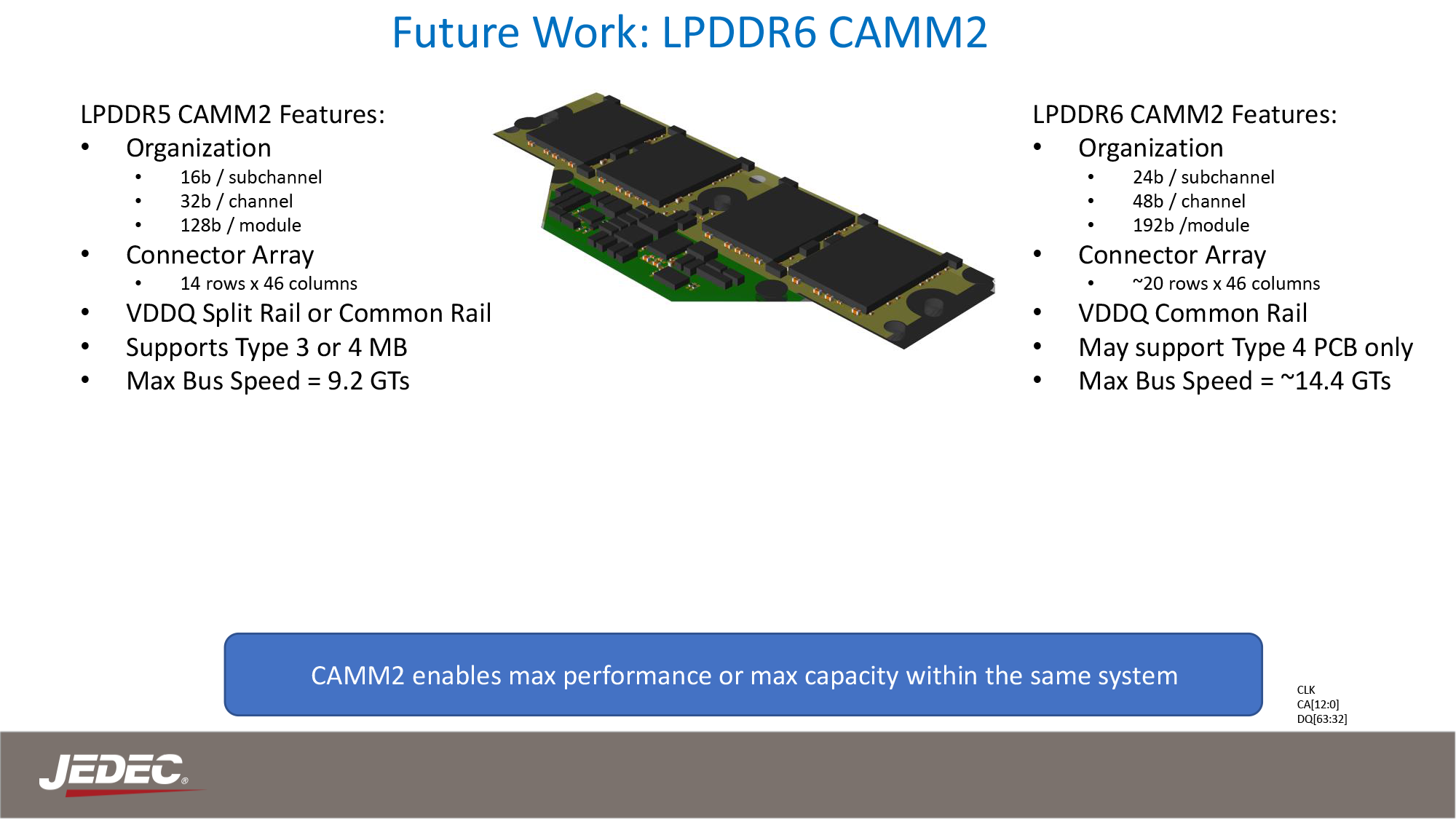

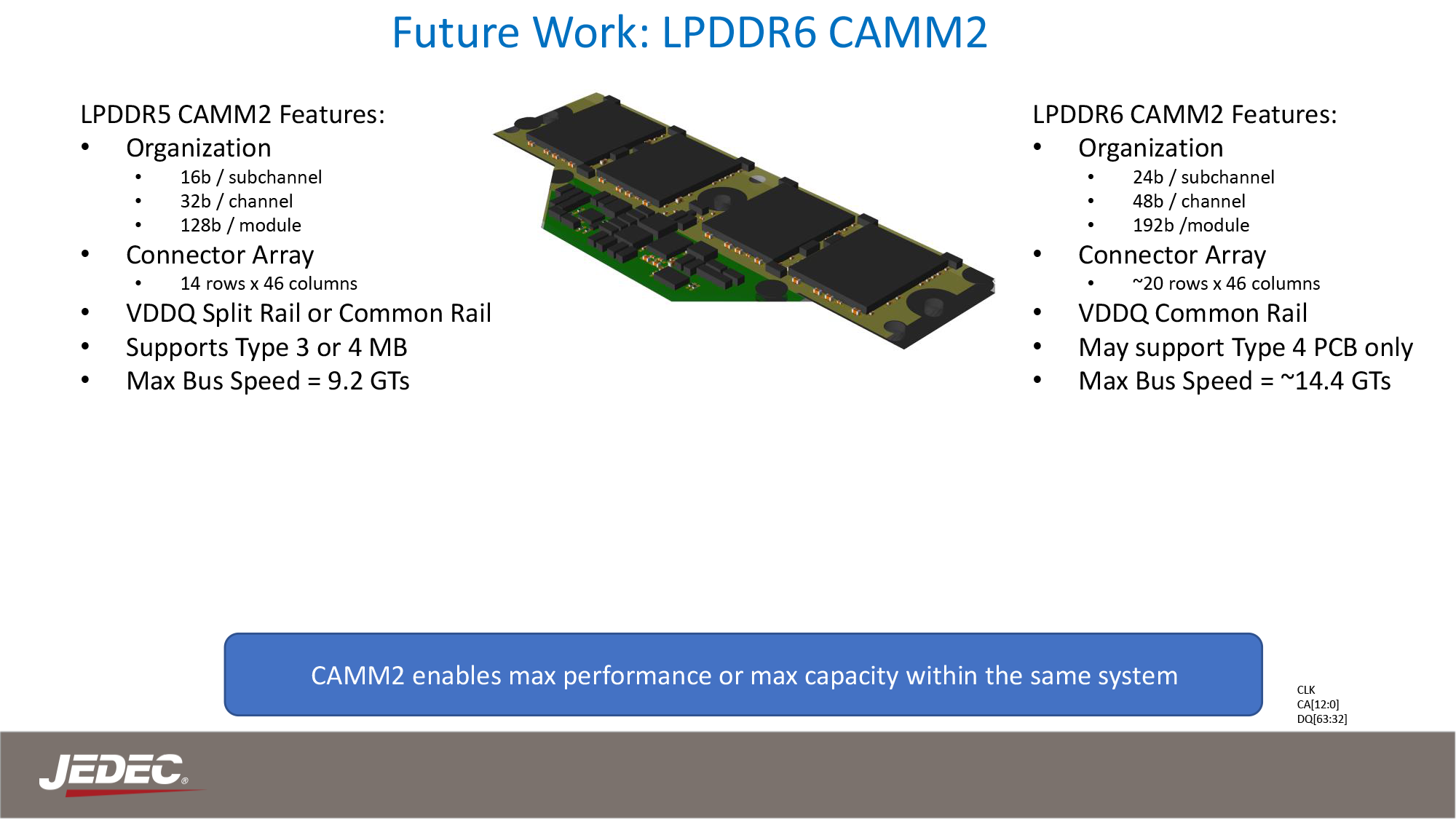

Meanwhile, this week's announcement from JEDEC offers the first significant insight into what to expect from LPDDR6 CAMMs. And despite LPDDR5 CAMMs having barely made it out the door, some significant shifts with LPDDR6 itself mean that JEDEC will need to make some major changes to the CAMM standard to accommodate the newer memory type.

JEDEC Presentation: The CAMM2 Journey and Future Potential

Besides the higher memory clockspeeds allowed by LPDDR6 – JEDEC is targeting data transfer rates of 14.4 GT/s and higher – the new memory form-factor will also incorporate an altogether new connector array. This is to accommodate LPDDR6's wider memory bus, which sees the channel width of an individual memory chip grow from 16 bits to 24 bits. As a result, the current LPCAMM design, which is intended to match the PC standard of a cumulative 128-bit (16x8) bus, needs to be reconfigured to match LPDDR6's alterations.
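The width arithmetic behind this change can be sketched in a few lines. The example assumes the module keeps the same eight per-chip channels implied by the "16x8" figure and simply widens each one to 24 bits; the bandwidth line is a theoretical peak at the 14.4 GT/s target, not a JEDEC-published number, and both function names are hypothetical.

```python
def bus_width_bits(bits_per_channel: int, channels: int = 8) -> int:
    """Cumulative module bus width; channels=8 follows the article's '16x8'."""
    return bits_per_channel * channels


def peak_bandwidth_gb_s(width_bits: int, rate_gt_s: float) -> float:
    """Theoretical peak bandwidth in GB/s (one bit per pin per transfer)."""
    return width_bits * rate_gt_s / 8


lpddr5_width = bus_width_bits(16)  # current LPCAMM design
lpddr6_width = bus_width_bits(24)  # assumed: same channel count, wider channels
lpddr6_peak = peak_bandwidth_gb_s(lpddr6_width, 14.4)

print(f"LPDDR5 LPCAMM: {lpddr5_width}-bit, LPDDR6 LPCAMM: {lpddr6_width}-bit")
print(f"LPDDR6 theoretical peak at 14.4 GT/s: {lpddr6_peak:.1f} GB/s")
```

Because the bus grows by half, the existing connector cannot simply be reused, which is why a new connector array is needed.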

Ultimately, JEDEC is targeting a 24-bit subchannel/48-bit channel design, which will result in a 192-bit wide LPCAMM. Meanwhile, the LPCAMM connector itself is set to grow from 14 rows of pins to possibly as many as 20. New memory technologies typically require new DIMMs to begin with, so it's important to clarify that this is not unexpected, but at th...

Memory

Report: China to Pivot from AMD & Intel CPUs To Domestic Chips in Government PCs

China has initiated a policy shift to eliminate American processors from government computers and servers, reports the Financial Times. The decision aims to gradually eliminate processors from AMD and Intel from systems used by China's government agencies, which will mean lower sales for U.S.-based chipmakers and higher sales of China's own CPUs.

The new procurement guidelines, introduced quietly at the end of 2023, require government entities to prioritize 'safe and reliable' processors and operating systems in their purchases. This directive is part of a concerted effort to bolster domestic technology and parallels a similar push within state-owned enterprises to embrace technology designed in China.

The list of approved processors and operating systems, published by China's Information Technology Security Evaluation Center, exclusively features Chinese companies. There are 18 approved processors that use a mix of architectures, including x86 and ARM, while the operating systems are based on open-source Linux software. Notably, the list includes chips from Huawei and Phytium, both of which are on the U.S. export blacklist.

This shift towards domestic technology is a cornerstone of China's national strategy for technological autonomy in the military, government, and state sectors. The guidelines provide clear and detailed instructions for exclusively using Chinese processors, marking a significant step in China's quest for self-reliance in technology.

State-owned enterprises have been instructed to complete their transition to domestic CPUs by 2027. Meanwhile, Chinese government entities have to submit progress reports on their IT system overhauls quarterly. Although some foreign technology will still be permitted, the emphasis is clearly on adopting local alternatives.

The move away from foreign hardware is expected to have a measurable impact on American tech companies. China is a major market for AMD (accounting for 15% of sales last year) and Intel (commanding 27% of Intel's revenue), contributing to a substantial portion of their sales. Additionally, Microsoft, while not disclosing specific figures, has acknowledged that China accounts for a small percentage of its revenues. And while government sales are only a fraction of overall China sales (as compared to the larger commercial PC business), the Chinese government is by no means a small customer.

Analysts questioned by the Financial Times predict that the transition to domestic processors will advance more swiftly for server processors than for client PCs, due to the less complex software ecosystem needing replacement. They estimate that China will need to invest approximately $91 billion from 2023 to 2027 to overhaul the IT infrastructure in government and adjacent industries.

CPUs

TSMC: Performance and Yields of 2nm on Track, Mass Production To Start In 2025

In addition to revealing its roadmap and plans concerning its current leading-edge process technologies, TSMC also shared progress on its N2 node as part of its 2024 Symposiums. N2 is the company's first 2nm-class fabrication node and predominantly features gate-all-around transistors. According to TSMC, it has almost achieved its target performance and yield goals, which places it on track to enter high-volume manufacturing in the second half of 2025.

TSMC states that 'N2 development is well on track and N2P is next.' In particular, gate-all-around nanosheet devices currently achieve over 90% of their expected performance, whereas yields of 256 Mb SRAM (32 MB) devices already exceed 80%, depending on the batch. All of this for a node that is over a year away from mass production.

Meanwhile, the average yield of a 256 Mb SRAM was around 70% as of March 2024, up from around 35% in April 2023. Device performance has also been improving, with higher frequencies being achieved while keeping power consumption in check.

Chip designer interest in TSMC's first 2nm-class gate-all-around nanosheet transistor-based technology is significant, too. The number of new tape-outs (NTOs) in the first year of N2 is more than two times higher than it was for N5. That said, given TSMC's close working relationship with a handful of high-volume vendors – most notably Apple – NTOs can be a very misleading figure, since the first year of a new node at TSMC is capacity constrained, and consequently the bulk of that capacity goes to TSMC's priority partners.

Meanwhile, there were considerably more N5 tape-outs in its second year (some were N5P, of course) and N2 promises to have 2.6X more NTOs in its second year. So the node indeed looks quite promising. In fact, based on TSMC's slides (which we're unfortunately not able to republish), N2 is more popular than N3 in terms of NTOs both in the first and the second years of existence.

When it comes to the second year of N2, in the second half of 2026 TSMC plans to roll out its N2P technology, which promises additional performance and power benefits. N2P is expected to improve frequency by 15% - 20%, reduce power consumption by 30% - 40%, and increase chip density by over 1.15 times compared to N3E, significant benefits from the move to all-new GAA nanosheet transistors.

Finally, for those companies that need the best in performance, power, and density, TSMC is poised to offer their A16 process in 2026. That node will also bring in backside power delivery, which will add costs, but is expected to greatly improve performance efficiency and scaling.

Semiconductors

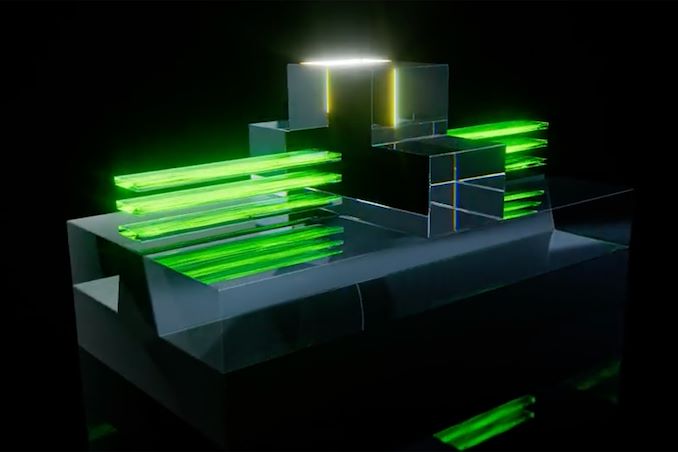

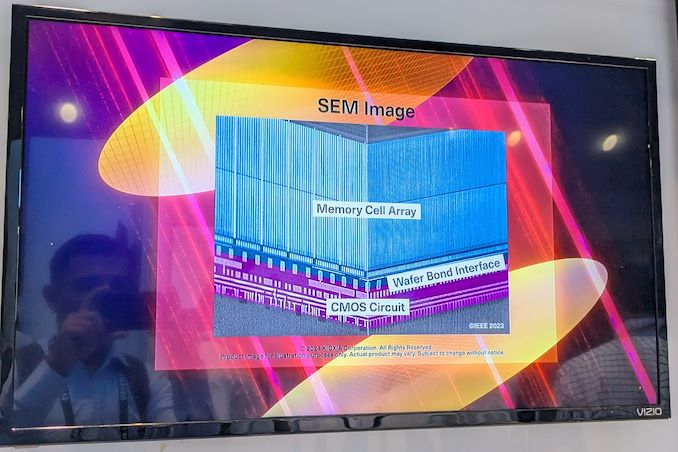

Kioxia Details BiCS 8 NAND at FMS 2024: 218 Layers With Superior Scaling

Kioxia's booth at FMS 2024 was a busy one with multiple technology demonstrations keeping visitors occupied. A walk-through of the BiCS 8 manufacturing process was the first to grab my attention. Kioxia and Western Digital announced the sampling of BiCS 8 in March 2023. We had touched briefly upon its CMOS Bonded Array (CBA) scheme in our coverage of Kioxia's 2Tb QLC NAND device and coverage of Western Digital's 128 TB QLC enterprise SSD proof-of-concept demonstration. At Kioxia's booth, we got more insights.

Traditionally, fabrication of flash chips involved placement of the associated logic circuitry (CMOS process) around the periphery of the flash array. The process then moved on to putting the CMOS under the cell array, but the wafer development process was serialized, with the CMOS logic getting fabricated first, followed by the cell array on top. However, this poses some challenges, because the cell array requires a high-temperature processing step to ensure higher reliability, and that step can be detrimental to the health of the CMOS logic. Thanks to recent advancements in wafer bonding techniques, the new CBA process allows the CMOS wafer and cell array wafer to be processed independently in parallel and then pieced together, as shown in the models above.

The BiCS 8 3D NAND incorporates 218 layers, compared to 112 layers in BiCS 5 and 162 layers in BiCS 6. The company decided to skip over BiCS 7 (or, rather, it was probably a short-lived generation meant as an internal test vehicle). The generation retains the four-plane charge trap structure of BiCS 6. In its TLC avatar, it is available as a 1 Tbit device. The QLC version is available in two capacities - 1 Tbit and 2 Tbit.

Kioxia also noted that while the number of layers (218) doesn't compare favorably with the latest layer counts from the competition, its lateral scaling / cell shrinkage has enabled it to be competitive in terms of bit density as well as operating speeds (3200 MT/s). For reference, the latest shipping NAND from Micron - the G9 - has 276 layers with a bit density in TLC mode of 21 Gbit/mm², and operates at up to 3600 MT/s. However, its 232L NAND operates only up to 2400 MT/s and has a bit density of 14.6 Gbit/mm².

It must be noted that the CBA hybrid bonding process has advantages over the current processes used by other vendors - including Micron's CMOS under array (CuA) and SK hynix's 4D PUC (periphery-under-chip) developed in the late 2010s. It is expected that other NAND vendors will also move eventually to some variant of the hybrid bonding scheme used by Kioxia.

Storage

The AMD Ryzen AI 9 HX 370 Review: Unleashing Zen 5 and RDNA 3.5 Into Notebooks During the opening keynote delivered by AMD CEO Dr. Lisa Su at Computex 2024, AMD finally lifted the lid on their highly-anticipated Zen 5 microarchitecture. The backbone for the next couple of years of everything CPU at AMD, the company unveiled their plans to bring Zen 5 in the consumer market, announcing both their next-generation mobile and desktop products at the same time. With a tight schedule that will see both platforms launch within weeks of each other, today AMD is taking their first step with the launch of the Ryzen AI 300 series – codenamed Strix Point – their new Zen 5-powered mobile SoC.

The latest and greatest from AMD, the Strix Point brings significant architectural improvements across AMD's entire IP portfolio. Headlining the chip, of course, is the company's new Zen 5 CPU microarchitecture, which is taking multiple steps to improve on CPU performance without the benefits of big clockspeed gains. And reflecting the industry's current heavy emphasis on AI performance, Strix Point also includes the latest XDNA 2-based NPU, which boasts up to 50 TOPS of performance. Other improvements include an upgraded integrated graphics processor, with AMD moving to the RDNA 3.5 graphics architecture.

The architectural updates in Strix Point are also seeing AMD opt for a heterogeneous CPU design from the very start, incorporating both performance and efficiency cores as a means of offering better overall performance in power-constrained devices. AMD first introduced their compact Zen cores in the middle of the Zen 4 generation, and while they made it into products such as AMD's small-die Phoenix 2 platform, this is the first time AMD's flagship mobile silicon has included them as well. And while this change is going to be transparent from a user perspective, under the hood it represents an important improvement in CPU design. As a result, all Ryzen AI 300 chips are going to include a mix of not only AMD's (mostly) full-fat Zen 5 CPU cores, but also their compact Zen 5c cores, boosting the chips' total CPU core counts and performance in multi-threaded situations.

For today's launch, the AMD Ryzen AI 300 series will consist of just three SKUs: the flagship Ryzen AI 9 HX 375, with 12 CPU cores, as well as the Ryzen AI 9 HX 370 and Ryzen AI 9 365, with 12 and 10 cores respectively. All three SoCs combine regular Zen 5 cores with the more compact Zen 5c cores to make up the CPU cluster, and are paired with a powerful Radeon 890M/880M GPU and an XDNA 2-based NPU.

As the successor to the Zen 4-based Phoenix/Hawk Point, the AMD Ryzen AI 300 series is targeting a diverse and active notebook market that has become the largest segment of the PC industry overall. And it is telling that, for the first time in the Zen era, AMD is launching their mobile chips first – if only by days – rather than their typical desktop-first launch. It's both a reflection on how the PC industry has changed over the years, and how AMD has continued to iterate and improve upon its mobile chips; this is as close to mobile-first as the company has ever been.

Getting down to business, for our review of the Ryzen AI 300 series, we are taking a look at ASUS's Zenbook S 16 (2024), a 16-inch laptop that's equipped with AMD's Ryzen AI 9 HX 370. The slightly more modest Ryzen chip features four Zen 5 CPU cores and eight Zen 5c CPU cores, as well as AMD's latest RDNA 3.5 Radeon 890M integrated graphics. Overall, the HX 370 has a configurable TDP of between 15 and 54 W, depending on the desired notebook configuration.

Fleshing out the rest of the Zenbook S 16, ASUS has equipped the laptop with a bevy of features and technologies fitting for a flagship Ryzen notebook. The centerpiece of the laptop is a Lumina OLED 16-inch display, with a resolution of up to 2880 x 1800 and a variable 120 Hz refresh rate. Meanwhile, inside the Zenbook S 16 is 32 GB of LPDDR5 memory and a 1 TB PCIe 4.0 NVMe SSD. And while this is a 16-inch class notebook, ASUS has still designed it with an emphasis on portability, leading to the Zenbook S 16 coming in at 1.1 cm thick and weighing 1.5 kg. That petite design also means ASUS has configured the Ryzen AI 9 HX 370 chip inside rather conservatively: out of the box, the chip runs at a TDP of just 17 Watts.

CPUs


- https://www.amazon.com/Protector-Coverage-Protection-Installation-Specially/dp/B0B87THFLK?tag=all0ad0-21https://m.media-amazon.com/images/I/41tlgaNVLCL.jpg

- https://www.amazon.com/Protectors-Furniture-Scratches-Hardwood-Large/dp/B0CHB4ZR22?tag=all0ad0-21https://m.media-amazon.com/images/I/51UIaW9XlXL.jpg

- https://www.amazon.com/Purifier-Purifiers-VEWIOR-Settings-Ultra-Quiet/dp/B0B41Z7B6H?tag=all0ad0-21https://m.media-amazon.com/images/I/41o0rCSrKcL.jpg

- https://www.amazon.com/Purifiers-Purifier-Aromatherapy-Function-Filtration/dp/B0C5GCPDV2?tag=all0ad0-21https://m.media-amazon.com/images/I/41KvpYD7k5L.jpg

- https://www.amazon.com/QAWDAWM-Conduction-Headphones-Bluetooth-Waterproof/dp/B0CGR1799N?tag=all0ad0-21https://m.media-amazon.com/images/I/41trzwTzdKL.jpg

- https://www.amazon.com/REDESS-Beanie-Women-Winter-Slouchy/dp/B0BZVDHFNJ?tag=all0ad0-21https://m.media-amazon.com/images/I/51hHyl+rkWL.jpg

- https://www.amazon.com/Robot-Vacuum-Mop-Combo-Self-Charging/dp/B0CD3XTMS1?tag=all0ad0-21https://m.media-amazon.com/images/I/518O5sALu1L.jpg

- https://www.amazon.com/RONGPRO-Combination-Carpenter-Zinc-Alloy-Die-Casting/dp/B09GVQRZVK?tag=all0ad0-21https://m.media-amazon.com/images/I/41uHEayHMEL.jpg

- https://www.amazon.com/ROOMLIFE-Chenille-Slipcover-Loveseat-Sectional/dp/B0BJ5T5Z91?tag=all0ad0-21https://m.media-amazon.com/images/I/51VNyhUtziL.jpg

- https://www.amazon.com/Ryan-Rule-York-Ruthless-Book/dp/B0BPJSLNDX?tag=all0ad0-21https://m.media-amazon.com/images/I/51o9mZQpbgL.jpg

- https://www.amazon.com/Sandstorm-Street-Rats-Aramoor-Book/dp/B09QDYGXGT?tag=all0ad0-21https://m.media-amazon.com/images/I/61g7nbsvDiL.jpg

- https://www.amazon.com/Santoku-Knife-Stainless-Ergonomic-Restaurant/dp/B0865TNBKC?tag=all0ad0-21https://m.media-amazon.com/images/I/41bVfVBEhOL.jpg

- https://www.amazon.com/SATC-Woodworking-Carpenters-Gardening-Resistant/dp/B09WYGJJ3F?tag=all0ad0-21https://m.media-amazon.com/images/I/31U+zqjEzNL.jpg

- https://www.amazon.com/Scissors-ULG-Hairdressing-Stainless-Detachable/dp/B09ZTZYDT2?tag=all0ad0-21https://m.media-amazon.com/images/I/315+PnPQhmL.jpg

- https://www.amazon.com/SeaVees-Mens-Standard-Casual-Sneaker/dp/B008TUCU1A?tag=all0ad0-21https://m.media-amazon.com/images/I/31z4PJ-dnQL.jpg

- https://www.amazon.com/Security-Lighting-Waterproof-Outdoor-Basketball/dp/B09GV2B545?tag=all0ad0-21https://m.media-amazon.com/images/I/41Hh9Px9UzL.jpg

- https://www.amazon.com/Shadow-Dark-Queen-Serpentwar-Saga/dp/B07YCT8PKM?tag=all0ad0-21https://m.media-amazon.com/images/I/517+deIbkiL.jpg

- https://www.amazon.com/Shadowplay-Spellmonger-Legacy-Secrets-Book/dp/B09DZ4S8MG?tag=all0ad0-21https://m.media-amazon.com/images/I/51mV-QcOzOL.jpg

- https://www.amazon.com/Shamrocks-Bedroom-Bathroom-Kitchen-Hallway/dp/B07ZV5DSTN?tag=all0ad0-21https://m.media-amazon.com/images/I/51J0KqPk6ML.jpg

- https://www.amazon.com/Silicone-Reusable-AiKanbo-Airtight-Preservation/dp/B09X1LXS9B?tag=all0ad0-21https://m.media-amazon.com/images/I/41fNI3t5hYS.jpg

- https://www.amazon.com/Sixriver-Crimper-Straightener-Crimping-Volumizing/dp/B0C85XZFM8?tag=all0ad0-21https://m.media-amazon.com/images/I/51OrfENeNCL.jpg

- https://www.amazon.com/Skyfoot-Adjustable-Increase-Insoles-Cushioning/dp/B0BN5V3PPF?tag=all0ad0-21https://m.media-amazon.com/images/I/41uwN2FiiiL.jpg

- https://www.amazon.com/skysen-Magnetic-Decorative-Handles-Hardware/dp/B07L72D7FX?tag=all0ad0-21https://m.media-amazon.com/images/I/51AIynaJumL.jpg

- https://www.amazon.com/STAR-WARS-SW-Halloween-Wookiee/dp/B0BHZVTNQV?tag=all0ad0-21https://m.media-amazon.com/images/I/411fjZsBYrL.jpg

- https://www.amazon.com/Stens-375-402-Black-Decker-82-020/dp/B01H5K2QSQ?tag=all0ad0-21https://m.media-amazon.com/images/I/21Tkj5Btb0L.jpg

- https://www.amazon.com/Stickers-Bottles-Waterproof-Animals-Skateboard/dp/B09S3JSNV3?tag=all0ad0-21https://m.media-amazon.com/images/I/61E2JiiBkML.jpg

- https://www.amazon.com/Still-Just-Geek-Annotated-Memoir/dp/B09HZB3WGP?tag=all0ad0-21https://m.media-amazon.com/images/I/51Ee05wyCwL.jpg

- https://www.amazon.com/Straightener-MiroPure-Straightening-Anti-Scald-Temperature/dp/B06XGXP9RP?tag=all0ad0-21https://m.media-amazon.com/images/I/41okvbcUEnL.jpg

- https://www.amazon.com/Summit-Treestands-Replacement-Cables-Climbing/dp/B001BAGLXI?tag=all0ad0-21https://m.media-amazon.com/images/I/41vANZDPuCL.jpg

- https://www.amazon.com/Support-Car-Car-Support-Memory-Car-Driving/dp/B07MV5X84K?tag=all0ad0-21https://m.media-amazon.com/images/I/51iEP2LoKmL.jpg

- https://www.amazon.com/Surface-Charger-Microsoft-Compatible-Laptop/dp/B0BW41H1W2?tag=all0ad0-21https://m.media-amazon.com/images/I/31Xt8mDjKyL.jpg

- https://www.amazon.com/Survival-Essentials-Tactical-Emergency-Activities/dp/B0BFFC8ZTV?tag=all0ad0-21https://m.media-amazon.com/images/I/512QQyOIcjL.jpg

- https://www.amazon.com/SwaggWood-Certified-Lightning-Charging-Compatible/dp/B0CFQF6LS6?tag=all0ad0-21https://m.media-amazon.com/images/I/41vq56QWFNL.jpg

- https://www.amazon.com/Tablecloth-Disposable-Surfboard-Rectangle-Birthday/dp/B09X2GK6Z7?tag=all0ad0-21https://m.media-amazon.com/images/I/51M30CIsW2L.jpg

- https://www.amazon.com/Tanming-Womens-Seamless-Workout-Running/dp/B0BHY73PWB?tag=all0ad0-21https://m.media-amazon.com/images/I/310AJZGSfCL.jpg

- https://www.amazon.com/Tear-off-Productivity-Anna-Marie-Collections/dp/B09GDDCMJP?tag=all0ad0-21https://m.media-amazon.com/images/I/41yZVQwPEZL.jpg

- https://www.amazon.com/Textures-Graphite-Charcoal-Steven-Pearce-ebook/dp/B01N7Y0XP5?tag=all0ad0-21https://m.media-amazon.com/images/I/61XIPXXBL5L.jpg

- https://www.amazon.com/The-Beginning-of-Everything-audiobook/dp/B081NXJVT5?tag=all0ad0-21https://m.media-amazon.com/images/I/51aG06izsLL.jpg

- https://www.amazon.com/The-Enlightenment/dp/B07VHFHN3Y?tag=all0ad0-21https://m.media-amazon.com/images/I/51ArbS5NSDL.jpg

- https://www.amazon.com/The-Good-Lie/dp/B08QVNGF3M?tag=all0ad0-21https://m.media-amazon.com/images/I/51UPMGqzseS.jpg

- https://www.amazon.com/Thermal-Moisture-Wicking-Breathable-Charcoal/dp/B0929CZSL2?tag=all0ad0-21https://m.media-amazon.com/images/I/61GFFLF2InL.jpg

- https://www.amazon.com/Tiny-Worlds-flashcards-Preschoolers-FlashCards/dp/9811186480?tag=all0ad0-21https://m.media-amazon.com/images/I/51Du0jKtPzL.jpg

- https://www.amazon.com/TOZO-G1-Headphones-Sensitivity-Low-Latency/dp/B0B31GZW61?tag=all0ad0-21https://m.media-amazon.com/images/I/41E2gk95+aL.jpg

- https://www.amazon.com/Traffic-Secrets-Underground-Playbook-Customers/dp/B08B9XH6KH?tag=all0ad0-21https://m.media-amazon.com/images/I/51hWa7NS0NL.jpg

- https://www.amazon.com/Twinkle-Star-Decorative-Waterproof-Decorations/dp/B098JY29L7?tag=all0ad0-21https://m.media-amazon.com/images/I/616wtcSojML.jpg

- https://www.amazon.com/TWOPAN-Docking-Station-Charging-Reader/dp/B08DP397VJ?tag=all0ad0-21https://m.media-amazon.com/images/I/51uMUogb87L.jpg

- https://www.amazon.com/ULA-YUAN-Earrings-Sterling-Lightweight-Zirconia/dp/B0C4TD5XT1?tag=all0ad0-21https://m.media-amazon.com/images/I/41xB7mwzhLL.jpg

- https://www.amazon.com/Ultrean-Scale%EF%BC%8C33lb-Graduation-Rechargeable-Function/dp/B0C4T7DYPF?tag=all0ad0-21https://m.media-amazon.com/images/I/51AeRXTVp5L.jpg

- https://www.amazon.com/undercoat-grooming-couch-remover-cleaning/dp/B0BQXW1KKT?tag=all0ad0-21https://m.media-amazon.com/images/I/412YRiMjcUL.jpg

- https://www.amazon.com/Unfinished-Large-Unpainted-Birthday-Decoration/dp/B0C8YQCQBY?tag=all0ad0-21https://m.media-amazon.com/images/I/31bPd6gVmSL.jpg

- https://www.amazon.com/UniLiGis-Washable-Backpack-Adjustable-Drawstring/dp/B08CDZDKP2?tag=all0ad0-21https://m.media-amazon.com/images/I/413CAK6mBXL.jpg

- https://www.amazon.com/Uniquewise-QI003353R-L-Handcrafted-Burgundy-Natural/dp/B07F866473?tag=all0ad0-21https://m.media-amazon.com/images/I/313YcWeXFoL.jpg

- https://www.amazon.com/VacLife-Cordless-Charger-350CFM-Electric-High-Speed/dp/B0C6MZZLTT?tag=all0ad0-21https://m.media-amazon.com/images/I/41bEaK78nKL.jpg

- https://www.amazon.com/VAV-Infrared-Strong-Diffuser-Concentrator/dp/B07H8SR9K7?tag=all0ad0-21https://m.media-amazon.com/images/I/41uNl6-KSPL.jpg

- https://www.amazon.com/Vilucks-Reusable-Universal-Microfiber-Cloths/dp/B09TPPK2T6?tag=all0ad0-21https://m.media-amazon.com/images/I/21tCfu9A9wL.jpg

- https://www.amazon.com/Vooii-iPhone-Silicone-Protective-Microfiber/dp/B07Z7LY135?tag=all0ad0-21https://m.media-amazon.com/images/I/41LTfdLnNVL.jpg

- https://www.amazon.com/Walking-Sam-Father-Hundred-Across/dp/B0BJ14HHVD?tag=all0ad0-21https://m.media-amazon.com/images/I/51xw7IcZbAL.jpg

- https://www.amazon.com/Watchers-gripping-debut-horror-novel-ebook/dp/B08TP9ZQY5?tag=all0ad0-21https://m.media-amazon.com/images/I/41g5T7Vj8vL.jpg

- https://www.amazon.com/Waterproof-Exquisitely-Lengthening-Thickening-Smudge-Proof/dp/B09FJK67YH?tag=all0ad0-21https://m.media-amazon.com/images/I/51X6cb5qVvL.jpg

- https://www.amazon.com/Waterproof-Toddler-Automatic-Sprinkler-Induction/dp/B0BFB53X61?tag=all0ad0-21https://m.media-amazon.com/images/I/51hZjr8pPCL.jpg

- https://www.amazon.com/Webroot-Internet-Antivirus-Protection-Subscription/dp/B07DDL3N69?tag=all0ad0-21https://m.media-amazon.com/images/I/41engcvUs-L.jpg

- https://www.amazon.com/Welcome-Retriever-Sunflowers-Farmhouse-Decorations/dp/B0BX4CGRZ6?tag=all0ad0-21https://m.media-amazon.com/images/I/51mDBvSQDgL.jpg

- https://www.amazon.com/Westinghouse-6361700-One-Light-Barnwood-Accents/dp/B07FV24JKY?tag=all0ad0-21https://m.media-amazon.com/images/I/31CAM-d4pHL.jpg

- https://www.amazon.com/whall-Cordless-Upgraded-Brushless-Lightweight/dp/B0CCS6K9ZQ?tag=all0ad0-21https://m.media-amazon.com/images/I/41yccuwbFUL.jpg

- https://www.amazon.com/Wildfire-Street-Rats-Aramoor-Book/dp/B09TQ2N654?tag=all0ad0-21https://m.media-amazon.com/images/I/616qu3UwE1L.jpg

- https://www.amazon.com/Women-Socks-Winter-Womens-Pairs/dp/B0B51QC6YX?tag=all0ad0-21https://m.media-amazon.com/images/I/51ewD5NWbWL.jpg

- https://www.amazon.com/Womens-Shoulder-Striped-Jumpsuits-Rompers/dp/B09SJ1G85L?tag=all0ad0-21https://m.media-amazon.com/images/I/41YYqXXx5dL.jpg

- https://www.amazon.com/Wozukia-Watercolor-MatInteresting-Amphibians-Decoration/dp/B0BYF9D2FX?tag=all0ad0-21https://m.media-amazon.com/images/I/518pUiLOefL.jpg

- https://www.amazon.com/X-cosrack-Organizer-Adjustable-Multifunctional-Cabinets/dp/B08BZMX161?tag=all0ad0-21https://m.media-amazon.com/images/I/51pk2BkIxjL.jpg

- https://www.amazon.com/XIOYIG-Tabletop-Portable-Concrete-Fireplace/dp/B0BJPD1Y4S?tag=all0ad0-21https://m.media-amazon.com/images/I/31ESaKZWI5L.jpg

- https://www.amazon.com/Y-W-Y-Bracelet-Mermaid-Jewelry-Supplies/dp/B095Y3SGBT?tag=all0ad0-21https://m.media-amazon.com/images/I/81mmuW14WML.jpg

- https://www.amazon.com/YaberAuto-Battery-Portable-Extended-Charging/dp/B0C4Y9NTQT?tag=all0ad0-21https://m.media-amazon.com/images/I/51GZ73w-xSL.jpg

- https://www.amazon.com/Yicostar-Walking-Collapsible-Portable-Dispenser/dp/B08XYWNVPP?tag=all0ad0-21https://m.media-amazon.com/images/I/31XbaAaEM-L.jpg

- https://www.amazon.com/YONHISDAT-Rechargerable-Circulation-360%C2%B0Rotation-Vehicles/dp/B0C1G12PFH?tag=all0ad0-21https://m.media-amazon.com/images/I/412XGJmpeoL.jpg

- https://www.amazon.com/YORKING-Headlights-Rectangular-Freightinger-Oldsmobile/dp/B07CF7JVTN?tag=all0ad0-21https://m.media-amazon.com/images/I/61XCciZBQTL.jpg

- https://www.amazon.com/YOU-WIZV-Keychain-Cartoon-Accessory/dp/B0BPJ3RJL6?tag=all0ad0-21https://m.media-amazon.com/images/I/41kdT+esfOL.jpg

- https://www.amazon.com/YOYI-Sandproof-Lightweight-Waterproof-Festivals/dp/B0C2SRR115?tag=all0ad0-21https://m.media-amazon.com/images/I/61NsvKATXeL.jpg
